dependabot/cargo/cpal-0.16

28 Commits

| Author | SHA1 | Message | Date | |

|---|---|---|---|---|

|

|

18712f31f9

|

Make render and compute pipeline descriptors defaultable. (#19903)

A few versions ago, wgpu made it possible to set shader entry point to `None`, which will select the correct entry point in file where only a single entrypoint is specified. This makes it possible to implement `Default` for pipeline descriptors. This PR does so and attempts to `..default()` everything possible. |
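As a rough illustration of what a defaultable descriptor enables, the sketch below uses a hypothetical, simplified stand-in type (`ComputePipelineDescriptorSketch` and its fields are invented here and are not Bevy's or wgpu's real types). It only shows how an `Option` entry point of `None` lets `Default` be derived, so callers can fill in the rest with struct update syntax.

```rust
/// Hypothetical, simplified stand-in for a pipeline descriptor.
/// The field names are assumptions made for this sketch.
#[derive(Debug, Default, Clone, PartialEq)]
struct ComputePipelineDescriptorSketch {
    label: Option<String>,
    shader_path: String,
    /// `None` means "use the only entry point in the shader module".
    entry_point: Option<String>,
    push_constant_size: u32,
}

/// Sets only the fields the caller cares about; everything else is defaulted.
fn make_descriptor() -> ComputePipelineDescriptorSketch {
    ComputePipelineDescriptorSketch {
        label: Some("my_pipeline".to_string()),
        shader_path: "shaders/example.wgsl".to_string(),
        ..Default::default()
    }
}

fn main() {
    let descriptor = make_descriptor();
    println!("{descriptor:?}");
}
```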

||

|

|

96dcbc5f8c

|

Upgrade to wgpu version 25.0 (#19563)

# Objective

Upgrade to `wgpu` version `25.0`. Depends on https://github.com/bevyengine/naga_oil/pull/121

## Solution

### Problem

The biggest issue we face upgrading is the following requirement:

> To facilitate this change, there was an additional validation rule put in place: if there is a binding array in a bind group, you may not use dynamic offset buffers or uniform buffers in that bind group. This requirement comes from vulkan rules on UpdateAfterBind descriptors.

This is a major difficulty for us, as there are a number of binding arrays that are used in the view bind group. Note, this requirement does not affect merely uniform buffers that use dynamic offset but the use of *any* uniform in a bind group that also has a binding array.

### Attempted fixes

The easiest fix would be to change uniforms to be storage buffers whenever binding arrays are in use:

```wgsl
#ifdef BINDING_ARRAYS_ARE_USED
@group(0) @binding(0) var<storage> view: array<View>;
@group(0) @binding(1) var<storage> lights: array<types::Lights>;
#else
@group(0) @binding(0) var<uniform> view: View;
@group(0) @binding(1) var<uniform> lights: types::Lights;
#endif
```

This requires passing the view index to the shader so that we know where to index into the buffer:

```wgsl
struct PushConstants {
    view_index: u32,
}

var<push_constant> push_constants: PushConstants;
```

Using push constants is no problem because binding arrays are only usable on native anyway. However, this greatly complicates the ability to access `view` in shaders. For example:

```wgsl
#ifdef BINDING_ARRAYS_ARE_USED
mesh_view_bindings::view[mesh_view_bindings::view_index].view_from_world[0].z
#else
mesh_view_bindings::view.view_from_world[0].z
#endif
```

Using this approach would work but would have the effect of polluting our shaders with ifdef spam basically *everywhere*.

Why not use a function? Unfortunately, the following is not valid wgsl as it returns a binding directly from a function in the uniform path.

```wgsl
fn get_view() -> View {
#if BINDING_ARRAYS_ARE_USED
    let view_index = push_constants.view_index;
    let view = views[view_index];
#endif
    return view;
}
```

This also poses problems for things like lights where we want to return a ptr to the light data. Returning ptrs from wgsl functions isn't allowed even if both bindings were buffers.

The next attempt was to simply use indexed buffers everywhere, in both the binding array and non binding array path. This would be viable if push constants were available everywhere to pass the view index, but unfortunately they are not available on webgpu. This means either passing the view index in a storage buffer (not ideal for such a small amount of state) or using push constants sometimes and uniform buffers only on webgpu. However, this kind of conditional layout infects absolutely everything.

Even if we were to accept just using storage buffer for the view index, there's also the additional problem that some dynamic offsets aren't actually per-view but per-use of a setting on a camera, which would require passing that uniform data on *every* camera regardless of whether that rendering feature is being used, which is also gross.

As such, although it's gross, the simplest solution is just to bump binding arrays into `@group(1)` and all other bindings up one bind group. This should still bring us under the device limit of 4 for most users.

### Next steps / looking towards the future

I'd like to avoid needing to split our view bind group into multiple parts. In the future, if `wgpu` were to add `@builtin(draw_index)`, we could build a list of draw state in gpu processing and avoid the need for any kind of state change at all (see https://github.com/gfx-rs/wgpu/issues/6823). This would also provide significantly more flexibility to handle things like offsets into other arrays that may not be per-view.

### Testing

Tested a number of examples, there are probably more that are still broken.

---------

Co-authored-by: François Mockers <mockersf@gmail.com>

Co-authored-by: Elabajaba <Elabajaba@users.noreply.github.com> |

||

|

|

7645ce91ed

|

Add newlines before impl blocks (#19746)

# Objective Fix https://github.com/bevyengine/bevy/issues/19617 # Solution Add newlines before all impl blocks. I suspect that at least some of these will be objectionable! If there's a desired Bevy style for this then I'll update the PR. If not then we can just close it - it's the work of a single find and replace. |

||

|

|

7b1c9f192e

|

Adopt consistent FooSystems naming convention for system sets (#18900)

# Objective Fixes a part of #14274. Bevy has an incredibly inconsistent naming convention for its system sets, both internally and across the ecosystem. <img alt="System sets in Bevy" src="https://github.com/user-attachments/assets/d16e2027-793f-4ba4-9cc9-e780b14a5a1b" width="450" /> *Names of public system set types in Bevy* Most Bevy types use a naming of `FooSystem` or just `Foo`, but there are also a few `FooSystems` and `FooSet` types. In ecosystem crates on the other hand, `FooSet` is perhaps the most commonly used name in general. Conventions being so wildly inconsistent can make it harder for users to pick names for their own types, to search for system sets on docs.rs, or to even discern which types *are* system sets. To rein in the inconsistency a bit and help unify the ecosystem, it would be good to establish a common recommended naming convention for system sets in Bevy itself, similar to how plugins are commonly suffixed with `Plugin` (ex: `TimePlugin`). By adopting a consistent naming convention in first-party Bevy, we can softly nudge ecosystem crates to follow suit (for types where it makes sense to do so). Choosing a naming convention is also relevant now, as the [`bevy_cli` recently adopted lints](https://github.com/TheBevyFlock/bevy_cli/pull/345) to enforce naming for plugins and system sets, and the recommended naming used for system sets is still a bit open. ## Which Name To Use? Now the contentious part: what naming convention should we actually adopt? This was discussed on the Bevy Discord at the end of last year, starting [here](<https://discord.com/channels/691052431525675048/692572690833473578/1310659954683936789>). `FooSet` and `FooSystems` were the clear favorites, with `FooSet` very narrowly winning an unofficial poll. 
However, it seems to me like the consensus was broadly moving towards `FooSystems` at the end and after the poll, with Cart ([source](https://discord.com/channels/691052431525675048/692572690833473578/1311140204974706708)) and later Alice ([source](https://discord.com/channels/691052431525675048/692572690833473578/1311092530732859533)) and also me being in favor of it. Let's do a quick pros and cons list! Of course these are just what I thought of, so take it with a grain of salt. `FooSet`: - Pro: Nice and short! - Pro: Used by many ecosystem crates. - Pro: The `Set` suffix comes directly from the trait name `SystemSet`. - Pro: Pairs nicely with existing APIs like `in_set` and `configure_sets`. - Con: `Set` by itself doesn't actually indicate that it's related to systems *at all*, apart from the implemented trait. A set of what? - Con: Is `FooSet` a set of `Foo`s or a system set related to `Foo`? Ex: `ContactSet`, `MeshSet`, `EnemySet`... `FooSystems`: - Pro: Very clearly indicates that the type represents a collection of systems. The actual core concept, system(s), is in the name. - Pro: Parallels nicely with `FooPlugins` for plugin groups. - Pro: Low risk of conflicts with other names or misunderstandings about what the type is. - Pro: In most cases, reads *very* nicely and clearly. Ex: `PhysicsSystems` and `AnimationSystems` as opposed to `PhysicsSet` and `AnimationSet`. - Pro: Easy to search for on docs.rs. - Con: Usually results in longer names. - Con: Not yet as widely used. Really the big problem with `FooSet` is that it doesn't actually describe what it is. It describes what *kind of thing* it is (a set of something), but not *what it is a set of*, unless you know the type or check its docs or implemented traits. `FooSystems` on the other hand is much more self-descriptive in this regard, at the cost of being a bit longer to type. Ultimately, in some ways it comes down to preference and how you think of system sets. 
Personally, I was originally in favor of `FooSet`, but have been increasingly on the side of `FooSystems`, especially after seeing what the new names would actually look like in Avian and now Bevy. I prefer it because it usually reads better, is much more clearly related to groups of systems than `FooSet`, and overall *feels* more correct and natural to me in the long term. For these reasons, and because Alice and Cart also seemed to share a preference for it when it was previously being discussed, I propose that we adopt a `FooSystems` naming convention where applicable. ## Solution Rename Bevy's system set types to use a consistent `FooSystems` naming where applicable. - `AccessibilitySystem` → `AccessibilitySystems` - `GizmoRenderSystem` → `GizmoRenderSystems` - `PickSet` → `PickingSystems` - `RunFixedMainLoopSystem` → `RunFixedMainLoopSystems` - `TransformSystem` → `TransformSystems` - `RemoteSet` → `RemoteSystems` - `RenderSet` → `RenderSystems` - `SpriteSystem` → `SpriteSystems` - `StateTransitionSteps` → `StateTransitionSystems` - `RenderUiSystem` → `RenderUiSystems` - `UiSystem` → `UiSystems` - `Animation` → `AnimationSystems` - `AssetEvents` → `AssetEventSystems` - `TrackAssets` → `AssetTrackingSystems` - `UpdateGizmoMeshes` → `GizmoMeshSystems` - `InputSystem` → `InputSystems` - `InputFocusSet` → `InputFocusSystems` - `ExtractMaterialsSet` → `MaterialExtractionSystems` - `ExtractMeshesSet` → `MeshExtractionSystems` - `RumbleSystem` → `RumbleSystems` - `CameraUpdateSystem` → `CameraUpdateSystems` - `ExtractAssetsSet` → `AssetExtractionSystems` - `Update2dText` → `Text2dUpdateSystems` - `TimeSystem` → `TimeSystems` - `AudioPlaySet` → `AudioPlaybackSystems` - `SendEvents` → `EventSenderSystems` - `EventUpdates` → `EventUpdateSystems` A lot of the names got slightly longer, but they are also a lot more consistent, and in my opinion the majority of them read much better. 
For a few of the names I took the liberty of rewording things a bit; definitely open to any further naming improvements. There are still also cases where the `FooSystems` naming doesn't really make sense, and those I left alone. This primarily includes system sets like `Interned<dyn SystemSet>`, `EnterSchedules<S>`, `ExitSchedules<S>`, or `TransitionSchedules<S>`, where the type has some special purpose and semantics. ## Todo - [x] Should I keep all the old names as deprecated type aliases? I can do this, but to avoid wasting work I'd prefer to first reach consensus on whether these renames are even desired. - [x] Migration guide - [x] Release notes |

||

|

|

101fcaa619

|

Combine output_index and indirect_parameters_index into one field in PreprocessWorkItem. (#17853)

The `output_index` field is only used in direct mode, and the

`indirect_parameters_index` field is only used in indirect mode.

Consequently, we can combine them into a single field, reducing the size

of `PreprocessWorkItem`, which

`batch_and_prepare_{binned,sorted}_render_phase` must construct every

frame for every mesh instance, from 96 bits to 64 bits.
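
The size reduction can be checked with a small layout sketch. The struct and field names below are assumptions chosen for illustration (the commit message only names `output_index` and `indirect_parameters_index`), and a third 32-bit input index is assumed in order to reach the stated 96 bits.

```rust
use std::mem::size_of;

/// Assumed layout before the change: three 32-bit fields.
#[allow(dead_code)]
#[repr(C)]
struct WorkItemBefore {
    input_index: u32,               // assumed extra field
    output_index: u32,              // used only in direct mode
    indirect_parameters_index: u32, // used only in indirect mode
}

/// Assumed layout after the change: the two mode-specific fields share one slot.
#[allow(dead_code)]
#[repr(C)]
struct WorkItemAfter {
    input_index: u32,
    output_or_indirect_parameters_index: u32, // hypothetical combined field name
}

fn main() {
    println!("before: {} bits", size_of::<WorkItemBefore>() * 8); // 96
    println!("after:  {} bits", size_of::<WorkItemAfter>() * 8); // 64
}
```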

|

||

|

|

0ede857103

|

Build batches across phases in parallel. (#17764)

Currently, invocations of `batch_and_prepare_binned_render_phase` and `batch_and_prepare_sorted_render_phase` can't run in parallel because they write to scene-global GPU buffers. After PR #17698, `batch_and_prepare_binned_render_phase` started accounting for the lion's share of the CPU time, causing us to be strongly CPU bound on scenes like Caldera when occlusion culling was on (because of the overhead of batching for the Z-prepass). Although I eventually plan to optimize `batch_and_prepare_binned_render_phase`, we can obtain significant wins now by parallelizing that system across phases. This commit splits all GPU buffers that `batch_and_prepare_binned_render_phase` and `batch_and_prepare_sorted_render_phase` touch into separate buffers for each phase so that the scheduler will run those phases in parallel. At the end of batch preparation, we gather the render phases up into a single resource with a new *collection* phase. Because we already run mesh preprocessing separately for each phase in order to make occlusion culling work, this is actually a cleaner separation. For example, mesh output indices (the unique ID that identifies each mesh instance on GPU) are now guaranteed to be sequential starting from 0, which will simplify the forthcoming work to remove them in favor of the compute dispatch ID. On Caldera, this brings the frame time down to approximately 9.1 ms with occlusion culling on. |
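
The per-phase split followed by a collection step might look roughly like the sketch below. It uses plain scoped threads rather than Bevy's scheduler, and `batch_phase` and `batch_all_phases` are hypothetical names; the point is only that each phase owns its buffer, so its output indices start at 0 and no shared buffer is written concurrently.

```rust
use std::thread;

/// Hypothetical per-phase batching: each phase writes only to its own buffer,
/// so output indices are sequential starting from 0 within the phase.
fn batch_phase(instance_count: usize) -> Vec<u32> {
    (0..instance_count as u32).collect()
}

/// Runs every phase on its own thread, then gathers the per-phase buffers
/// (the "collection" step) in phase order.
fn batch_all_phases(phase_sizes: &[usize]) -> Vec<Vec<u32>> {
    thread::scope(|s| {
        let handles: Vec<_> = phase_sizes
            .iter()
            .map(|&n| s.spawn(move || batch_phase(n)))
            .collect();
        handles.into_iter().map(|h| h.join().unwrap()).collect()
    })
}

fn main() {
    let collected = batch_all_phases(&[3, 0, 2]);
    println!("{collected:?}");
}
```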

||

|

|

85b366a8a2

|

Cache MeshInputUniform indices in each RenderBin. (#17772)

Currently, we look up each `MeshInputUniform` index in a hash table that maps the main entity ID to the index every frame. This is inefficient, cache unfriendly, and unnecessary, as the `MeshInputUniform` index for an entity remains the same from frame to frame (even if the input uniform changes). This commit changes the `IndexSet` in the `RenderBin` to an `IndexMap` that maps the `MainEntity` to `MeshInputUniformIndex` (a new type that this patch adds for more type safety). On Caldera with parallel `batch_and_prepare_binned_render_phase`, this patch improves that function from 3.18 ms to 2.42 ms, a 31% speedup. |

||

|

|

556b750782

|

Set late indirect parameter offsets every frame again. (#17736)

PR #17684 broke occlusion culling because it neglected to set the indirect parameter offsets for the late mesh preprocessing stage if the work item buffers were already set. This PR moves the update of those values to a new function, `init_work_item_buffers`, which is unconditionally called for every phase every frame. Note that there's some complexity in order to handle the case in which occlusion culling was enabled on one frame and disabled on the next, or vice versa. This was necessary in order to make the occlusion culling toggle in the `occlusion_culling` example work again. |

||

|

|

7fc122ad16

|

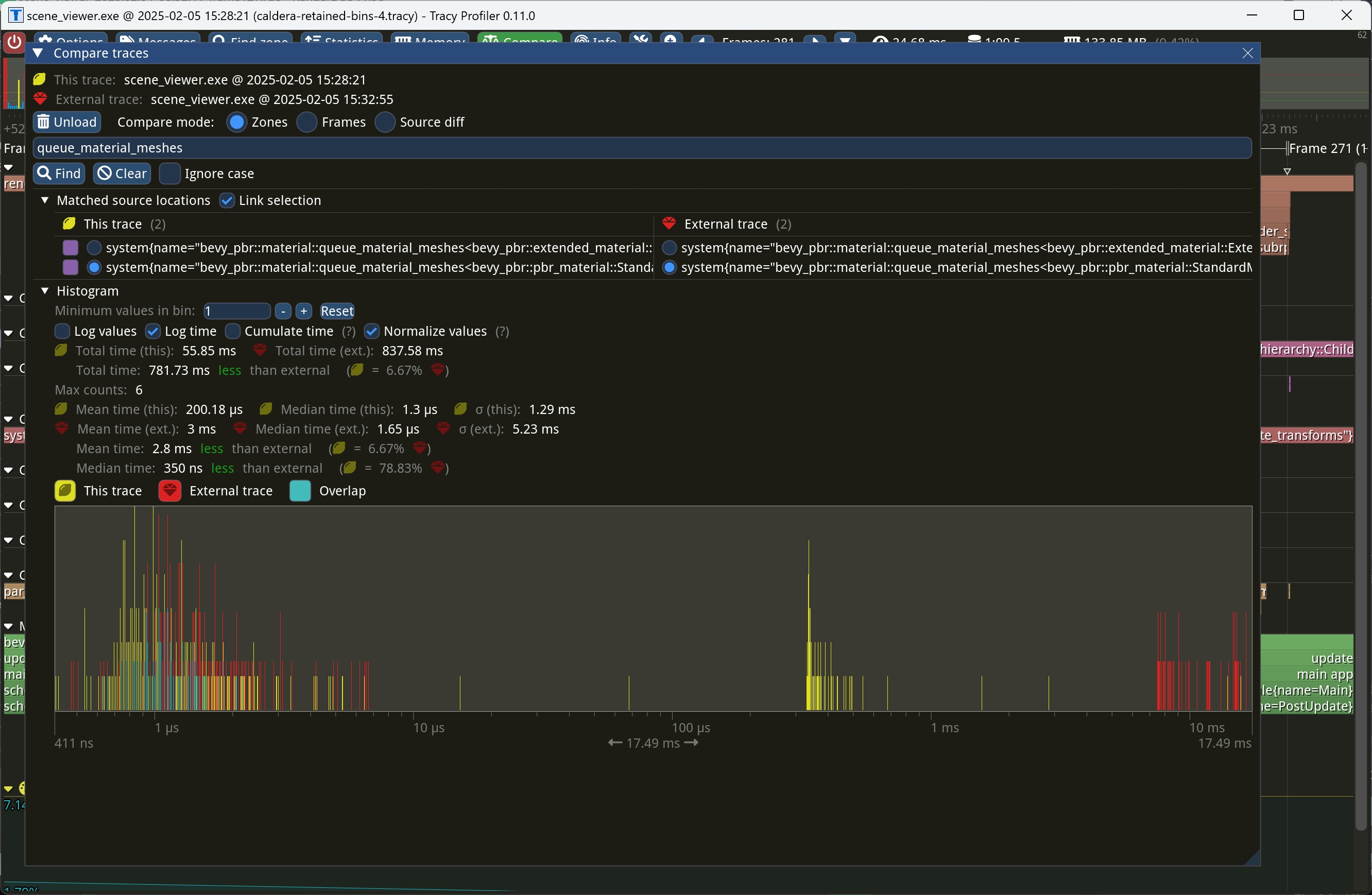

Retain bins from frame to frame. (#17698)

This PR makes Bevy keep entities in bins from frame to frame if they haven't changed. This reduces the time spent in `queue_material_meshes` and related functions to near zero for static geometry. This patch uses the same change tick technique that #17567 uses to detect when meshes have changed in such a way as to require re-binning. In order to quickly find the relevant bin for an entity when that entity has changed, we introduce a new type of cache, the *bin key cache*. This cache stores a mapping from main world entity ID to cached bin key, as well as the tick of the most recent change to the entity. As we iterate through the visible entities in `queue_material_meshes`, we check the cache to see whether the entity needs to be re-binned. If it doesn't, then we mark it as clean in the `valid_cached_entity_bin_keys` bit set. If it does, then we insert it into the correct bin, and then mark the entity as clean. At the end, all entities not marked as clean are removed from the bins. This patch has a dramatic effect on the rendering performance of most benchmarks, as it effectively eliminates `queue_material_meshes` from the profile. Note, however, that it generally simultaneously regresses `batch_and_prepare_binned_render_phase` by a bit (not by enough to outweigh the win, however). I believe that's because, before this patch, `queue_material_meshes` put the bins in the CPU cache for `batch_and_prepare_binned_render_phase` to use, while with this patch, `batch_and_prepare_binned_render_phase` must load the bins into the CPU cache itself. On Caldera, this reduces the time spent in `queue_material_meshes` from 5+ ms to 0.2ms-0.3ms. Note that benchmarking on that scene is very noisy right now because of https://github.com/bevyengine/bevy/issues/17535.  |
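
A minimal sketch of the bin key cache idea follows, assuming integer stand-ins for entities, bin keys, and change ticks. The type and method names are hypothetical, and a `HashSet` stands in for the `valid_cached_entity_bin_keys` bit set; this is not Bevy's implementation.

```rust
use std::collections::{HashMap, HashSet};

type Entity = u32;
type BinKey = u32;
type Tick = u64;

#[derive(Default)]
struct Bins {
    bins: HashMap<BinKey, HashSet<Entity>>,
    /// Bin key cache: entity -> (cached bin key, tick at which it was binned).
    cache: HashMap<Entity, (BinKey, Tick)>,
}

impl Bins {
    /// `visible` holds (entity, current bin key, tick of last change).
    /// Returns how many entities had to be binned or re-binned.
    fn queue(&mut self, visible: &[(Entity, BinKey, Tick)]) -> usize {
        let mut clean: HashSet<Entity> = HashSet::new();
        let mut rebinned = 0;
        for &(entity, key, changed) in visible {
            let needs_rebin = match self.cache.get(&entity) {
                Some(&(_, binned_at)) => changed > binned_at,
                None => true,
            };
            if needs_rebin {
                // Drop the entity from its old bin, if it had one.
                if let Some((old_key, _)) = self.cache.insert(entity, (key, changed)) {
                    if let Some(bin) = self.bins.get_mut(&old_key) {
                        bin.remove(&entity);
                    }
                }
                self.bins.entry(key).or_default().insert(entity);
                rebinned += 1;
            }
            clean.insert(entity);
        }
        // Everything not marked clean this frame is removed from the bins.
        let stale: Vec<Entity> = self
            .cache
            .keys()
            .copied()
            .filter(|e| !clean.contains(e))
            .collect();
        for entity in stale {
            if let Some((key, _)) = self.cache.remove(&entity) {
                if let Some(bin) = self.bins.get_mut(&key) {
                    bin.remove(&entity);
                }
            }
        }
        rebinned
    }
}

fn main() {
    let mut bins = Bins::default();
    println!("frame 1: {} binned", bins.queue(&[(1, 10, 1), (2, 10, 1)]));
    println!("frame 2: {} binned", bins.queue(&[(1, 10, 1), (2, 10, 1)]));
}
```

In the second frame nothing has changed, so no entity is re-binned; that is the static-geometry case the commit message describes.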

||

|

|

69b2ae871c

|

Don't reallocate work item buffers every frame. (#17684)

We were calling `clear()` on the work item buffer table, which caused us to deallocate all the CPU side buffers. This patch changes the logic to instead just clear the buffers individually, but leave their backing stores. This has two consequences: 1. To effectively retain work item buffers from frame to frame, we need to key them off `RetainedViewEntity` values and not the render world `Entity`, which is transient. This PR changes those buffers accordingly. 2. We need to clean up work item buffers that belong to views that went away. Amusingly enough, we actually have a system, `delete_old_work_item_buffers`, that tries to do this already, but it wasn't doing anything because the `clear_batched_gpu_instance_buffers` system already handled that. This patch actually makes the `delete_old_work_item_buffers` system useful, by removing the clearing behavior from `clear_batched_gpu_instance_buffers` and instead making `delete_old_work_item_buffers` delete buffers corresponding to nonexistent views. On Bistro, this PR improves the performance of `batch_and_prepare_binned_render_phase` from 61.2 us to 47.8 us, a 28% speedup.  |
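
The difference between clearing the table and clearing each buffer can be sketched with standard collections. Here a `HashMap<u64, Vec<u32>>` stands in for the work item buffer table, and `clear_each` and `delete_old` are hypothetical helper names; `Vec::clear` keeps the allocation, whereas clearing the map itself would drop every `Vec`.

```rust
use std::collections::HashMap;

/// Stand-in for a stable, retained view key.
type ViewKey = u64;

/// Empties every buffer but keeps its backing store for reuse next frame.
fn clear_each(buffers: &mut HashMap<ViewKey, Vec<u32>>) {
    for buffer in buffers.values_mut() {
        buffer.clear();
    }
}

/// Drops buffers that belong to views that no longer exist.
fn delete_old(buffers: &mut HashMap<ViewKey, Vec<u32>>, live_views: &[ViewKey]) {
    buffers.retain(|view, _| live_views.contains(view));
}

fn main() {
    let mut buffers: HashMap<ViewKey, Vec<u32>> = HashMap::new();
    buffers.insert(1, Vec::with_capacity(64));
    buffers.insert(2, vec![1, 2, 3]);
    clear_each(&mut buffers);
    delete_old(&mut buffers, &[1]);
    println!("views kept: {}, capacity: {}", buffers.len(), buffers[&1].capacity());
}
```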

||

|

|

dda97880c4

|

Implement experimental GPU two-phase occlusion culling for the standard 3D mesh pipeline. (#17413)

*Occlusion culling* allows the GPU to skip the vertex and fragment shading overhead for objects that can be quickly proved to be invisible because they're behind other geometry. A depth prepass already eliminates most fragment shading overhead for occluded objects, but the vertex shading overhead, as well as the cost of testing and rejecting fragments against the Z-buffer, is presently unavoidable for standard meshes. We currently perform occlusion culling only for meshlets. But other meshes, such as skinned meshes, can benefit from occlusion culling too in order to avoid the transform and skinning overhead for unseen meshes. This commit adapts the same [*two-phase occlusion culling*] technique that meshlets use to Bevy's standard 3D mesh pipeline when the new `OcclusionCulling` component, as well as the `DepthPrepass` component, are present on the camera. It has these steps: 1. *Early depth prepass*: We use the hierarchical Z-buffer from the previous frame to cull meshes for the initial depth prepass, effectively rendering only the meshes that were visible in the last frame. 2. *Early depth downsample*: We downsample the depth buffer to create another hierarchical Z-buffer, this time with the current view transform. 3. *Late depth prepass*: We use the new hierarchical Z-buffer to test all meshes that weren't rendered in the early depth prepass. Any meshes that pass this check are rendered. 4. *Late depth downsample*: Again, we downsample the depth buffer to create a hierarchical Z-buffer in preparation for the early depth prepass of the next frame. This step is done after all the rendering, in order to account for custom phase items that might write to the depth buffer. Note that this patch has no effect on the per-mesh CPU overhead for occluded objects, which remains high for a GPU-driven renderer due to the lack of `cold-specialization` and retained bins. 
If `cold-specialization` and retained bins weren't on the horizon, then a more traditional approach like potentially visible sets (PVS) or low-res CPU rendering would probably be more efficient than the GPU-driven approach that this patch implements for most scenes. However, at this point the amount of effort required to implement a PVS baking tool or a low-res CPU renderer would probably be greater than landing `cold-specialization` and retained bins, and the GPU driven approach is the more modern one anyway. It does mean that the performance improvements from occlusion culling as implemented in this patch *today* are likely to be limited, because of the high CPU overhead for occluded meshes. Note also that this patch currently doesn't implement occlusion culling for 2D objects or shadow maps. Those can be addressed in a follow-up. Additionally, note that the techniques in this patch require compute shaders, which excludes support for WebGL 2. This PR is marked experimental because of known precision issues with the downsampling approach when applied to non-power-of-two framebuffer sizes (i.e. most of them). These precision issues can, in rare cases, cause objects to be judged occluded that in fact are not. (I've never seen this in practice, but I know it's possible; it tends to be likelier to happen with small meshes.) As a follow-up to this patch, we desire to switch to the [SPD-based hi-Z buffer shader from the Granite engine], which doesn't suffer from these problems, at which point we should be able to graduate this feature from experimental status. I opted not to include that rewrite in this patch for two reasons: (1) @JMS55 is planning on doing the rewrite to coincide with the new availability of image atomic operations in Naga; (2) to reduce the scope of this patch. A new example, `occlusion_culling`, has been added. 
It demonstrates objects becoming quickly occluded and disoccluded by dynamic geometry and shows the number of objects that are actually being rendered. Also, a new `--occlusion-culling` switch has been added to `scene_viewer`, in order to make it easy to test this patch with large scenes like Bistro.

[*two-phase occlusion culling*]: https://medium.com/@mil_kru/two-pass-occlusion-culling-4100edcad501

[Aaltonen SIGGRAPH 2015]: https://www.advances.realtimerendering.com/s2015/aaltonenhaar_siggraph2015_combined_final_footer_220dpi.pdf

[Some literature]: https://gist.github.com/reduz/c5769d0e705d8ab7ac187d63be0099b5?permalink_comment_id=5040452#gistcomment-5040452

[SPD-based hi-Z buffer shader from the Granite engine]: https://github.com/Themaister/Granite/blob/master/assets/shaders/post/hiz.comp

## Migration guide

* When enqueuing a custom mesh pipeline, work item buffers are now created with `bevy::render::batching::gpu_preprocessing::get_or_create_work_item_buffer`, not `PreprocessWorkItemBuffers::new`. See the `specialized_mesh_pipeline` example.

## Showcase

Occlusion culling example: [image]

Bistro zoomed out, before occlusion culling: [image]

Bistro zoomed out, after occlusion culling: [image]

In this scene, occlusion culling reduces the number of meshes Bevy has to render from 1591 to 585. |

||

|

|

35101f3ed5

|

Use multi_draw_indirect_count where available, in preparation for two-phase occlusion culling. (#17211)

This commit allows Bevy to use `multi_draw_indirect_count` for drawing meshes. The `multi_draw_indirect_count` feature works just like `multi_draw_indirect`, but it takes the number of indirect parameters from a GPU buffer rather than specifying it on the CPU. Currently, the CPU constructs the list of indirect draw parameters with the instance count for each batch set to zero, uploads the resulting buffer to the GPU, and dispatches a compute shader that bumps the instance count for each mesh that survives culling. Unfortunately, this is inefficient when we support `multi_draw_indirect_count`. Draw commands corresponding to meshes for which all instances were culled will remain present in the list when calling `multi_draw_indirect_count`, causing overhead. Proper use of `multi_draw_indirect_count` requires eliminating these empty draw commands. To address this inefficiency, this PR makes Bevy fully construct the indirect draw commands on the GPU instead of on the CPU. Instead of writing instance counts to the draw command buffer, the mesh preprocessing shader now writes them to a separate *indirect metadata buffer*. A second compute dispatch known as the *build indirect parameters* shader runs after mesh preprocessing and converts the indirect draw metadata into actual indirect draw commands for the GPU. The build indirect parameters shader operates on a batch at a time, rather than an instance at a time, and as such each thread writes only 0 or 1 indirect draw parameters, simplifying the current logic in `mesh_preprocessing`, which currently has to have special cases for the first mesh in each batch. The build indirect parameters shader emits draw commands in a tightly packed manner, enabling maximally efficient use of `multi_draw_indirect_count`. Along the way, this patch switches mesh preprocessing to dispatch one compute invocation per render phase per view, instead of dispatching one compute invocation per view. 
This is preparation for two-phase occlusion culling, in which we will have two mesh preprocessing stages. In that scenario, the first mesh preprocessing stage must only process opaque and alpha tested objects, so the work items must be separated into those that are opaque or alpha tested and those that aren't. Thus this PR splits out the work items into a separate buffer for each phase. As this patch rewrites so much of the mesh preprocessing infrastructure, it was simpler to just fold the change into this patch instead of deferring it to the forthcoming occlusion culling PR. Finally, this patch changes mesh preprocessing so that it runs separately for indexed and non-indexed meshes. This is because draw commands for indexed and non-indexed meshes have different sizes and layouts. *The existing code is actually broken for non-indexed meshes*, as it attempts to overlay the indirect parameters for non-indexed meshes on top of those for indexed meshes. Consequently, right now the parameters will be read incorrectly when multiple non-indexed meshes are multi-drawn together. *This is a bug fix* and, as with the change to dispatch phases separately noted above, was easiest to include in this patch as opposed to separately. ## Migration Guide * Systems that add custom phase items now need to populate the indirect drawing-related buffers. See the `specialized_mesh_pipeline` example for an example of how this is done. |
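
The compaction performed by the *build indirect parameters* step can be modeled on the CPU as below. This is only a sequential sketch with hypothetical struct and function names; the real work happens in a compute shader, one thread per batch, and the actual buffer layouts are not reproduced here.

```rust
/// Hypothetical per-batch metadata written by mesh preprocessing.
#[derive(Clone, Copy, Debug, PartialEq)]
struct IndirectMetadata {
    mesh_index: u32,
    instance_count: u32, // instances that survived culling
}

/// Hypothetical, simplified indirect draw command.
#[derive(Clone, Copy, Debug, PartialEq)]
struct DrawCommand {
    mesh_index: u32,
    instance_count: u32,
}

/// Emits zero or one draw command per batch, tightly packed, and returns the
/// count that a `multi_draw_indirect_count` style call would read.
fn build_indirect_parameters(metadata: &[IndirectMetadata]) -> (Vec<DrawCommand>, u32) {
    let commands: Vec<DrawCommand> = metadata
        .iter()
        .filter(|m| m.instance_count > 0)
        .map(|m| DrawCommand {
            mesh_index: m.mesh_index,
            instance_count: m.instance_count,
        })
        .collect();
    let count = commands.len() as u32;
    (commands, count)
}

fn main() {
    let metadata = [
        IndirectMetadata { mesh_index: 0, instance_count: 4 },
        IndirectMetadata { mesh_index: 1, instance_count: 0 }, // fully culled
        IndirectMetadata { mesh_index: 2, instance_count: 1 },
    ];
    let (commands, count) = build_indirect_parameters(&metadata);
    println!("{count} draw commands: {commands:?}");
}
```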

||

|

|

141b7673ab

|

Key render phases off the main world view entity, not the render world view entity. (#16942)

We won't be able to retain render phases from frame to frame if the keys are unstable. It's not as simple as simply keying off the main world entity, however, because some main world entities extract to multiple render world entities. For example, directional lights extract to multiple shadow cascades, and point lights extract to one view per cubemap face. Therefore, we key off a new type, `RetainedViewEntity`, which contains the main entity plus a *subview ID*. This is part of the preparation for retained bins. --------- Co-authored-by: ickshonpe <david.curthoys@googlemail.com> |
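
A stable key of this shape could be sketched as follows. `RetainedViewKey` and its fields are hypothetical stand-ins for `RetainedViewEntity`, whose exact fields are not given in the commit message; the sketch only shows why a main entity plus a subview index is enough to tell apart the six cubemap face views of one point light.

```rust
use std::collections::HashMap;

/// Hypothetical stand-in for `RetainedViewEntity`: the main world entity
/// plus a subview index that distinguishes views extracted from it.
#[derive(Clone, Copy, Debug, PartialEq, Eq, Hash)]
struct RetainedViewKey {
    main_entity: u64,
    subview_index: u32,
}

/// A point light extracts to one view per cubemap face.
fn point_light_views(light: u64) -> Vec<RetainedViewKey> {
    (0..6)
        .map(|face| RetainedViewKey { main_entity: light, subview_index: face })
        .collect()
}

fn main() {
    // Render phases keyed by the stable key survive from frame to frame.
    let mut phases: HashMap<RetainedViewKey, Vec<u32>> = HashMap::new();
    for key in point_light_views(42) {
        phases.entry(key).or_default();
    }
    println!("{} retained phases", phases.len());
}
```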

||

|

|

3742e621ef

|

Allow clippy::too_many_arguments to lint without warnings (#17249)

# Objective Many instances of `clippy::too_many_arguments` linting happen to be on systems - functions which we don't call manually, and thus there's not much reason to worry about the argument count. ## Solution Allow `clippy::too_many_arguments` globally, and remove all lint attributes related to it. |

||

|

|

a8f15bd95e

|

Introduce two-level bins for multidrawable meshes. (#16898)

Currently, our batchable binned items are stored in a hash table that maps bin key, which includes the batch set key, to a list of entities. Multidraw is handled by sorting the bin keys and accumulating adjacent bins that can be multidrawn together (i.e. have the same batch set key) into multidraw commands during `batch_and_prepare_binned_render_phase`. This is reasonably efficient right now, but it will complicate future work to retain indirect draw parameters from frame to frame. Consider what must happen when we have retained indirect draw parameters and the application adds a bin (i.e. a new mesh) that shares a batch set key with some pre-existing meshes. (That is, the new mesh can be multidrawn with the pre-existing meshes.) To be maximally efficient, our goal in that scenario will be to update *only* the indirect draw parameters for the batch set (i.e. multidraw command) containing the mesh that was added, while leaving the others alone. That means that we have to quickly locate all the bins that belong to the batch set being modified. In the existing code, we would have to sort the list of bin keys so that bins that can be multidrawn together become adjacent to one another in the list. Then we would have to do a binary search through the sorted list to find the location of the bin that was just added. Next, we would have to widen our search to adjacent indexes that contain the same batch set, doing expensive comparisons against the batch set key every time. Finally, we would reallocate the indirect draw parameters and update the stored pointers to the indirect draw parameters that the bins store. By contrast, it'd be dramatically simpler if we simply changed the way bins are stored to first map from batch set key (i.e. multidraw command) to the bins (i.e. meshes) within that batch set key, and then from each individual bin to the mesh instances. That way, the scenario above in which we add a new mesh will be simpler to handle. 
First, we will look up the batch set key corresponding to that mesh in the outer map to find an inner map corresponding to the single multidraw command that will draw that batch set. We will know how many meshes the multidraw command is going to draw by the size of that inner map. Then we simply need to reallocate the indirect draw parameters and update the pointers to those parameters within the bins as necessary. There will be no need to do any binary search or expensive batch set key comparison: only a single hash lookup and an iteration over the inner map to update the pointers. This patch implements the above technique. Because we don't have retained bins yet, this PR provides no performance benefits. However, it opens the door to maximally efficient updates when only a small number of meshes change from frame to frame. The main churn that this patch causes is that the *batch set key* (which uniquely specifies a multidraw command) and *bin key* (which uniquely specifies a mesh *within* that multidraw command) are now separate, instead of the batch set key being embedded *within* the bin key. In order to isolate potential regressions, I think that at least #16890, #16836, and #16825 should land before this PR does. ## Migration Guide * The *batch set key* is now separate from the *bin key* in `BinnedPhaseItem`. The batch set key is used to collect multidrawable meshes together. If you aren't using the multidraw feature, you can safely set the batch set key to `()`. |
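
The two-level layout described above can be sketched with nested maps. Integer aliases stand in for the real key types, and `TwoLevelBins`, `add`, and `mesh_count` are hypothetical names; adding a mesh is one outer hash lookup, and the size of the inner map gives the number of meshes in the multidraw command.

```rust
use std::collections::HashMap;

type BatchSetKey = u32; // identifies one multidraw command
type BinKey = u32;      // identifies one mesh within that command
type Entity = u64;

#[derive(Default)]
struct TwoLevelBins {
    batch_sets: HashMap<BatchSetKey, HashMap<BinKey, Vec<Entity>>>,
}

impl TwoLevelBins {
    /// Adds a mesh instance: outer lookup by batch set, inner lookup by bin.
    fn add(&mut self, batch_set: BatchSetKey, bin: BinKey, entity: Entity) {
        self.batch_sets
            .entry(batch_set)
            .or_default()
            .entry(bin)
            .or_default()
            .push(entity);
    }

    /// Number of meshes (bins) the multidraw command for `batch_set` draws.
    fn mesh_count(&self, batch_set: BatchSetKey) -> usize {
        self.batch_sets.get(&batch_set).map_or(0, |bins| bins.len())
    }
}

fn main() {
    let mut bins = TwoLevelBins::default();
    bins.add(1, 100, 7);
    bins.add(1, 101, 8); // new mesh sharing batch set 1
    bins.add(2, 200, 9);
    println!("batch set 1 draws {} meshes", bins.mesh_count(1));
}
```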

||

|

|

40df1ea4b6

|

Remove the type parameter from check_visibility, and only invoke it once. (#16812)

Currently, `check_visibility` is parameterized over a query filter that specifies the type of potentially-visible object. This has the unfortunate side effect that we need a separate system, `mark_view_visibility_as_changed_if_necessary`, to trigger view visibility change detection. That system is quite slow because it must iterate sequentially over all entities in the scene. This PR moves the query filter from `check_visibility` to a new component, `VisibilityClass`. `VisibilityClass` stores a list of type IDs, each corresponding to one of the query filters we used to use. Because `check_visibility` is no longer specialized to the query filter at the type level, Bevy now only needs to invoke it once, leading to better performance as `check_visibility` can do change detection on the fly rather than delegating it to a separate system. This commit also has ergonomic improvements, as there's no need for applications that want to add their own custom renderable components to add specializations of the `check_visibility` system to the schedule. Instead, they only need to ensure that the `ViewVisibility` component is properly kept up to date. The recommended way to do this, and the way that's demonstrated in the `custom_phase_item` and `specialized_mesh_pipeline` examples, is to make `ViewVisibility` a required component and to add the type ID to it in a component add hook. This patch does this for `Mesh3d`, `Mesh2d`, `Sprite`, `Light`, and `Node`, which means that most app code doesn't need to change at all. Note that, although this patch has a large impact on the performance of visibility determination, it doesn't actually improve the end-to-end frame time of `many_cubes`. That's because the render world was already effectively hiding the latency from `mark_view_visibility_as_changed_if_necessary`. This patch is, however, necessary for *further* improvements to `many_cubes` performance. 
(Images omitted: `many_cubes` trace before and after.) ## Migration Guide * `check_visibility` no longer takes a `QueryFilter`, and there's no need to add it manually to your app schedule anymore for custom rendering items. Instead, entities with custom renderable components should add the appropriate type IDs to `VisibilityClass`. See `custom_phase_item` for an example. |

||

|

|

35826be6f7

|

Implement bindless lightmaps. (#16653)

This commit allows Bevy to bind 16 lightmaps at a time, if the current platform supports bindless textures. Naturally, if bindless textures aren't supported, Bevy falls back to binding only a single lightmap at a time. As lightmaps are usually heavily atlased, I doubt many scenes will use more than 16 lightmap textures. This has little performance impact now, but it's desirable for us to reap the benefits of multidraw and bindless textures on scenes that use lightmaps. Otherwise, we might have to break batches in order to switch those lightmaps. Additionally, this PR slightly reduces the cost of binning because it makes the lightmap index in `Opaque3dBinKey` 32 bits instead of an `AssetId`. ## Migration Guide * The `Opaque3dBinKey::lightmap_image` field is now `Opaque3dBinKey::lightmap_slab`, which is a lightweight identifier for an entire binding array of lightmaps. |

||

|

|

0707c0717b

|

✏️ Fix typos across bevy (#16702)

# Objective Fixes typos in bevy project, following suggestion in https://github.com/bevyengine/bevy-website/pull/1912#pullrequestreview-2483499337 ## Solution I used https://github.com/crate-ci/typos to find them. I included only the ones that feel undebatable to me, but I am not in game engine so maybe some terms are expected. I left out the following typos: - `reparametrize` => `reparameterize`: There are a lot of occurrences, I believe this was expected - `semicircles` => `hemicircles`: 2 occurrences, may mean something specific in geometry - `invertation` => `inversion`: may mean something specific - `unparented` => `parentless`: may mean something specific - `metalness` => `metallicity`: may mean something specific ## Testing - Did you test these changes? If so, how? I did not test the changes, most changes are related to raw text. I expect the others to be tested by the CI. - Are there any parts that need more testing? I do not think so - How can other people (reviewers) test your changes? Is there anything specific they need to know? To me there is nothing to test - If relevant, what platforms did you test these changes on, and are there any important ones you can't test? --- ## Migration Guide > This section is optional. If there are no breaking changes, you can delete this section. (kept in case I include the `reparameterize` change here) - If this PR is a breaking change (relative to the last release of Bevy), describe how a user might need to migrate their code to support these changes - Simply adding new functionality is not a breaking change. - Fixing behavior that was definitely a bug, rather than a questionable design choice is not a breaking change. ## Questions - [x] Should I include the above typos? No (https://github.com/bevyengine/bevy/pull/16702#issuecomment-2525271152) - [ ] Should I add `typos` to the CI? (I will check how to configure it properly) This project looks awesome, I really enjoy reading the progress made, thanks to everyone involved. |

||

|

|

f5de3f08fb

|

Use multidraw for opaque meshes when GPU culling is in use. (#16427)

This commit adds support for *multidraw*, which is a feature that allows multiple meshes to be drawn in a single drawcall. `wgpu` currently implements multidraw on Vulkan, so this feature is only enabled there. Multiple meshes can be drawn at once if they're in the same vertex and index buffers and are otherwise placed in the same bin. (Thus, for example, at present the materials and textures must be identical, but see #16368.) Multidraw is a significant performance improvement during the draw phase because it reduces the number of rebindings, as well as the number of drawcalls. This feature is currently only enabled when GPU culling is used: i.e. when `GpuCulling` is present on a camera. Therefore, if you run for example `scene_viewer`, you will not see any performance improvements, because `scene_viewer` doesn't add the `GpuCulling` component to its camera. Additionally, the multidraw feature is only implemented for opaque 3D meshes and not for shadows or 2D meshes. I plan to make GPU culling the default and to extend the feature to shadows in the future. Also, in the future I suspect that polyfilling multidraw on APIs that don't support it will be fruitful, as even without driver-level support use of multidraw allows us to avoid expensive `wgpu` rebindings. |

||

|

|

5adf831b42

|

Add a bindless mode to AsBindGroup. (#16368)

This patch adds the infrastructure necessary for Bevy to support

*bindless resources*, by adding a new `#[bindless]` attribute to

`AsBindGroup`.

Classically, only a single texture (or sampler, or buffer) can be

attached to each shader binding. This means that switching materials

requires breaking a batch and issuing a new drawcall, even if the mesh

is otherwise identical. This adds significant overhead not only in the

driver but also in `wgpu`, as switching bind groups increases the amount

of validation work that `wgpu` must do.

*Bindless resources* are the typical solution to this problem. Instead

of switching bindings between each texture, the renderer instead

supplies a large *array* of all textures in the scene up front, and the

material contains an index into that array. This pattern is repeated for

buffers and samplers as well. The renderer now no longer needs to switch

binding descriptor sets while drawing the scene.

Unfortunately, as things currently stand, this approach won't quite work

for Bevy. Two aspects of `wgpu` conspire to make this ideal approach

unacceptably slow:

1. In the DX12 backend, all binding arrays (bindless resources) must

have a constant size declared in the shader, and all textures in an

array must be bound to actual textures. Changing the size requires a

recompile.

2. Changing even one texture incurs revalidation of all textures, a

process that takes time that's linear in the total size of the binding

array.

This means that declaring a large array of textures big enough to

encompass the entire scene is presently unacceptably slow. For example,

if you declare 4096 textures, then `wgpu` will have to revalidate all

4096 textures if even a single one changes. This process can take

multiple frames.

To work around this problem, this PR groups bindless resources into

small *slabs* and maintains a free list for each. The size of each slab

for the bindless arrays associated with a material is specified via the

`#[bindless(N)]` attribute. For instance, consider the following

declaration:

```rust
#[derive(AsBindGroup)]
#[bindless(16)]
struct MyMaterial {
    #[buffer(0)]
    color: Vec4,
    #[texture(1)]
    #[sampler(2)]
    diffuse: Handle<Image>,
}
```

The `#[bindless(N)]` attribute specifies that, if bindless arrays are

supported on the current platform, each resource becomes a binding array

of N instances of that resource. So, for `MyMaterial` above, the `color`

attribute is exposed to the shader as `binding_array<vec4<f32>, 16>`,

the `diffuse` texture is exposed to the shader as

`binding_array<texture_2d<f32>, 16>`, and the `diffuse` sampler is

exposed to the shader as `binding_array<sampler, 16>`. Inside the

material's vertex and fragment shaders, the applicable index is

available via the `material_bind_group_slot` field of the `Mesh`

structure. So, for instance, you can access the current color like so:

```wgsl
// `uniform` binding arrays are a non-sequitur, so `uniform` is automatically promoted
// to `storage` in bindless mode.
@group(2) @binding(0) var<storage> material_color: binding_array<Color, 4>;
...
@fragment
fn fragment(in: VertexOutput) -> @location(0) vec4<f32> {
    let color = material_color[mesh[in.instance_index].material_bind_group_slot];
    ...
}
```
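On the CPU side, the slab-and-free-list scheme that produces such a slot index might look roughly like the sketch below. This is a minimal illustration under stated assumptions: `SlabAllocator`, `Slab`, and `Binding` are hypothetical names invented here, and the code is not Bevy's `MaterialBindGroupAllocator`.

```rust
/// Hypothetical slab of slots; not Bevy's actual allocator.
struct Slab {
    /// Slot indices in this slab that are currently unused.
    free_list: Vec<u32>,
}

/// Hypothetical allocator that groups bindless resources into small slabs.
struct SlabAllocator {
    slab_size: u32,
    slabs: Vec<Slab>,
}

/// Location handed back to a material: which slab, and which slot inside it.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
struct Binding {
    slab: u32,
    slot: u32,
}

impl SlabAllocator {
    fn new(slab_size: u32) -> Self {
        Self { slab_size, slabs: Vec::new() }
    }

    fn allocate(&mut self) -> Binding {
        // Reuse a free slot in an existing slab if there is one.
        for (i, slab) in self.slabs.iter_mut().enumerate() {
            if let Some(slot) = slab.free_list.pop() {
                return Binding { slab: i as u32, slot };
            }
        }
        // Otherwise open a new slab; existing slabs are left untouched.
        let mut free_list: Vec<u32> = (0..self.slab_size).rev().collect();
        let slot = free_list.pop().expect("slab_size must be non-zero");
        self.slabs.push(Slab { free_list });
        Binding { slab: (self.slabs.len() - 1) as u32, slot }
    }

    fn free(&mut self, binding: Binding) {
        self.slabs[binding.slab as usize].free_list.push(binding.slot);
    }
}

fn main() {
    let mut alloc = SlabAllocator::new(2);
    let a = alloc.allocate();
    let b = alloc.allocate();
    let c = alloc.allocate(); // slab 0 is full, so this opens slab 1
    println!("{a:?} {b:?} {c:?}");
    alloc.free(a);
    println!("{:?}", alloc.allocate()); // reuses the freed slot in slab 0
}
```

The point of keeping slabs small is that a change touches only one slab's worth of bindings, so the revalidation cost described earlier stays bounded by the slab size rather than by the whole scene.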

Note that portable shader code can't guarantee that the current platform

supports bindless textures. Indeed, bindless mode is only available in

Vulkan and DX12. The `BINDLESS` shader definition is available for your

use to determine whether you're on a bindless platform or not. Thus a

portable version of the shader above would look like:

```wgsl
#ifdef BINDLESS
@group(2) @binding(0) var<storage> material_color: binding_array<Color, 4>;
#else // BINDLESS
@group(2) @binding(0) var<uniform> material_color: Color;
#endif // BINDLESS
...
@fragment
fn fragment(in: VertexOutput) -> @location(0) vec4<f32> {
#ifdef BINDLESS
    let color = material_color[mesh[in.instance_index].material_bind_group_slot];
#else // BINDLESS
    let color = material_color;
#endif // BINDLESS
    ...
}
```

Importantly, this PR *doesn't* update `StandardMaterial` to be bindless.

So, for example, `scene_viewer` will currently not run any faster. I

intend to update `StandardMaterial` to use bindless mode in a follow-up

patch.

A new example, `shaders/shader_material_bindless`, has been added to

demonstrate how to use this new feature.

Here's a Tracy profile of `submit_graph_commands` of this patch and an

additional patch (not submitted yet) that makes `StandardMaterial` use

bindless. Red is those patches; yellow is `main`. The scene was Bistro

Exterior with a hack that forces all textures to opaque. You can see a

1.47x mean speedup.

## Migration Guide

* `RenderAssets::prepare_asset` now takes an `AssetId` parameter.

* Bin keys now have Bevy-specific material bind group indices instead of

`wgpu` material bind group IDs, as part of the bindless change. Use the

new `MaterialBindGroupAllocator` to map from bind group index to bind

group ID.

|

||

|

|

40640fdf42

|

Don't reëxport bevy_image from bevy_render (#16163)

# Objective Fixes #15940 ## Solution Remove the `pub use` and fix the compile errors. Make `bevy_image` available as `bevy::image`. ## Testing Feature Frenzy would be good here! Maybe I'll learn how to use it if I have some time this weekend, or maybe a reviewer can use it. ## Migration Guide Use `bevy_image` instead of `bevy_render::texture` items. --------- Co-authored-by: chompaa <antony.m.3012@gmail.com> Co-authored-by: Carter Anderson <mcanders1@gmail.com> |

||

|

|

c29e67153b

|

Expose Pipeline Compilation Zero Initialize Workgroup Memory Option (#16301)

# Objective - wgpu 0.20 made workgroup vars stop being zero-init by default. this broke some applications (cough foresight cough) and now we work around it. wgpu exposes a compilation option that zero initializes workgroup memory by default, but bevy does not expose it. ## Solution - expose the compilation option wgpu gives us ## Testing - ran examples: 3d_scene, compute_shader_game_of_life, gpu_readback, lines, specialized_mesh_pipeline. they all work - confirmed fix for our own problems --- ## Migration Guide - add `zero_initialize_workgroup_memory: false,` to `ComputePipelineDescriptor` or `RenderPipelineDescriptor` structs to preserve 0.14 functionality, add `zero_initialize_workgroup_memory: true,` to restore bevy 0.13 functionality. |

||

|

|

d17de6c105

|

Fix panic due to malformed mesh in specialized_mesh_pipeline (#15899)

# Objective Fixes #15891 ## Solution Just remove the invalid triangle. I'm assuming that line of code was originally copied from one that was drawing a quad. ## Testing - `cargo run --example specialized_mesh_pipeline` - hover over the triangles Tested on macOS |

||

|

|

bdd0af6bfb

|

Deprecate SpatialBundle (#15830)

# Objective - Required components replace bundles, but `SpatialBundle` is yet to be deprecated ## Solution - Deprecate `SpatialBundle` - Insert `Transform` and `Visibility` instead in examples using it - In `spawn` or `insert` inserting a default `Transform` or `Visibility` with component already requiring either, remove those components from the tuple ## Testing - Did you test these changes? If so, how? Yes, I ran the examples I changed and tests - Are there any parts that need more testing? The `gamepad_viewer` and `custom_shader_instancing` examples don't work as intended due to entirely unrelated code, didn't check main. - How can other people (reviewers) test your changes? Is there anything specific they need to know? Run examples, or just check that all spawned values are identical - If relevant, what platforms did you test these changes on, and are there any important ones you can't test? Linux, wayland through x11 (cause that's the default feature) --- ## Migration Guide `SpatialBundle` is now deprecated, insert `Transform` and `Visibility` instead which will automatically insert all other components that were in the bundle. If you do not specify these values and any other components in your `spawn`/`insert` call already requires either of these components you can leave that one out. before: ```rust commands.spawn(SpatialBundle::default()); ``` after: ```rust commands.spawn((Transform::default(), Visibility::default())); ``` |

||

|

|

dd812b3e49

|

Type safe retained render world (#15756)

# Objective In the Render World, there are a number of collections that are derived from Main World entities and are used to drive rendering. The most notable are: - `VisibleEntities`, which is generated in the `check_visibility` system and contains visible entities for a view. - `ExtractedInstances`, which maps entity ids to asset ids. In the old model, these collections were trivially kept in sync -- any extracted phase item could look itself up because the render entity id was guaranteed to always match the corresponding main world id. After #15320, this became much more complicated, and was leading to a number of subtle bugs in the Render World. The main rendering systems, i.e. `queue_material_meshes` and `queue_material2d_meshes`, follow a similar pattern: ```rust for visible_entity in visible_entities.iter::<With<Mesh2d>>() { let Some(mesh_instance) = render_mesh_instances.get_mut(visible_entity) else { continue; }; // Look some more stuff up and specialize the pipeline... let bin_key = Opaque2dBinKey { pipeline: pipeline_id, draw_function: draw_opaque_2d, asset_id: mesh_instance.mesh_asset_id.into(), material_bind_group_id: material_2d.get_bind_group_id().0, }; opaque_phase.add( bin_key, *visible_entity, BinnedRenderPhaseType::mesh(mesh_instance.automatic_batching), ); } ``` In this case, `visible_entities` and `render_mesh_instances` are both collections that are created and keyed by Main World entity ids, and so this lookup happens to work by coincidence. However, there is a major unintentional bug here: namely, because `visible_entities` is a collection of Main World ids, the phase item being queued is created with a Main World id rather than its correct Render World id. This happens to not break mesh rendering because the render commands used for drawing meshes do not access the `ItemQuery` parameter, but demonstrates the confusion that is now possible: our UI phase items are correctly being queued with Render World ids while our meshes aren't. 
Additionally, this makes it very easy to mistakenly use the wrong entity id to look up things like assets. For example, if instead we ignored visibility checks and queued our meshes via a query, we'd have to be extra careful to use `&MainEntity` instead of the natural `Entity`. ## Solution Make all collections that are derived from Main World data use `MainEntity` as their key, to ensure type safety and avoid accidentally looking up data with the wrong entity id: ```rust pub type MainEntityHashMap<V> = hashbrown::HashMap<MainEntity, V, EntityHash>; ``` Additionally, we make all `PhaseItem` be able to provide both their Main and Render World ids, to allow render phase implementors maximum flexibility as to what id should be used to look up data. You can think of this like tracking at the type level whether something in the Render World should use its "primary key", i.e. entity id, or needs to use a foreign key, i.e. `MainEntity`. ## Testing ##### TODO: This will require extensive testing to make sure things didn't break! Additionally, some extraction logic has become more complicated and needs to be checked for regressions. ## Migration Guide With the advent of the retained render world, collections that contain references to `Entity` that are extracted into the render world have been changed to contain `MainEntity` in order to prevent errors where a render world entity id is used to look up an item by accident. Custom rendering code may need to be changed to query for `&MainEntity` in order to look up the correct item from such a collection. Additionally, users who implement their own extraction logic for collections of main world entity should strongly consider extracting into a different collection that uses `MainEntity` as a key. Additionally, render phases now require specifying both the `Entity` and `MainEntity` for a given `PhaseItem`. Custom render phases should ensure `MainEntity` is available when queuing a phase item. |
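To make the type-safety argument of this commit concrete, here is a minimal, dependency-free sketch of keying a collection by a newtype. The `Entity`, `MainEntity`, and `PhaseItem` definitions below are simplified stand-ins rather than Bevy's own types, `mesh_name` is a hypothetical helper, and `std::collections::HashMap` is swapped in for the `hashbrown` map with `EntityHash` named in the commit message.

```rust
use std::collections::HashMap;

/// Simplified stand-in for an entity id (not Bevy's `Entity`).
#[derive(Debug, Clone, Copy, PartialEq, Eq, Hash)]
struct Entity(u32);

/// Newtype marking an id as belonging to the Main World.
#[derive(Debug, Clone, Copy, PartialEq, Eq, Hash)]
struct MainEntity(Entity);

/// Collections derived from Main World data are keyed by `MainEntity`.
type MainEntityHashMap<V> = HashMap<MainEntity, V>;

/// A phase item carries both ids, so each lookup can pick the right one.
struct PhaseItem {
    render_entity: Entity,
    main_entity: MainEntity,
}

/// Hypothetical lookup helper: finds per-instance data for a phase item.
fn mesh_name(
    instances: &MainEntityHashMap<&'static str>,
    item: &PhaseItem,
) -> Option<&'static str> {
    // `instances.get(&item.render_entity)` would not compile: wrong key type.
    instances.get(&item.main_entity).copied()
}

fn main() {
    let mut instances: MainEntityHashMap<&'static str> = HashMap::new();
    instances.insert(MainEntity(Entity(7)), "cube");

    let item = PhaseItem {
        render_entity: Entity(42),
        main_entity: MainEntity(Entity(7)),
    };
    println!("{:?} -> {:?}", item.render_entity, mesh_name(&instances, &item));
}
```

The wrong-key lookup is rejected by the compiler instead of silently missing at runtime, which is the "foreign key" distinction the commit message describes.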

||

|

|

3da0ef048e

|

Remove the Component trait implementation from Handle (#15796)

# Objective - Closes #15716 - Closes #15718 ## Solution - Replace `Handle<MeshletMesh>` with a new `MeshletMesh3d` component - As expected there were some random things that needed fixing: - A couple tests were storing handles just to prevent them from being dropped I believe, which seems to have been unnecessary in some. - The `SpriteBundle` still had a `Handle<Image>` field. I've removed this. - Tests in `bevy_sprite` incorrectly added a `Handle<Image>` field outside of the `Sprite` component. - A few examples were still inserting `Handle`s, switched those to their corresponding wrappers. - 2 examples that were still querying for `Handle<Image>` were changed to query `Sprite` ## Testing - I've verified that the changed examples work now ## Migration Guide `Handle` can no longer be used as a `Component`. All existing Bevy types using this pattern have been wrapped in their own semantically meaningful type. You should do the same for any custom `Handle` components your project needs. The `Handle<MeshletMesh>` component is now `MeshletMesh3d`. The `WithMeshletMesh` type alias has been removed. Use `With<MeshletMesh3d>` instead. |

||

|

|

25bfa80e60

|

Migrate cameras to required components (#15641)

# Objective Yet another PR for migrating stuff to required components. This time, cameras! ## Solution As per the [selected proposal](https://hackmd.io/tsYID4CGRiWxzsgawzxG_g#Combined-Proposal-1-Selected), deprecate `Camera2dBundle` and `Camera3dBundle` in favor of `Camera2d` and `Camera3d`. Adding a `Camera` without `Camera2d` or `Camera3d` now logs a warning, as suggested by Cart [on Discord](https://discord.com/channels/691052431525675048/1264881140007702558/1291506402832945273). I would personally like cameras to work a bit differently and be split into a few more components, to avoid some footguns and confusing semantics, but that is more controversial, and shouldn't block this core migration. ## Testing I ran a few 2D and 3D examples, and tried cameras with and without render graphs. --- ## Migration Guide `Camera2dBundle` and `Camera3dBundle` have been deprecated in favor of `Camera2d` and `Camera3d`. Inserting them will now also insert the other components required by them automatically. |

||

|

|

bfcb19a871

|

Add example showing how to use SpecializedMeshPipeline (#14370)

# Objective - A lot of mid-level rendering apis are hard to figure out because they don't have any examples - SpecializedMeshPipeline can be really useful in some cases when you want more flexibility than a Material without having to go to low level apis. ## Solution - Add an example showing how to make a custom `SpecializedMeshPipeline`. ## Testing - Did you test these changes? If so, how? - Are there any parts that need more testing? - How can other people (reviewers) test your changes? Is there anything specific they need to know? - If relevant, what platforms did you test these changes on, and are there any important ones you can't test? --- ## Showcase The example just spawns 3 triangles in a triangle pattern. --------- Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com> |