# Objective

`TEXTURE_ADAPTER_SPECIFIC_FORMAT_FEATURES` was already included in `adapter.features()` on non-wasm target, and since it is the default value for `WgpuSettings.features`, the subsequent code will also combine into this feature:

b6066c30b6/crates/bevy_render/src/renderer/mod.rs (L155-L156)

# Objective

- fix new clippy lints before they get stable and break CI

## Solution

- run `clippy --fix` to auto-fix machine-applicable lints

- silence `clippy::should_implement_trait` for `fn HandleId::default<T: Asset>`

## Changes

- always prefer `format!("{inline}")` over `format!("{}", not_inline)`

- prefer `Box::default` (or `Box::<T>::default` if necessary) over `Box::new(T::default())`

# Objective

Currently, Bevy only supports rendering to the current "surface texture format". This means that "render to texture" scenarios must use the exact format the primary window's surface uses, or Bevy will crash. This is even harder than it used to be now that we detect preferred surface formats at runtime instead of using hard coded BevyDefault values.

## Solution

1. Look up and store each window surface's texture format alongside other extracted window information

2. Specialize the upscaling pass on the current `RenderTarget`'s texture format, now that we can cheaply correlate render targets to their current texture format

3. Remove the old `SurfaceTextureFormat` and `AvailableTextureFormats`: these are now redundant with the information stored on each extracted window, and probably should not have been globals in the first place (as in theory each surface could have a different format).

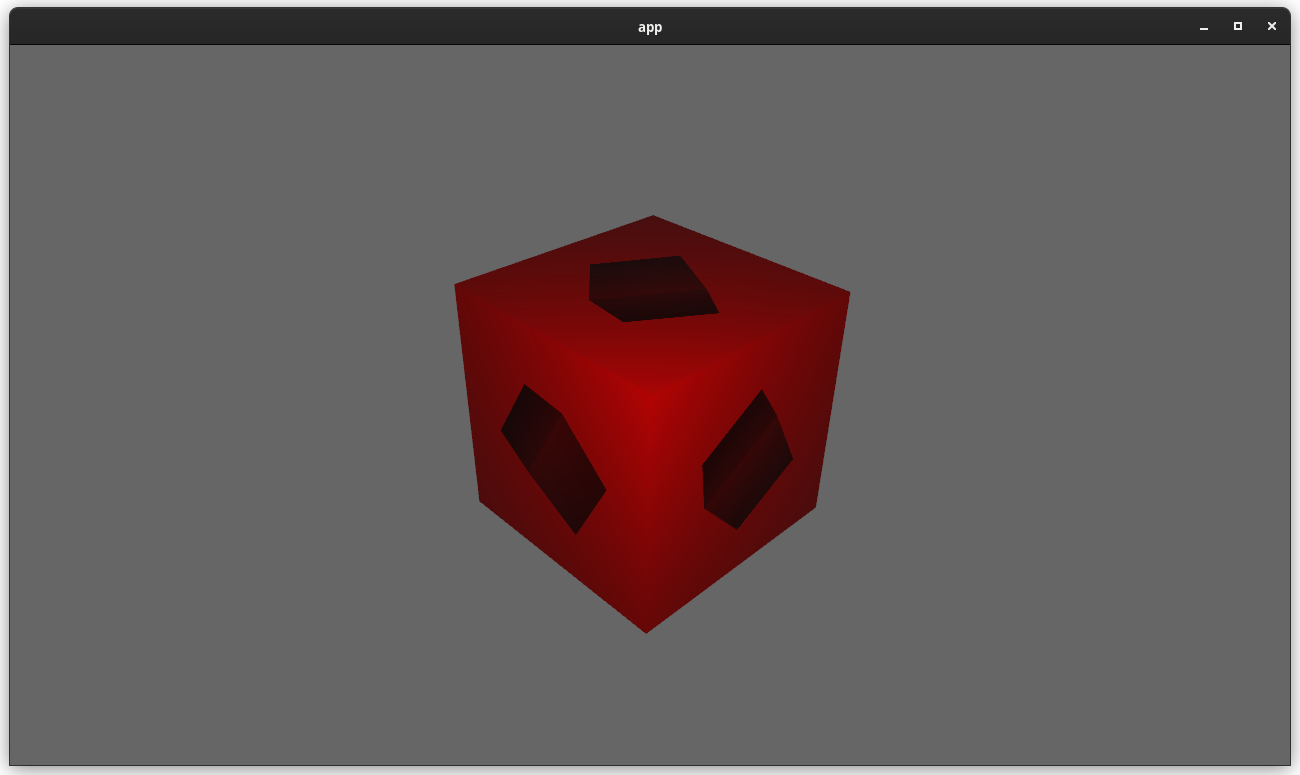

This means you can now use any texture format you want when rendering to a texture! For example, changing the `render_to_texture` example to use `R16Float` now doesn't crash / properly only stores the red component:

Attempt to make features like bloom https://github.com/bevyengine/bevy/pull/2876 easier to implement.

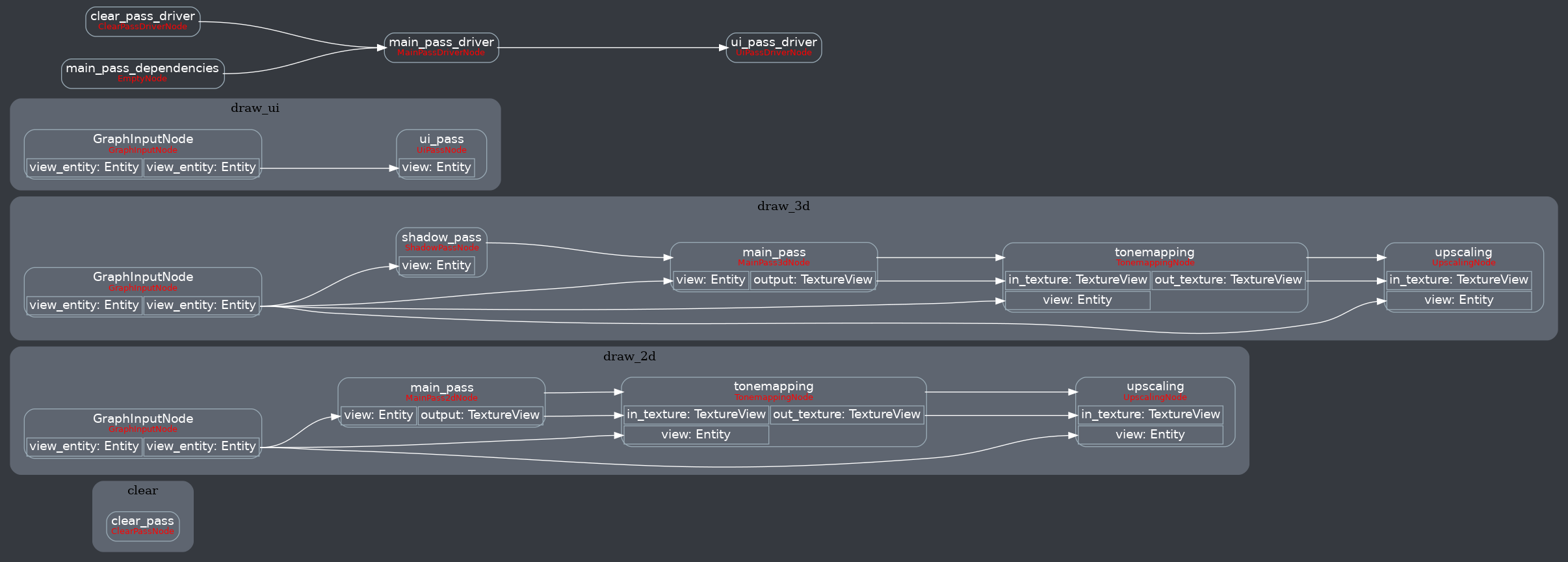

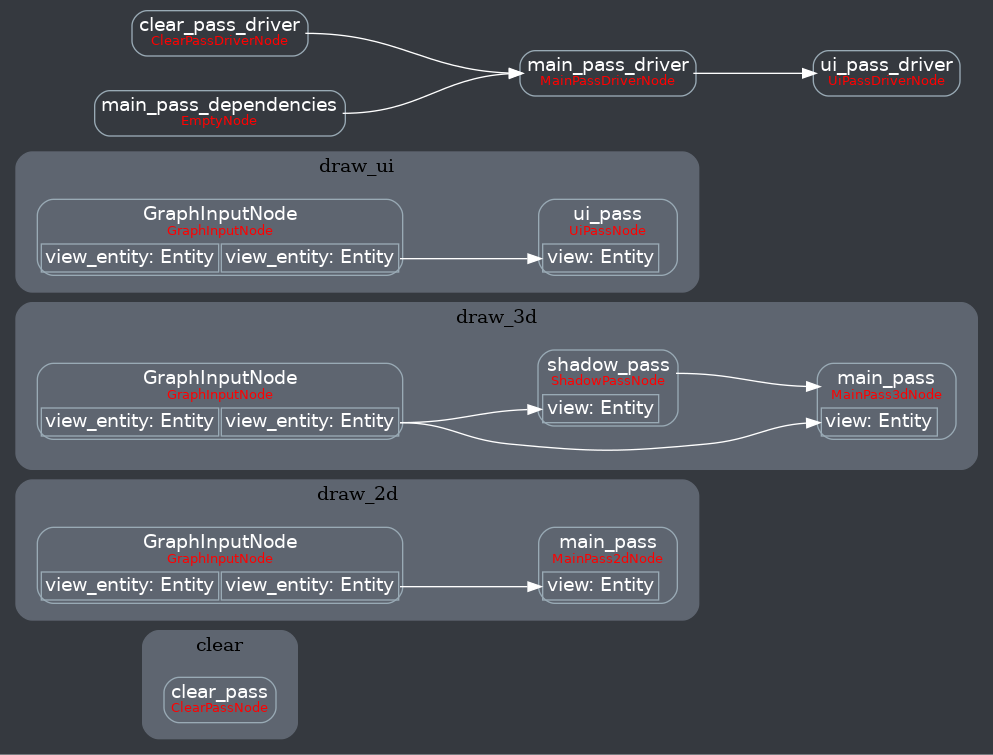

**This PR:**

- Moves the tonemapping from `pbr.wgsl` into a separate pass

- also add a separate upscaling pass after the tonemapping which writes to the swap chain (enables resolution-independant rendering and post-processing after tonemapping)

- adds a `hdr` bool to the camera which controls whether the pbr and sprite shaders render into a `Rgba16Float` texture

**Open questions:**

- ~should the 2d graph work the same as the 3d one?~ it is the same now

- ~The current solution is a bit inflexible because while you can add a post processing pass that writes to e.g. the `hdr_texture`, you can't write to a separate `user_postprocess_texture` while reading the `hdr_texture` and tell the tone mapping pass to read from the `user_postprocess_texture` instead. If the tonemapping and upscaling render graph nodes were to take in a `TextureView` instead of the view entity this would almost work, but the bind groups for their respective input textures are already created in the `Queue` render stage in the hardcoded order.~ solved by creating bind groups in render node

**New render graph:**

<details>

<summary>Before</summary>

</details>

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

As suggested in #6104, it would be nice to link directly to `linux_dependencies.md` file in the panic message when running on Linux. And when not compiling for Linux, we fall back to the old message.

Signed-off-by: Lena Milizé <me@lvmn.org>

# Objective

Resolves#6104.

## Solution

Add link to `linux_dependencies.md` when compiling for Linux, and fall back to the old one when not.

# Objective

There is no Srgb support on some GPU and display protocols with `winit` (for example, Nvidia's GPUs with Wayland). Thus `TextureFormat::bevy_default()` which returns `Rgba8UnormSrgb` or `Bgra8UnormSrgb` will cause panics on such platforms. This patch will resolve this problem. Fix https://github.com/bevyengine/bevy/issues/3897.

## Solution

Make `initialize_renderer` expose `wgpu::Adapter` and `first_available_texture_format`, use the `first_available_texture_format` by default.

## Changelog

* Fixed https://github.com/bevyengine/bevy/issues/3897.

*This PR description is an edited copy of #5007, written by @alice-i-cecile.*

# Objective

Follow-up to https://github.com/bevyengine/bevy/pull/2254. The `Resource` trait currently has a blanket implementation for all types that meet its bounds.

While ergonomic, this results in several drawbacks:

* it is possible to make confusing, silent mistakes such as inserting a function pointer (Foo) rather than a value (Foo::Bar) as a resource

* it is challenging to discover if a type is intended to be used as a resource

* we cannot later add customization options (see the [RFC](https://github.com/bevyengine/rfcs/blob/main/rfcs/27-derive-component.md) for the equivalent choice for Component).

* dependencies can use the same Rust type as a resource in invisibly conflicting ways

* raw Rust types used as resources cannot preserve privacy appropriately, as anyone able to access that type can read and write to internal values

* we cannot capture a definitive list of possible resources to display to users in an editor

## Notes to reviewers

* Review this commit-by-commit; there's effectively no back-tracking and there's a lot of churn in some of these commits.

*ira: My commits are not as well organized :')*

* I've relaxed the bound on Local to Send + Sync + 'static: I don't think these concerns apply there, so this can keep things simple. Storing e.g. a u32 in a Local is fine, because there's a variable name attached explaining what it does.

* I think this is a bad place for the Resource trait to live, but I've left it in place to make reviewing easier. IMO that's best tackled with https://github.com/bevyengine/bevy/issues/4981.

## Changelog

`Resource` is no longer automatically implemented for all matching types. Instead, use the new `#[derive(Resource)]` macro.

## Migration Guide

Add `#[derive(Resource)]` to all types you are using as a resource.

If you are using a third party type as a resource, wrap it in a tuple struct to bypass orphan rules. Consider deriving `Deref` and `DerefMut` to improve ergonomics.

`ClearColor` no longer implements `Component`. Using `ClearColor` as a component in 0.8 did nothing.

Use the `ClearColorConfig` in the `Camera3d` and `Camera2d` components instead.

Co-authored-by: Alice <alice.i.cecile@gmail.com>

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: devil-ira <justthecooldude@gmail.com>

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

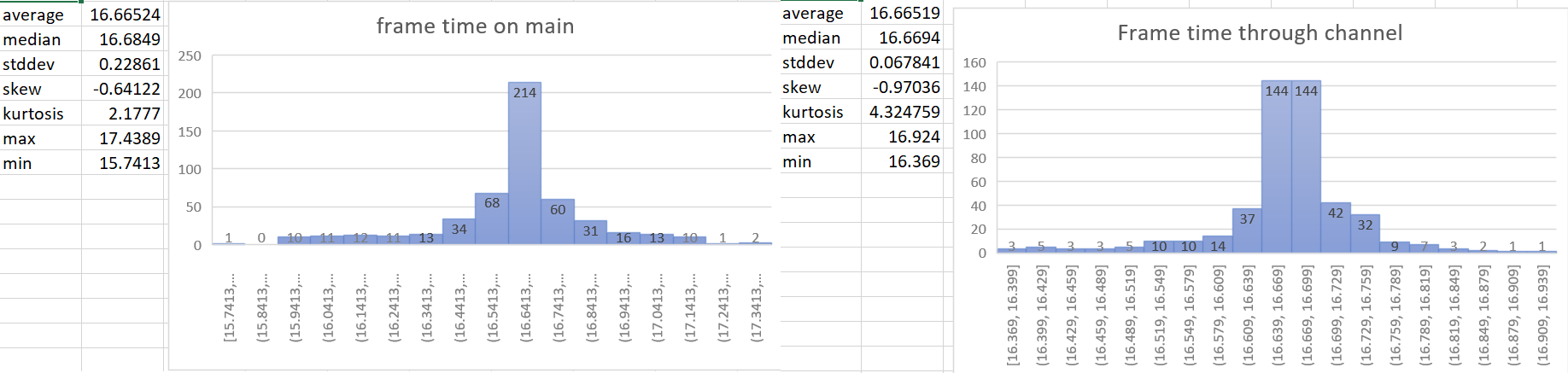

- The time update is currently done in the wrong part of the schedule. For a single frame the current order of things is update input, update time (First stage), other stages, render stage (frame presentation). So when we update the time it includes the input processing of the current frame and the frame presentation of the previous frame. This is a problem when vsync is on. When input processing takes a longer amount of time for a frame, the vsync wait time gets shorter. So when these are not paired correctly we can potentially have a long input processing time added to the normal vsync wait time in the previous frame. This leads to inaccurate frame time reporting and more variance of the time than actually exists. For more details of why this is an issue see the linked issue below.

- Helps with https://github.com/bevyengine/bevy/issues/4669

- Supercedes https://github.com/bevyengine/bevy/pull/4728 and https://github.com/bevyengine/bevy/pull/4735. This PR should be less controversial than those because it doesn't add to the API surface.

## Solution

- The most accurate frame time would come from hardware. We currently don't have access to that for multiple reasons, so the next best thing we can do is measure the frame time as close to frame presentation as possible. This PR gets the Instant::now() for the time immediately after frame presentation in the render system and then sends that time to the app world through a channel.

- implements suggestion from @aevyrie from here https://github.com/bevyengine/bevy/pull/4728#discussion_r872010606

## Statistics

---

## Changelog

- Make frame time reporting more accurate.

## Migration Guide

`time.delta()` now reports zero for 2 frames on startup instead of 1 frame.

# Objective

The frame marker event was emitted in the loop of presenting all the windows. This would mark the frame as finished multiple times if more than one window is used.

## Solution

Move the frame marker to after the `for`-loop, so that it gets executed only once.

Currently `tracy` interprets the entire trace as one frame because the marker for frames isn't being recorded.

~~When an event with `tracy.trace_marker=true` is recorded, `tracing-tracy` will mark the frame as finished:

<aa0b96b2ae/tracing-tracy/src/lib.rs (L240)>~~

~~Unfortunately this leads to~~

```rs

INFO bevy_app:frame: bevy_app::app: finished frame tracy.frame_mark=true

```

~~being printed every frame (we can't use DEBUG because bevy_log sets `max_release_level_info`.~~

Instead of emitting an event that gets logged every frame, we can depend on tracy-client itself and call `finish_continuous_frame!();`

Tracing added support for "inline span entering", which cuts down on a lot of complexity:

```rust

let span = info_span!("my_span").entered();

```

This adapts our code to use this pattern where possible, and updates our docs to recommend it.

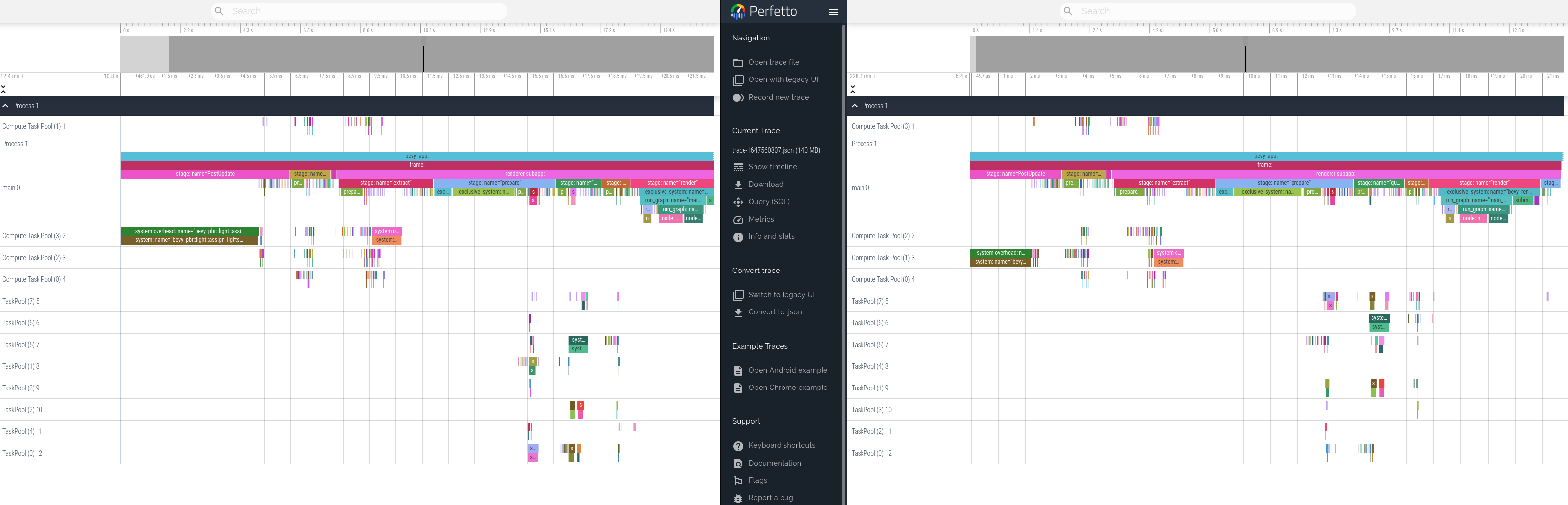

This produces equivalent tracing behavior. Here is a side by side profile of "before" and "after" these changes.

# Objective

Currently, errors in the render graph runner are exposed via a `Result::unwrap()` panic message, which dumps the debug representation of the error.

## Solution

This PR updates `render_system` to log the chain of errors, followed by an explicit panic:

```

ERROR bevy_render::renderer: Error running render graph:

ERROR bevy_render::renderer: > encountered an error when running a sub-graph

ERROR bevy_render::renderer: > tried to pass inputs to sub-graph "outline_graph", which has no input slots

thread 'main' panicked at 'Error running render graph: encountered an error when running a sub-graph', /[redacted]/bevy/crates/bevy_render/src/renderer/mod.rs:44:9

```

Some errors' `Display` impls (via `thiserror`) have also been updated to provide more detail about the cause of the error.

# Objective

- In the large majority of cases, users were calling `.unwrap()` immediately after `.get_resource`.

- Attempting to add more helpful error messages here resulted in endless manual boilerplate (see #3899 and the linked PRs).

## Solution

- Add an infallible variant named `.resource` and so on.

- Use these infallible variants over `.get_resource().unwrap()` across the code base.

## Notes

I did not provide equivalent methods on `WorldCell`, in favor of removing it entirely in #3939.

## Migration Guide

Infallible variants of `.get_resource` have been added that implicitly panic, rather than needing to be unwrapped.

Replace `world.get_resource::<Foo>().unwrap()` with `world.resource::<Foo>()`.

## Impact

- `.unwrap` search results before: 1084

- `.unwrap` search results after: 942

- internal `unwrap_or_else` calls added: 4

- trivial unwrap calls removed from tests and code: 146

- uses of the new `try_get_resource` API: 11

- percentage of the time the unwrapping API was used internally: 93%

# Objective

- `WgpuOptions` is mutated to be updated with the actual device limits and features, but this information is readily available to both the main and render worlds through the `RenderDevice` which has .limits() and .features() methods

- Information about the adapter in terms of its name, the backend in use, etc were not being exposed but have clear use cases for being used to take decisions about what rendering code to use. For example, if something works well on AMD GPUs but poorly on Intel GPUs. Or perhaps something works well in Vulkan but poorly in DX12.

## Solution

- Stop mutating `WgpuOptions `and don't insert the updated values into the main and render worlds

- Return `AdapterInfo` from `initialize_renderer` and insert it into the main and render worlds

- Use `RenderDevice` limits in the lighting code that was using `WgpuOptions.limits`.

- Renamed `WgpuOptions` to `WgpuSettings`

# Objective

- Support overriding wgpu features and limits that were calculated from default values or queried from the adapter/backend.

- Fixes#3686

## Solution

- Add `disabled_features: Option<wgpu::Features>` to `WgpuOptions`

- Add `constrained_limits: Option<wgpu::Limits>` to `WgpuOptions`

- After maybe obtaining updated features and limits from the adapter/backend in the case of `WgpuOptionsPriority::Functionality`, enable the `WgpuOptions` `features`, disable the `disabled_features`, and constrain the `limits` by `constrained_limits`.

- Note that constraining the limits means for `wgpu::Limits` members named `max_.*` we take the minimum of that which was configured/queried for the backend/adapter and the specified constrained limit value. This means the configured/queried value is used if the constrained limit is larger as that is as much as the device/API supports, or the constrained limit value is used if it is smaller as we are imposing an artificial constraint. For members named `min_.*` we take the maximum instead. For example, a minimum stride might be 256 but we set constrained limit value of 1024, then 1024 is the more conservative value. If the constrained limit value were 16, then 256 would be the more conservative.

# Objective

- While it is not safe to enable mappable primary buffers for all GPUs, it should be preferred for integrated GPUs where an integrated GPU is one that is sharing system memory.

## Solution

- Auto-disable mappable primary buffers only for discrete GPUs. If the GPU is integrated and mappable primary buffers are supported, use them.

# Objective

- When using `WgpuOptionsPriority::Functionality`, which is the default, wgpu::Features::MAPPABLE_PRIMARY_BUFFERS would be automatically enabled. This feature can and does have a significant negative impact on performance for discrete GPUs where resizable bar is not supported, which is a common case. As such, this feature should not be automatically enabled.

- Fixes the performance regression part of https://github.com/bevyengine/bevy/issues/3686 and at least some, if not all cases of https://github.com/bevyengine/bevy/issues/3687

## Solution

- When using `WgpuOptionsPriority::Functionality`, use the adapter-supported features, enable `TEXTURE_ADAPTER_SPECIFIC_FORMAT_FEATURES` and disable `MAPPABLE_PRIMARY_BUFFERS`

# Objective

- Allow the user to specify the priority when configuring wgpu features/limits and by default use the maximum capabilities of the chosen adapter.

## Solution

- Add a `WgpuOptionsPriority` enum with `Compatibility`, `Functionality` and `WebGL2` options.

- Add a `priority: WgpuOptionsPriority` member to `WgpuOptions`.

- When initialising the renderer, if `WgpuOptions::priority == WgpuOptionsPriority::Functionality`, query the adapter for the available features and limits, use them when creating a device, and update `WgpuOptions` with those values. If `Compatibility` use the behaviour as before this PR. If `WebGL2` then use the WebGL2 downlevel limits as used when when building for wasm, for convenience of testing WebGL2 limits without having to build for wasm.

- Add an environment variable `WGPU_OPTIONS_PRIO` that takes `compatibility`, `functionality`, `webgl2`.

- Default to `WgpuOptionsPriority::Functionality`.

- Insert updated `WgpuOptions` into render app world as well. This is useful for applying the limits when rendering, such as limiting the directional light shadow map texture to 2048x2048 when using WebGL2 downlevel limits but not on wasm.

- Reduced `draw_state` logs from `debug` to `trace` and added `debug` level logs for the wgpu features and limits. Use `RUST_LOG=bevy_render=debug` to see the output.

# Objective

- 3d examples fail to run in webgl2 because of unsupported texture formats or texture too large

## Solution

- switch to supported formats if a feature is enabled. I choose a feature instead of a build target to not conflict with a potential webgpu support

Very inspired by 6813b2edc5, and need #3290 to work.

I named the feature `webgl2`, but it's only needed if one want to use PBR in webgl2. Examples using only 2D already work.

Co-authored-by: François <8672791+mockersf@users.noreply.github.com>

This makes the [New Bevy Renderer](#2535) the default (and only) renderer. The new renderer isn't _quite_ ready for the final release yet, but I want as many people as possible to start testing it so we can identify bugs and address feedback prior to release.

The examples are all ported over and operational with a few exceptions:

* I removed a good portion of the examples in the `shader` folder. We still have some work to do in order to make these examples possible / ergonomic / worthwhile: #3120 and "high level shader material plugins" are the big ones. This is a temporary measure.

* Temporarily removed the multiple_windows example: doing this properly in the new renderer will require the upcoming "render targets" changes. Same goes for the render_to_texture example.

* Removed z_sort_debug: entity visibility sort info is no longer available in app logic. we could do this on the "render app" side, but i dont consider it a priority.