# Objective

- Introduce a stable alternative to

[`std::any::type_name`](https://doc.rust-lang.org/std/any/fn.type_name.html).

- Rewrite of #5805 with heavy inspiration in design.

- On the path to #5830.

- Part of solving #3327.

## Solution

- Add a `TypePath` trait for static stable type path/name information.

- Add a `TypePath` derive macro.

- Add a `impl_type_path` macro for implementing internal and foreign

types in `bevy_reflect`.

---

## Changelog

- Added `TypePath` trait.

- Added `DynamicTypePath` trait and `get_type_path` method to `Reflect`.

- Added a `TypePath` derive macro.

- Added a `bevy_reflect::impl_type_path` for implementing `TypePath` on

internal and foreign types in `bevy_reflect`.

- Changed `bevy_reflect::utility::(Non)GenericTypeInfoCell` to

`(Non)GenericTypedCell<T>` which allows us to be generic over both

`TypeInfo` and `TypePath`.

- `TypePath` is now a supertrait of `Asset`, `Material` and

`Material2d`.

- `impl_reflect_struct` needs a `#[type_path = "..."]` attribute to be

specified.

- `impl_reflect_value` needs to either specify path starting with a

double colon (`::core::option::Option`) or an `in my_crate::foo`

declaration.

- Added `bevy_reflect_derive::ReflectTypePath`.

- Most uses of `Ident` in `bevy_reflect_derive` changed to use

`ReflectTypePath`.

## Migration Guide

- Implementors of `Asset`, `Material` and `Material2d` now also need to

derive `TypePath`.

- Manual implementors of `Reflect` will need to implement the new

`get_type_path` method.

## Open Questions

- [x] ~This PR currently does not migrate any usages of

`std::any::type_name` to use `bevy_reflect::TypePath` to ease the review

process. Should it?~ Migration will be left to a follow-up PR.

- [ ] This PR adds a lot of `#[derive(TypePath)]` and `T: TypePath` to

satisfy new bounds, mostly when deriving `TypeUuid`. Should we make

`TypePath` a supertrait of `TypeUuid`? [Should we remove `TypeUuid` in

favour of

`TypePath`?](2afbd85532 (r961067892))

# Objective

- `apply_system_buffers` is an unhelpful name: it introduces a new

internal-only concept

- this is particularly rough for beginners as reasoning about how

commands work is a critical stumbling block

## Solution

- rename `apply_system_buffers` to the more descriptive `apply_deferred`

- rename related fields, arguments and methods in the internals fo

bevy_ecs for consistency

- update the docs

## Changelog

`apply_system_buffers` has been renamed to `apply_deferred`, to more

clearly communicate its intent and relation to `Deferred` system

parameters like `Commands`.

## Migration Guide

- `apply_system_buffers` has been renamed to `apply_deferred`

- the `apply_system_buffers` method on the `System` trait has been

renamed to `apply_deferred`

- the `is_apply_system_buffers` function has been replaced by

`is_apply_deferred`

- `Executor::set_apply_final_buffers` is now

`Executor::set_apply_final_deferred`

- `Schedule::apply_system_buffers` is now `Schedule::apply_deferred`

---------

Co-authored-by: JoJoJet <21144246+JoJoJet@users.noreply.github.com>

Links in the api docs are nice. I noticed that there were several places

where structs / functions and other things were referenced in the docs,

but weren't linked. I added the links where possible / logical.

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: François <mockersf@gmail.com>

# Objective

We have a few old system labels that are now system sets but are still named or documented as labels. Documentation also generally mentioned system labels in some places.

## Solution

- Clean up naming and documentation regarding system sets

## Migration Guide

`PrepareAssetLabel` is now called `PrepareAssetSet`

# Objective

NOTE: This depends on #7267 and should not be merged until #7267 is merged. If you are reviewing this before that is merged, I highly recommend viewing the Base Sets commit instead of trying to find my changes amongst those from #7267.

"Default sets" as described by the [Stageless RFC](https://github.com/bevyengine/rfcs/pull/45) have some [unfortunate consequences](https://github.com/bevyengine/bevy/discussions/7365).

## Solution

This adds "base sets" as a variant of `SystemSet`:

A set is a "base set" if `SystemSet::is_base` returns `true`. Typically this will be opted-in to using the `SystemSet` derive:

```rust

#[derive(SystemSet, Clone, Hash, Debug, PartialEq, Eq)]

#[system_set(base)]

enum MyBaseSet {

A,

B,

}

```

**Base sets are exclusive**: a system can belong to at most one "base set". Adding a system to more than one will result in an error. When possible we fail immediately during system-config-time with a nice file + line number. For the more nested graph-ey cases, this will fail at the final schedule build.

**Base sets cannot belong to other sets**: this is where the word "base" comes from

Systems and Sets can only be added to base sets using `in_base_set`. Calling `in_set` with a base set will fail. As will calling `in_base_set` with a normal set.

```rust

app.add_system(foo.in_base_set(MyBaseSet::A))

// X must be a normal set ... base sets cannot be added to base sets

.configure_set(X.in_base_set(MyBaseSet::A))

```

Base sets can still be configured like normal sets:

```rust

app.add_system(MyBaseSet::B.after(MyBaseSet::Ap))

```

The primary use case for base sets is enabling a "default base set":

```rust

schedule.set_default_base_set(CoreSet::Update)

// this will belong to CoreSet::Update by default

.add_system(foo)

// this will override the default base set with PostUpdate

.add_system(bar.in_base_set(CoreSet::PostUpdate))

```

This allows us to build apis that work by default in the standard Bevy style. This is a rough analog to the "default stage" model, but it use the new "stageless sets" model instead, with all of the ordering flexibility (including exclusive systems) that it provides.

---

## Changelog

- Added "base sets" and ported CoreSet to use them.

## Migration Guide

TODO

Huge thanks to @maniwani, @devil-ira, @hymm, @cart, @superdump and @jakobhellermann for the help with this PR.

# Objective

- Followup #6587.

- Minimal integration for the Stageless Scheduling RFC: https://github.com/bevyengine/rfcs/pull/45

## Solution

- [x] Remove old scheduling module

- [x] Migrate new methods to no longer use extension methods

- [x] Fix compiler errors

- [x] Fix benchmarks

- [x] Fix examples

- [x] Fix docs

- [x] Fix tests

## Changelog

### Added

- a large number of methods on `App` to work with schedules ergonomically

- the `CoreSchedule` enum

- `App::add_extract_system` via the `RenderingAppExtension` trait extension method

- the private `prepare_view_uniforms` system now has a public system set for scheduling purposes, called `ViewSet::PrepareUniforms`

### Removed

- stages, and all code that mentions stages

- states have been dramatically simplified, and no longer use a stack

- `RunCriteriaLabel`

- `AsSystemLabel` trait

- `on_hierarchy_reports_enabled` run criteria (now just uses an ad hoc resource checking run condition)

- systems in `RenderSet/Stage::Extract` no longer warn when they do not read data from the main world

- `RunCriteriaLabel`

- `transform_propagate_system_set`: this was a nonstandard pattern that didn't actually provide enough control. The systems are already `pub`: the docs have been updated to ensure that the third-party usage is clear.

### Changed

- `System::default_labels` is now `System::default_system_sets`.

- `App::add_default_labels` is now `App::add_default_sets`

- `CoreStage` and `StartupStage` enums are now `CoreSet` and `StartupSet`

- `App::add_system_set` was renamed to `App::add_systems`

- The `StartupSchedule` label is now defined as part of the `CoreSchedules` enum

- `.label(SystemLabel)` is now referred to as `.in_set(SystemSet)`

- `SystemLabel` trait was replaced by `SystemSet`

- `SystemTypeIdLabel<T>` was replaced by `SystemSetType<T>`

- The `ReportHierarchyIssue` resource now has a public constructor (`new`), and implements `PartialEq`

- Fixed time steps now use a schedule (`CoreSchedule::FixedTimeStep`) rather than a run criteria.

- Adding rendering extraction systems now panics rather than silently failing if no subapp with the `RenderApp` label is found.

- the `calculate_bounds` system, with the `CalculateBounds` label, is now in `CoreSet::Update`, rather than in `CoreSet::PostUpdate` before commands are applied.

- `SceneSpawnerSystem` now runs under `CoreSet::Update`, rather than `CoreStage::PreUpdate.at_end()`.

- `bevy_pbr::add_clusters` is no longer an exclusive system

- the top level `bevy_ecs::schedule` module was replaced with `bevy_ecs::scheduling`

- `tick_global_task_pools_on_main_thread` is no longer run as an exclusive system. Instead, it has been replaced by `tick_global_task_pools`, which uses a `NonSend` resource to force running on the main thread.

## Migration Guide

- Calls to `.label(MyLabel)` should be replaced with `.in_set(MySet)`

- Stages have been removed. Replace these with system sets, and then add command flushes using the `apply_system_buffers` exclusive system where needed.

- The `CoreStage`, `StartupStage, `RenderStage` and `AssetStage` enums have been replaced with `CoreSet`, `StartupSet, `RenderSet` and `AssetSet`. The same scheduling guarantees have been preserved.

- Systems are no longer added to `CoreSet::Update` by default. Add systems manually if this behavior is needed, although you should consider adding your game logic systems to `CoreSchedule::FixedTimestep` instead for more reliable framerate-independent behavior.

- Similarly, startup systems are no longer part of `StartupSet::Startup` by default. In most cases, this won't matter to you.

- For example, `add_system_to_stage(CoreStage::PostUpdate, my_system)` should be replaced with

- `add_system(my_system.in_set(CoreSet::PostUpdate)`

- When testing systems or otherwise running them in a headless fashion, simply construct and run a schedule using `Schedule::new()` and `World::run_schedule` rather than constructing stages

- Run criteria have been renamed to run conditions. These can now be combined with each other and with states.

- Looping run criteria and state stacks have been removed. Use an exclusive system that runs a schedule if you need this level of control over system control flow.

- For app-level control flow over which schedules get run when (such as for rollback networking), create your own schedule and insert it under the `CoreSchedule::Outer` label.

- Fixed timesteps are now evaluated in a schedule, rather than controlled via run criteria. The `run_fixed_timestep` system runs this schedule between `CoreSet::First` and `CoreSet::PreUpdate` by default.

- Command flush points introduced by `AssetStage` have been removed. If you were relying on these, add them back manually.

- Adding extract systems is now typically done directly on the main app. Make sure the `RenderingAppExtension` trait is in scope, then call `app.add_extract_system(my_system)`.

- the `calculate_bounds` system, with the `CalculateBounds` label, is now in `CoreSet::Update`, rather than in `CoreSet::PostUpdate` before commands are applied. You may need to order your movement systems to occur before this system in order to avoid system order ambiguities in culling behavior.

- the `RenderLabel` `AppLabel` was renamed to `RenderApp` for clarity

- `App::add_state` now takes 0 arguments: the starting state is set based on the `Default` impl.

- Instead of creating `SystemSet` containers for systems that run in stages, simply use `.on_enter::<State::Variant>()` or its `on_exit` or `on_update` siblings.

- `SystemLabel` derives should be replaced with `SystemSet`. You will also need to add the `Debug`, `PartialEq`, `Eq`, and `Hash` traits to satisfy the new trait bounds.

- `with_run_criteria` has been renamed to `run_if`. Run criteria have been renamed to run conditions for clarity, and should now simply return a bool.

- States have been dramatically simplified: there is no longer a "state stack". To queue a transition to the next state, call `NextState::set`

## TODO

- [x] remove dead methods on App and World

- [x] add `App::add_system_to_schedule` and `App::add_systems_to_schedule`

- [x] avoid adding the default system set at inappropriate times

- [x] remove any accidental cycles in the default plugins schedule

- [x] migrate benchmarks

- [x] expose explicit labels for the built-in command flush points

- [x] migrate engine code

- [x] remove all mentions of stages from the docs

- [x] verify docs for States

- [x] fix uses of exclusive systems that use .end / .at_start / .before_commands

- [x] migrate RenderStage and AssetStage

- [x] migrate examples

- [x] ensure that transform propagation is exported in a sufficiently public way (the systems are already pub)

- [x] ensure that on_enter schedules are run at least once before the main app

- [x] re-enable opt-in to execution order ambiguities

- [x] revert change to `update_bounds` to ensure it runs in `PostUpdate`

- [x] test all examples

- [x] unbreak directional lights

- [x] unbreak shadows (see 3d_scene, 3d_shape, lighting, transparaency_3d examples)

- [x] game menu example shows loading screen and menu simultaneously

- [x] display settings menu is a blank screen

- [x] `without_winit` example panics

- [x] ensure all tests pass

- [x] SubApp doc test fails

- [x] runs_spawn_local tasks fails

- [x] [Fix panic_when_hierachy_cycle test hanging](https://github.com/alice-i-cecile/bevy/pull/120)

## Points of Difficulty and Controversy

**Reviewers, please give feedback on these and look closely**

1. Default sets, from the RFC, have been removed. These added a tremendous amount of implicit complexity and result in hard to debug scheduling errors. They're going to be tackled in the form of "base sets" by @cart in a followup.

2. The outer schedule controls which schedule is run when `App::update` is called.

3. I implemented `Label for `Box<dyn Label>` for our label types. This enables us to store schedule labels in concrete form, and then later run them. I ran into the same set of problems when working with one-shot systems. We've previously investigated this pattern in depth, and it does not appear to lead to extra indirection with nested boxes.

4. `SubApp::update` simply runs the default schedule once. This sucks, but this whole API is incomplete and this was the minimal changeset.

5. `time_system` and `tick_global_task_pools_on_main_thread` no longer use exclusive systems to attempt to force scheduling order

6. Implemetnation strategy for fixed timesteps

7. `AssetStage` was migrated to `AssetSet` without reintroducing command flush points. These did not appear to be used, and it's nice to remove these bottlenecks.

8. Migration of `bevy_render/lib.rs` and pipelined rendering. The logic here is unusually tricky, as we have complex scheduling requirements.

## Future Work (ideally before 0.10)

- Rename schedule_v3 module to schedule or scheduling

- Add a derive macro to states, and likely a `EnumIter` trait of some form

- Figure out what exactly to do with the "systems added should basically work by default" problem

- Improve ergonomics for working with fixed timesteps and states

- Polish FixedTime API to match Time

- Rebase and merge #7415

- Resolve all internal ambiguities (blocked on better tools, especially #7442)

- Add "base sets" to replace the removed default sets.

Co-authored-by: Robert Swain <robert.swain@gmail.com>

# Objective

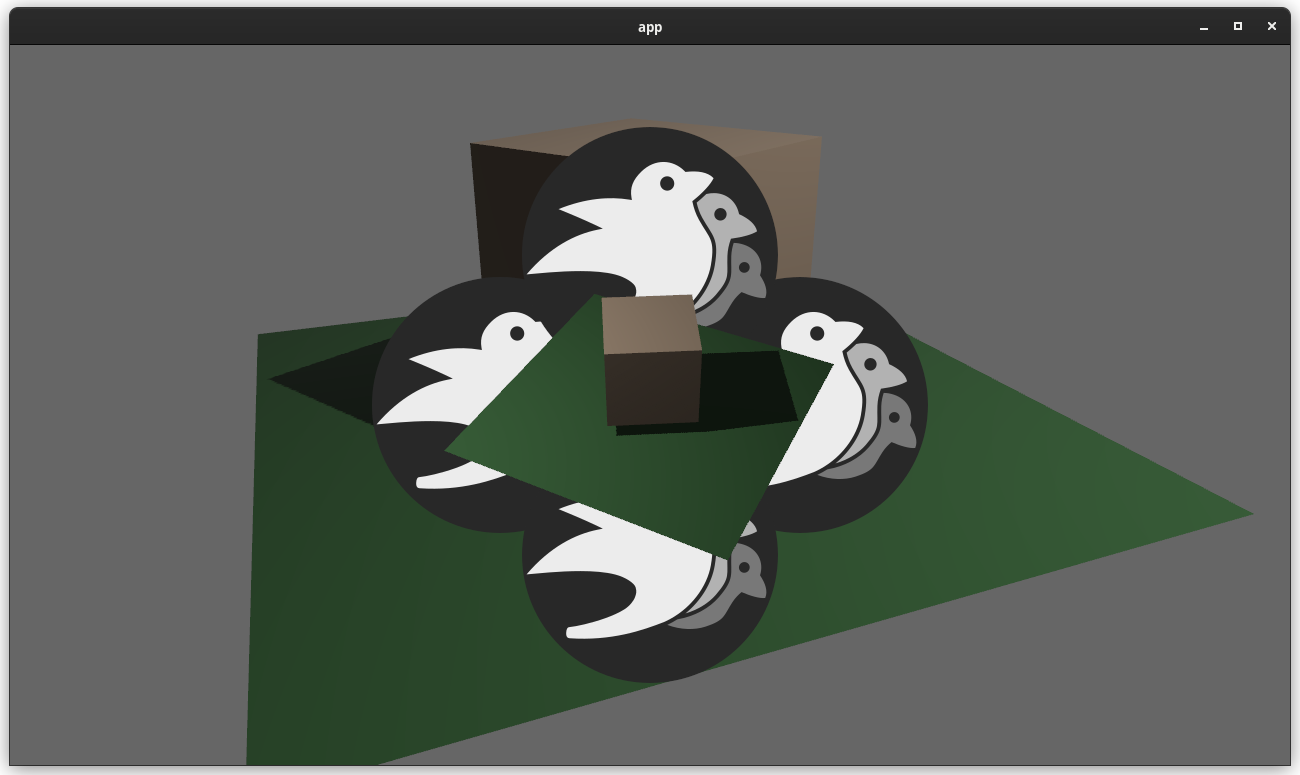

Implements cascaded shadow maps for directional lights, which produces better quality shadows without needing excessively large shadow maps.

Fixes#3629

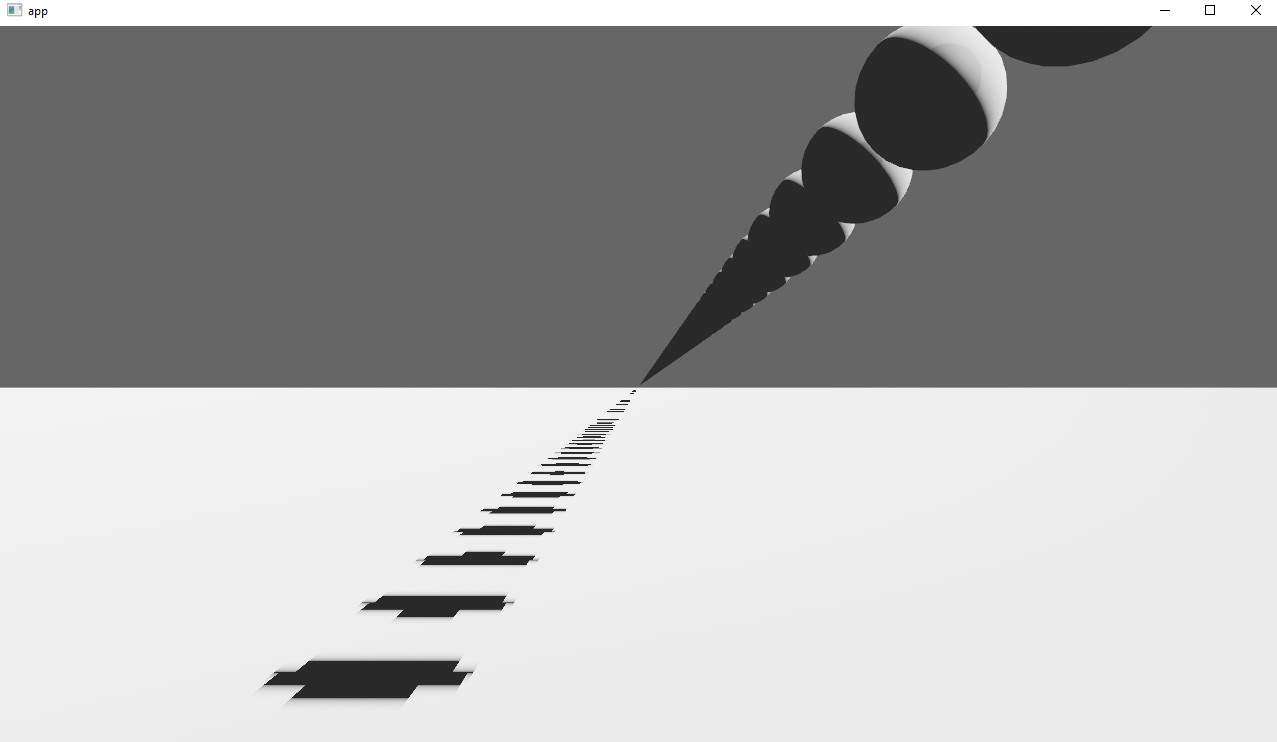

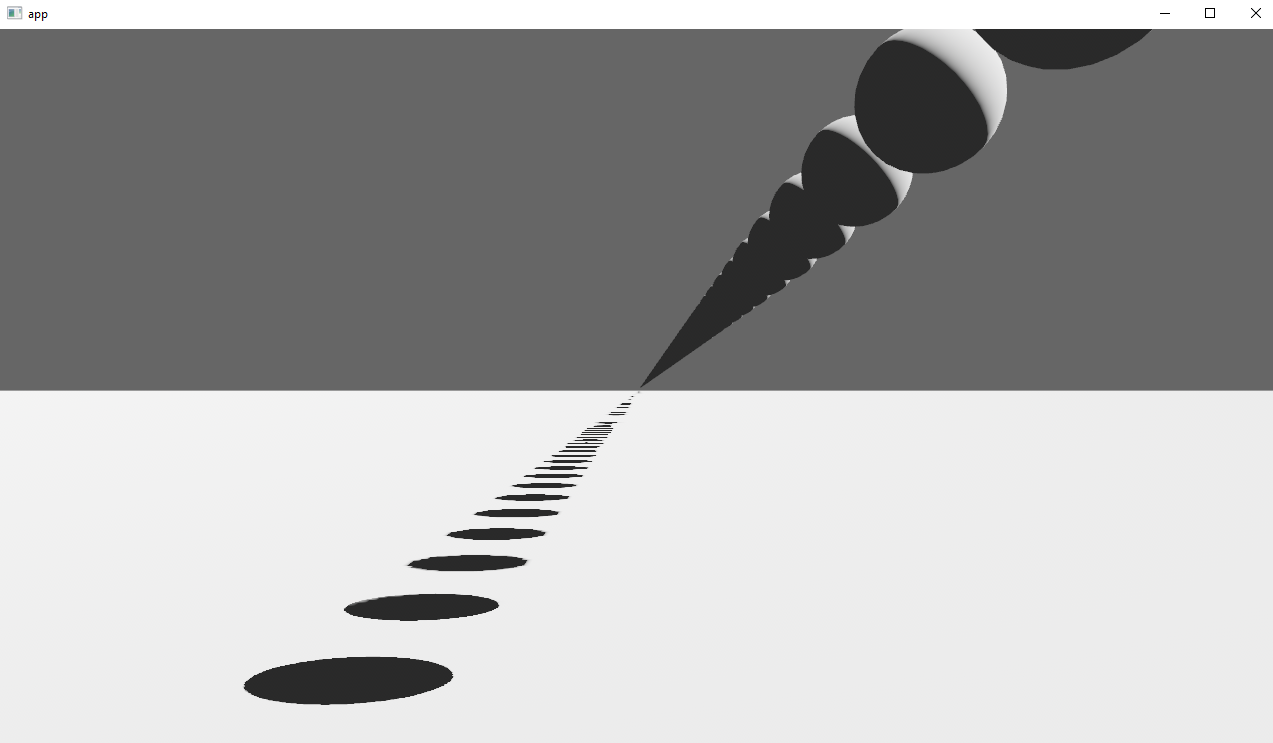

Before

After

## Solution

Rather than rendering a single shadow map for directional light, the view frustum is divided into a series of cascades, each of which gets its own shadow map. The correct cascade is then sampled for shadow determination.

---

## Changelog

Directional lights now use cascaded shadow maps for improved shadow quality.

## Migration Guide

You no longer have to manually specify a `shadow_projection` for a directional light, and these settings should be removed. If customization of how cascaded shadow maps work is desired, modify the `CascadeShadowConfig` component instead.

# Objective

Fixes#3184. Fixes#6640. Fixes#4798. Using `Query::par_for_each(_mut)` currently requires a `batch_size` parameter, which affects how it chunks up large archetypes and tables into smaller chunks to run in parallel. Tuning this value is difficult, as the performance characteristics entirely depends on the state of the `World` it's being run on. Typically, users will just use a flat constant and just tune it by hand until it performs well in some benchmarks. However, this is both error prone and risks overfitting the tuning on that benchmark.

This PR proposes a naive automatic batch-size computation based on the current state of the `World`.

## Background

`Query::par_for_each(_mut)` schedules a new Task for every archetype or table that it matches. Archetypes/tables larger than the batch size are chunked into smaller tasks. Assuming every entity matched by the query has an identical workload, this makes the worst case scenario involve using a batch size equal to the size of the largest matched archetype or table. Conversely, a batch size of `max {archetype, table} size / thread count * COUNT_PER_THREAD` is likely the sweetspot where the overhead of scheduling tasks is minimized, at least not without grouping small archetypes/tables together.

There is also likely a strict minimum batch size below which the overhead of scheduling these tasks is heavier than running the entire thing single-threaded.

## Solution

- [x] Remove the `batch_size` from `Query(State)::par_for_each` and friends.

- [x] Add a check to compute `batch_size = max {archeytpe/table} size / thread count * COUNT_PER_THREAD`

- [x] ~~Panic if thread count is 0.~~ Defer to `for_each` if the thread count is 1 or less.

- [x] Early return if there is no matched table/archetype.

- [x] Add override option for users have queries that strongly violate the initial assumption that all iterated entities have an equal workload.

---

## Changelog

Changed: `Query::par_for_each(_mut)` has been changed to `Query::par_iter(_mut)` and will now automatically try to produce a batch size for callers based on the current `World` state.

## Migration Guide

The `batch_size` parameter for `Query(State)::par_for_each(_mut)` has been removed. These calls will automatically compute a batch size for you. Remove these parameters from all calls to these functions.

Before:

```rust

fn parallel_system(query: Query<&MyComponent>) {

query.par_for_each(32, |comp| {

...

});

}

```

After:

```rust

fn parallel_system(query: Query<&MyComponent>) {

query.par_iter().for_each(|comp| {

...

});

}

```

Co-authored-by: Arnav Choubey <56453634+x-52@users.noreply.github.com>

Co-authored-by: Robert Swain <robert.swain@gmail.com>

Co-authored-by: François <mockersf@gmail.com>

Co-authored-by: Corey Farwell <coreyf@rwell.org>

Co-authored-by: Aevyrie <aevyrie@gmail.com>

# Objective

- Avoid slower than necessary first frame after spawning many entities due to them not having `Aabb`s and so being marked visible

- Avoids unnecessarily large system and VRAM allocations as a consequence

## Solution

- I noticed when debugging the `many_cubes` stress test in Xcode that the `MeshUniform` binding was much larger than it needed to be. I realised that this was because initially, all mesh entities are marked as being visible because they don't have `Aabb`s because `calculate_bounds` is being run in `PostUpdate` and there are no system commands applications before executing the visibility check systems that need the `Aabb`s. The solution then is to run the `calculate_bounds` system just before the previous system commands are applied which is at the end of the `Update` stage.

Consolidation of all the feedback about #6271 as well as the addition of an "unconditionally visible" mode.

# Objective

The current implementation of the `Visibility` struct simply wraps a boolean.. which seems like an odd pattern when rust has such nice enums that allow for more expression using pattern-matching.

Additionally as it stands Bevy only has two settings for visibility of an entity:

- "unconditionally hidden" `Visibility { is_visible: false }`,

- "inherit visibility from parent" `Visibility { is_visible: true }`

where a root level entity set to "inherit" is visible.

Note that given the behaviour, the current naming of the inner field is a little deceptive or unclear.

Using an enum for `Visibility` opens the door for adding an extra behaviour mode. This PR adds a new "unconditionally visible" mode, which causes an entity to be visible even if its Parent entity is hidden. There should not really be any performance cost to the addition of this new mode.

--

The recently added `toggle` method is removed in this PR, as its semantics could be confusing with 3 variants.

## Solution

Change the Visibility component into

```rust

enum Visibility {

Hidden, // unconditionally hidden

Visible, // unconditionally visible

Inherited, // inherit visibility from parent

}

```

---

## Changelog

### Changed

`Visibility` is now an enum

## Migration Guide

- evaluation of the `visibility.is_visible` field should now check for `visibility == Visibility::Inherited`.

- setting the `visibility.is_visible` field should now directly set the value: `*visibility = Visibility::Inherited`.

- usage of `Visibility::VISIBLE` or `Visibility::INVISIBLE` should now use `Visibility::Inherited` or `Visibility::Hidden` respectively.

- `ComputedVisibility::INVISIBLE` and `SpatialBundle::VISIBLE_IDENTITY` have been renamed to `ComputedVisibility::HIDDEN` and `SpatialBundle::INHERITED_IDENTITY` respectively.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

Many types in `bevy_render` implemented `Reflect` but were not registered.

## Solution

Register all types in `bevy_render` that impl `Reflect`.

This also registers additional dependent types (i.e. field types).

> Note: Adding these dependent types would not be needed using something like #5781😉

---

## Changelog

- Register missing `bevy_render` types in the `TypeRegistry`:

- `camera::RenderTarget`

- `globals::GlobalsUniform`

- `texture::Image`

- `view::ComputedVisibility`

- `view::Visibility`

- `view::VisibleEntities`

- Register additional dependent types:

- `view::ComputedVisibilityFlags`

- `Vec<Entity>`

# Objective

`ComputedVisibility` could afford to be smaller/faster. Optimizing the size and performance of operations on the component will positively benefit almost all extraction systems.

This was listed as one of the potential pieces of future work for #5310.

## Solution

Merge both internal booleans into a single `u8` bitflag field. Rely on bitmasks to evaluate local, hierarchical, and general visibility.

Pros:

- `ComputedVisibility::is_visible` should be a single bitmask test instead of two.

- `ComputedVisibility` is now only 1 byte. Should be able to fit 100% more per cache line when using dense iteration.

Cons:

- Harder to read.

- Setting individual values inside `ComputedVisiblity` require bitmask mutations.

This should be a non-breaking change. No public API was changed. The only publicly visible effect is that `ComputedVisibility` is now 1 byte instead of 2.

# Objective

Bevy's internal plugins have lots of execution-order ambiguities, which makes the ambiguity detection tool very noisy for our users.

## Solution

Silence every last ambiguity that can currently be resolved.

Each time an ambiguity is silenced, it is accompanied by a comment describing why it is correct. This description should be based on the public API of the respective systems. Thus, I have added documentation to some systems describing how they use some resources.

# Future work

Some ambiguities remain, due to issues out of scope for this PR.

* The ambiguity checker does not respect `Without<>` filters, leading to false positives.

* Ambiguities between `bevy_ui` and `bevy_animation` cannot be resolved, since neither crate knows that the other exists. We will need a general solution to this problem.

# Objective

Make toggling the visibility of an entity slightly more convenient.

## Solution

Add a mutating `toggle` method to the `Visibility` component

```rust

fn my_system(mut query: Query<&mut Visibility, With<SomeMarker>>) {

let mut visibility = query.single_mut();

// before:

visibility.is_visible = !visibility.is_visible;

// after:

visibility.toggle();

}

```

## Changelog

### Added

- Added a mutating `toggle` method to the `Visibility` component

# Objective

- Reflecting `Default` is required for scripts to create `Reflect` types at runtime with no static type information.

- Reflecting `Default` on `Handle<T>` and `ComputedVisibility` should allow scripts from `bevy_mod_js_scripting` to actually spawn sprites from scratch, without needing any hand-holding from the host-game.

## Solution

- Derive `ReflectDefault` for `Handle<T>` and `ComputedVisiblity`.

---

## Changelog

> This section is optional. If this was a trivial fix, or has no externally-visible impact, you can delete this section.

- The `Default` trait is now reflected for `Handle<T>` and `ComputedVisibility`

# Objective

Now that we can consolidate Bundles and Components under a single insert (thanks to #2975 and #6039), almost 100% of world spawns now look like `world.spawn().insert((Some, Tuple, Here))`. Spawning an entity without any components is an extremely uncommon pattern, so it makes sense to give spawn the "first class" ergonomic api. This consolidated api should be made consistent across all spawn apis (such as World and Commands).

## Solution

All `spawn` apis (`World::spawn`, `Commands:;spawn`, `ChildBuilder::spawn`, and `WorldChildBuilder::spawn`) now accept a bundle as input:

```rust

// before:

commands

.spawn()

.insert((A, B, C));

world

.spawn()

.insert((A, B, C);

// after

commands.spawn((A, B, C));

world.spawn((A, B, C));

```

All existing instances of `spawn_bundle` have been deprecated in favor of the new `spawn` api. A new `spawn_empty` has been added, replacing the old `spawn` api.

By allowing `world.spawn(some_bundle)` to replace `world.spawn().insert(some_bundle)`, this opened the door to removing the initial entity allocation in the "empty" archetype / table done in `spawn()` (and subsequent move to the actual archetype in `.insert(some_bundle)`).

This improves spawn performance by over 10%:

To take this measurement, I added a new `world_spawn` benchmark.

Unfortunately, optimizing `Commands::spawn` is slightly less trivial, as Commands expose the Entity id of spawned entities prior to actually spawning. Doing the optimization would (naively) require assurances that the `spawn(some_bundle)` command is applied before all other commands involving the entity (which would not necessarily be true, if memory serves). Optimizing `Commands::spawn` this way does feel possible, but it will require careful thought (and maybe some additional checks), which deserves its own PR. For now, it has the same performance characteristics of the current `Commands::spawn_bundle` on main.

**Note that 99% of this PR is simple renames and refactors. The only code that needs careful scrutiny is the new `World::spawn()` impl, which is relatively straightforward, but it has some new unsafe code (which re-uses battle tested BundlerSpawner code path).**

---

## Changelog

- All `spawn` apis (`World::spawn`, `Commands:;spawn`, `ChildBuilder::spawn`, and `WorldChildBuilder::spawn`) now accept a bundle as input

- All instances of `spawn_bundle` have been deprecated in favor of the new `spawn` api

- World and Commands now have `spawn_empty()`, which is equivalent to the old `spawn()` behavior.

## Migration Guide

```rust

// Old (0.8):

commands

.spawn()

.insert_bundle((A, B, C));

// New (0.9)

commands.spawn((A, B, C));

// Old (0.8):

commands.spawn_bundle((A, B, C));

// New (0.9)

commands.spawn((A, B, C));

// Old (0.8):

let entity = commands.spawn().id();

// New (0.9)

let entity = commands.spawn_empty().id();

// Old (0.8)

let entity = world.spawn().id();

// New (0.9)

let entity = world.spawn_empty();

```

# Objective

Take advantage of the "impl Bundle for Component" changes in #2975 / add the follow up changes discussed there.

## Solution

- Change `insert` and `remove` to accept a Bundle instead of a Component (for both Commands and World)

- Deprecate `insert_bundle`, `remove_bundle`, and `remove_bundle_intersection`

- Add `remove_intersection`

---

## Changelog

- Change `insert` and `remove` now accept a Bundle instead of a Component (for both Commands and World)

- `insert_bundle` and `remove_bundle` are deprecated

## Migration Guide

Replace `insert_bundle` with `insert`:

```rust

// Old (0.8)

commands.spawn().insert_bundle(SomeBundle::default());

// New (0.9)

commands.spawn().insert(SomeBundle::default());

```

Replace `remove_bundle` with `remove`:

```rust

// Old (0.8)

commands.entity(some_entity).remove_bundle::<SomeBundle>();

// New (0.9)

commands.entity(some_entity).remove::<SomeBundle>();

```

Replace `remove_bundle_intersection` with `remove_intersection`:

```rust

// Old (0.8)

world.entity_mut(some_entity).remove_bundle_intersection::<SomeBundle>();

// New (0.9)

world.entity_mut(some_entity).remove_intersection::<SomeBundle>();

```

Consider consolidating as many operations as possible to improve ergonomics and cut down on archetype moves:

```rust

// Old (0.8)

commands.spawn()

.insert_bundle(SomeBundle::default())

.insert(SomeComponent);

// New (0.9) - Option 1

commands.spawn().insert((

SomeBundle::default(),

SomeComponent,

))

// New (0.9) - Option 2

commands.spawn_bundle((

SomeBundle::default(),

SomeComponent,

))

```

## Next Steps

Consider changing `spawn` to accept a bundle and deprecate `spawn_bundle`.

# Objective

Since `identity` is a const fn that takes no arguments it seems logical to make it an associated constant.

This is also more in line with types from glam (eg. `Quat::IDENTITY`).

## Migration Guide

The method `identity()` on `Transform`, `GlobalTransform` and `TransformBundle` has been deprecated.

Use the associated constant `IDENTITY` instead.

Co-authored-by: devil-ira <justthecooldude@gmail.com>

# Objective

Fix a nasty system ordering bug between `update_frusta` and `camera_system` that lead to incorrect frustum s, leading to excessive culling and extremely hard-to-debug visual glitches

## Solution

- add explicit system ordering

# Objective

Sadly, #4944 introduces a serious exponential despawn behavior, which cannot be included in 0.8. [Handling AABBs properly is a controversial topic](https://github.com/bevyengine/bevy/pull/5423#issuecomment-1199995825) and one that deserves more time than the day we have left before release.

## Solution

This reverts commit c2b332f98a.

# Objective

Update the `calculate_bounds` system to update `Aabb`s

for entities who've either:

- gotten a new mesh

- had their mesh mutated

Fixes https://github.com/bevyengine/bevy/issues/4294.

## Solution

There are two commits here to address the two issues above:

### Commit 1

**This Commit**

Updates the `calculate_bounds` system to operate not only on entities

without `Aabb`s but also on entities whose `Handle<Mesh>` has changed.

**Why?**

So if an entity gets a new mesh, its associated `Aabb` is properly

recalculated.

**Questions**

- This type is getting pretty gnarly - should I extract some types?

- This system is public - should I add some quick docs while I'm here?

### Commit 2

**This Commit**

Updates `calculate_bounds` to update `Aabb`s of entities whose meshes

have been directly mutated.

**Why?**

So if an entity's mesh gets updated, its associated `Aabb` is properly

recalculated.

**Questions**

- I think we should be using `ahash`. Do we want to do that with a

direct `hashbrown` dependency or an `ahash` dependency that we

configure the `HashMap` with?

- There is an edge case of duplicates with `Vec<Entity>` in the

`HashMap`. If an entity gets its mesh handle changed and changed back

again it'll be added to the list twice. Do we want to use a `HashSet`

to avoid that? Or do a check in the list first (assuming iterating

over the `Vec` is faster and this edge case is rare)?

- There is an edge case where, if an entity gets a new mesh handle and

then its old mesh is updated, we'll update the entity's `Aabb` to the

new geometry of the _old_ mesh. Do we want to remove items from the

`Local<HashMap>` when handles change? Does the `Changed` event give us

the old mesh handle? If not we might need to have a

`HashMap<Entity, Handle<Mesh>>` or something so we can unlink entities

from mesh handles when the handle changes.

- I did the `zip()` with the two `HashMap` gets assuming those would

be faster than calculating the Aabb of the mesh (otherwise we could do

`meshes.get(mesh_handle).and_then(Mesh::compute_aabb).zip(entity_mesh_map...)`

or something). Is that assumption way off?

## Testing

I originally tried testing this with `bevy_mod_raycast` as mentioned in the

original issue but it seemed to work (maybe they are currently manually

updating the Aabbs?). I then tried doing it in 2D but it looks like

`Handle<Mesh>` is just for 3D. So I took [this example](https://github.com/bevyengine/bevy/blob/main/examples/3d/pbr.rs)

and added some systems to mutate/assign meshes:

<details>

<summary>Test Script</summary>

```rust

use bevy::prelude::*;

use bevy::render:📷:ScalingMode;

use bevy::render::primitives::Aabb;

/// Make sure we only mutate one mesh once.

#[derive(Eq, PartialEq, Clone, Debug, Default)]

struct MutateMeshState(bool);

/// Let's have a few global meshes that we can cycle between.

/// This way we can be assigned a new mesh, mutate the old one, and then get the old one assigned.

#[derive(Eq, PartialEq, Clone, Debug, Default)]

struct Meshes(Vec<Handle<Mesh>>);

fn main() {

App::new()

.add_plugins(DefaultPlugins)

.init_resource::<MutateMeshState>()

.init_resource::<Meshes>()

.add_startup_system(setup)

.add_system(assign_new_mesh)

.add_system(show_aabbs.after(assign_new_mesh))

.add_system(mutate_meshes.after(show_aabbs))

.run();

}

fn setup(

mut commands: Commands,

mut meshes: ResMut<Assets<Mesh>>,

mut global_meshes: ResMut<Meshes>,

mut materials: ResMut<Assets<StandardMaterial>>,

) {

let m1 = meshes.add(Mesh::from(shape::Icosphere::default()));

let m2 = meshes.add(Mesh::from(shape::Icosphere {

radius: 0.90,

..Default::default()

}));

let m3 = meshes.add(Mesh::from(shape::Icosphere {

radius: 0.80,

..Default::default()

}));

global_meshes.0.push(m1.clone());

global_meshes.0.push(m2);

global_meshes.0.push(m3);

// add entities to the world

// sphere

commands.spawn_bundle(PbrBundle {

mesh: m1,

material: materials.add(StandardMaterial {

base_color: Color::hex("ffd891").unwrap(),

..default()

}),

..default()

});

// new 3d camera

commands.spawn_bundle(Camera3dBundle {

projection: OrthographicProjection {

scale: 3.0,

scaling_mode: ScalingMode::FixedVertical(1.0),

..default()

}

.into(),

..default()

});

// old 3d camera

// commands.spawn_bundle(OrthographicCameraBundle {

// transform: Transform::from_xyz(0.0, 0.0, 8.0).looking_at(Vec3::default(), Vec3::Y),

// orthographic_projection: OrthographicProjection {

// scale: 0.01,

// ..default()

// },

// ..OrthographicCameraBundle::new_3d()

// });

}

fn show_aabbs(query: Query<(Entity, &Handle<Mesh>, &Aabb)>) {

for thing in query.iter() {

println!("{thing:?}");

}

}

/// For testing the second part - mutating a mesh.

///

/// Without the fix we should see this mutate an old mesh and it affects the new mesh that the

/// entity currently has.

/// With the fix, the mutation doesn't affect anything until the entity is reassigned the old mesh.

fn mutate_meshes(

mut meshes: ResMut<Assets<Mesh>>,

time: Res<Time>,

global_meshes: Res<Meshes>,

mut mutate_mesh_state: ResMut<MutateMeshState>,

) {

let mutated = mutate_mesh_state.0;

if time.seconds_since_startup() > 4.5 && !mutated {

println!("Mutating {:?}", global_meshes.0[0]);

let m = meshes.get_mut(&global_meshes.0[0]).unwrap();

let mut p = m.attribute(Mesh::ATTRIBUTE_POSITION).unwrap().clone();

use bevy::render::mesh::VertexAttributeValues;

match &mut p {

VertexAttributeValues::Float32x3(v) => {

v[0] = [10.0, 10.0, 10.0];

}

_ => unreachable!(),

}

m.insert_attribute(Mesh::ATTRIBUTE_POSITION, p);

mutate_mesh_state.0 = true;

}

}

/// For testing the first part - assigning a new handle.

fn assign_new_mesh(

mut query: Query<&mut Handle<Mesh>, With<Aabb>>,

time: Res<Time>,

global_meshes: Res<Meshes>,

) {

let s = time.seconds_since_startup() as usize;

let idx = s % global_meshes.0.len();

for mut handle in query.iter_mut() {

*handle = global_meshes.0[idx].clone_weak();

}

}

```

</details>

## Changelog

### Fixed

Entity `Aabb`s not updating when meshes are mutated or re-assigned.

# Objective

- Help user when they need to add both a `TransformBundle` and a `VisibilityBundle`

## Solution

- Add a `SpatialBundle` adding all components

# Objective

- Add capability to use `Affine3A`s for some `GlobalTransform`s. This allows affine transformations that are not possible using a single `Transform` such as shear and non-uniform scaling along an arbitrary axis.

- Related to #1755 and #2026

## Solution

- `GlobalTransform` becomes an enum wrapping either a `Transform` or an `Affine3A`.

- The API of `GlobalTransform` is minimized to avoid inefficiency, and to make it clear that operations should be performed using the underlying data types.

- using `GlobalTransform::Affine3A` disables transform propagation, because the main use is for cases that `Transform`s cannot support.

---

## Changelog

- `GlobalTransform`s can optionally support any affine transformation using an `Affine3A`.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

Fixes#4907. Fixes#838. Fixes#5089.

Supersedes #5146. Supersedes #2087. Supersedes #865. Supersedes #5114

Visibility is currently entirely local. Set a parent entity to be invisible, and the children are still visible. This makes it hard for users to hide entire hierarchies of entities.

Additionally, the semantics of `Visibility` vs `ComputedVisibility` are inconsistent across entity types. 3D meshes use `ComputedVisibility` as the "definitive" visibility component, with `Visibility` being just one data source. Sprites just use `Visibility`, which means they can't feed off of `ComputedVisibility` data, such as culling information, RenderLayers, and (added in this pr) visibility inheritance information.

## Solution

Splits `ComputedVisibilty::is_visible` into `ComputedVisibilty::is_visible_in_view` and `ComputedVisibilty::is_visible_in_hierarchy`. For each visible entity, `is_visible_in_hierarchy` is computed by propagating visibility down the hierarchy. The `ComputedVisibility::is_visible()` function combines these two booleans for the canonical "is this entity visible" function.

Additionally, all entities that have `Visibility` now also have `ComputedVisibility`. Sprites, Lights, and UI entities now use `ComputedVisibility` when appropriate.

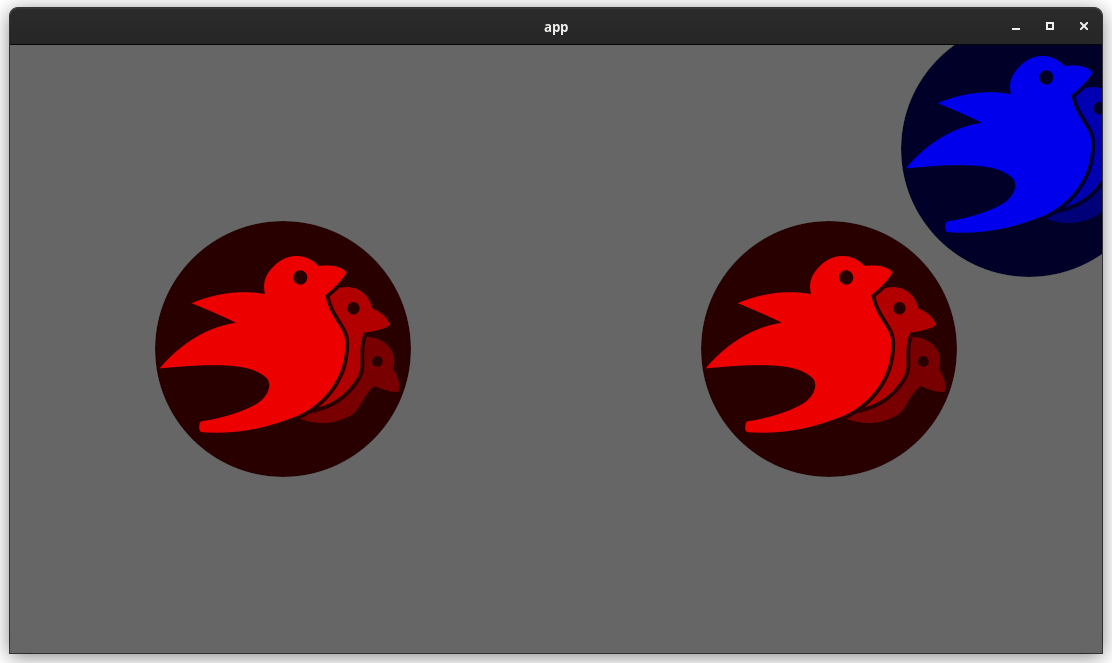

This means that in addition to visibility inheritance, everything using Visibility now also supports RenderLayers. Notably, Sprites (and other 2d objects) now support `RenderLayers` and work properly across multiple views.

Also note that this does increase the amount of work done per sprite. Bevymark with 100,000 sprites on `main` runs in `0.017612` seconds and this runs in `0.01902`. That is certainly a gap, but I believe the api consistency and extra functionality this buys us is worth it. See [this thread](https://github.com/bevyengine/bevy/pull/5146#issuecomment-1182783452) for more info. Note that #5146 in combination with #5114 _are_ a viable alternative to this PR and _would_ perform better, but that comes at the cost of api inconsistencies and doing visibility calculations in the "wrong" place. The current visibility system does have potential for performance improvements. I would prefer to evolve that one system as a whole rather than doing custom hacks / different behaviors for each feature slice.

Here is a "split screen" example where the left camera uses RenderLayers to filter out the blue sprite.

Note that this builds directly on #5146 and that @james7132 deserves the credit for the baseline visibility inheritance work. This pr moves the inherited visibility field into `ComputedVisibility`, then does the additional work of porting everything to `ComputedVisibility`. See my [comments here](https://github.com/bevyengine/bevy/pull/5146#issuecomment-1182783452) for rationale.

## Follow up work

* Now that lights use ComputedVisibility, VisibleEntities now includes "visible lights" in the entity list. Functionally not a problem as we use queries to filter the list down in the desired context. But we should consider splitting this out into a separate`VisibleLights` collection for both clarity and performance reasons. And _maybe_ even consider scoping `VisibleEntities` down to `VisibleMeshes`?.

* Investigate alternative sprite rendering impls (in combination with visibility system tweaks) that avoid re-generating a per-view fixedbitset of visible entities every frame, then checking each ExtractedEntity. This is where most of the performance overhead lives. Ex: we could generate ExtractedEntities per-view using the VisibleEntities list, avoiding the need for the bitset.

* Should ComputedVisibility use bitflags under the hood? This would cut down on the size of the component, potentially speed up the `is_visible()` function, and allow us to cheaply expand ComputedVisibility with more data (ex: split out local visibility and parent visibility, add more culling classes, etc).

---

## Changelog

* ComputedVisibility now takes hierarchy visibility into account.

* 2D, UI and Light entities now use the ComputedVisibility component.

## Migration Guide

If you were previously reading `Visibility::is_visible` as the "actual visibility" for sprites or lights, use `ComputedVisibilty::is_visible()` instead:

```rust

// before (0.7)

fn system(query: Query<&Visibility>) {

for visibility in query.iter() {

if visibility.is_visible {

log!("found visible entity");

}

}

}

// after (0.8)

fn system(query: Query<&ComputedVisibility>) {

for visibility in query.iter() {

if visibility.is_visible() {

log!("found visible entity");

}

}

}

```

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

Remove unnecessary calls to `iter()`/`iter_mut()`.

Mainly updates the use of queries in our code, docs, and examples.

```rust

// From

for _ in list.iter() {

for _ in list.iter_mut() {

// To

for _ in &list {

for _ in &mut list {

```

We already enable the pedantic lint [clippy::explicit_iter_loop](https://rust-lang.github.io/rust-clippy/stable/) inside of Bevy. However, this only warns for a few known types from the standard library.

## Note for reviewers

As you can see the additions and deletions are exactly equal.

Maybe give it a quick skim to check I didn't sneak in a crypto miner, but you don't have to torture yourself by reading every line.

I already experienced enough pain making this PR :)

Co-authored-by: devil-ira <justthecooldude@gmail.com>

# Objective

Further speed up visibility checking by removing the main sources of contention for the system.

## Solution

- ~~Make `ComputedVisibility` a resource wrapping a `FixedBitset`.~~

- ~~Remove `ComputedVisibility` as a component.~~

~~This adds a one-bit overhead to every entity in the app world. For a game with 100,000 entities, this is 12.5KB of memory. This is still small enough to fit entirely in most L1 caches. Also removes the need for a per-Entity change detection tick. This reduces the memory footprint of ComputedVisibility 72x.~~

~~The decreased memory usage and less fragmented memory locality should provide significant performance benefits.~~

~~Clearing visible entities should be significantly faster than before:~~

- ~~Setting one `u32` to 0 clears 32 entities per cycle.~~

- ~~No archetype fragmentation to contend with.~~

- ~~Change detection is applied to the resource, so there is no per-Entity update tick requirement.~~

~~The side benefit of this design is that it removes one more "computed component" from userspace. Though accessing the values within it are now less ergonomic.~~

This PR changes `crossbeam_channel` in `check_visibility` to use a `Local<ThreadLocal<Cell<Vec<Entity>>>` to mark down visible entities instead.

Co-Authored-By: TheRawMeatball <therawmeatball@gmail.com>

Co-Authored-By: Aevyrie <aevyrie@gmail.com>

# Objective

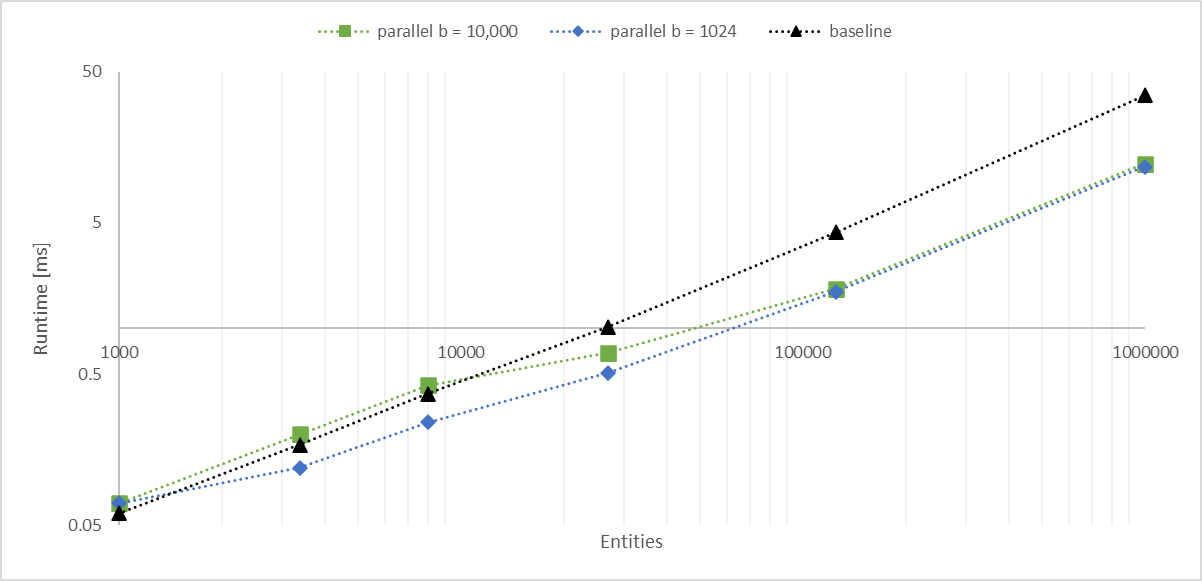

Working with a large number of entities with `Aabbs`, rendered with an instanced shader, I found the bottleneck became the frustum culling system. The goal of this PR is to significantly improve culling performance without any major changes. We should consider constructing a BVH for more substantial improvements.

## Solution

- Convert the inner entity query to a parallel iterator with `par_for_each_mut` using a batch size of 1,024.

- This outperforms single threaded culling when there are more than 1,000 entities.

- Below this they are approximately equal, with <= 10 microseconds of multithreading overhead.

- Above this, the multithreaded version is significantly faster, scaling linearly with core count.

- In my million-entity-workload, this PR improves my framerate by 200% - 300%.

## log-log of `check_visibility` time vs. entities for single/multithreaded

---

## Changelog

Frustum culling is now run with a parallel query. When culling more than a thousand entities, this is faster than the previous method, scaling proportionally with the number of available cores.

This adds "high level camera driven rendering" to Bevy. The goal is to give users more control over what gets rendered (and where) without needing to deal with render logic. This will make scenarios like "render to texture", "multiple windows", "split screen", "2d on 3d", "3d on 2d", "pass layering", and more significantly easier.

Here is an [example of a 2d render sandwiched between two 3d renders (each from a different perspective)](https://gist.github.com/cart/4fe56874b2e53bc5594a182fc76f4915):

Users can now spawn a camera, point it at a RenderTarget (a texture or a window), and it will "just work".

Rendering to a second window is as simple as spawning a second camera and assigning it to a specific window id:

```rust

// main camera (main window)

commands.spawn_bundle(Camera2dBundle::default());

// second camera (other window)

commands.spawn_bundle(Camera2dBundle {

camera: Camera {

target: RenderTarget::Window(window_id),

..default()

},

..default()

});

```

Rendering to a texture is as simple as pointing the camera at a texture:

```rust

commands.spawn_bundle(Camera2dBundle {

camera: Camera {

target: RenderTarget::Texture(image_handle),

..default()

},

..default()

});

```

Cameras now have a "render priority", which controls the order they are drawn in. If you want to use a camera's output texture as a texture in the main pass, just set the priority to a number lower than the main pass camera (which defaults to `0`).

```rust

// main pass camera with a default priority of 0

commands.spawn_bundle(Camera2dBundle::default());

commands.spawn_bundle(Camera2dBundle {

camera: Camera {

target: RenderTarget::Texture(image_handle.clone()),

priority: -1,

..default()

},

..default()

});

commands.spawn_bundle(SpriteBundle {

texture: image_handle,

..default()

})

```

Priority can also be used to layer to cameras on top of each other for the same RenderTarget. This is what "2d on top of 3d" looks like in the new system:

```rust

commands.spawn_bundle(Camera3dBundle::default());

commands.spawn_bundle(Camera2dBundle {

camera: Camera {

// this will render 2d entities "on top" of the default 3d camera's render

priority: 1,

..default()

},

..default()

});

```

There is no longer the concept of a global "active camera". Resources like `ActiveCamera<Camera2d>` and `ActiveCamera<Camera3d>` have been replaced with the camera-specific `Camera::is_active` field. This does put the onus on users to manage which cameras should be active.

Cameras are now assigned a single render graph as an "entry point", which is configured on each camera entity using the new `CameraRenderGraph` component. The old `PerspectiveCameraBundle` and `OrthographicCameraBundle` (generic on camera marker components like Camera2d and Camera3d) have been replaced by `Camera3dBundle` and `Camera2dBundle`, which set 3d and 2d default values for the `CameraRenderGraph` and projections.

```rust

// old 3d perspective camera

commands.spawn_bundle(PerspectiveCameraBundle::default())

// new 3d perspective camera

commands.spawn_bundle(Camera3dBundle::default())

```

```rust

// old 2d orthographic camera

commands.spawn_bundle(OrthographicCameraBundle::new_2d())

// new 2d orthographic camera

commands.spawn_bundle(Camera2dBundle::default())

```

```rust

// old 3d orthographic camera

commands.spawn_bundle(OrthographicCameraBundle::new_3d())

// new 3d orthographic camera

commands.spawn_bundle(Camera3dBundle {

projection: OrthographicProjection {

scale: 3.0,

scaling_mode: ScalingMode::FixedVertical,

..default()

}.into(),

..default()

})

```

Note that `Camera3dBundle` now uses a new `Projection` enum instead of hard coding the projection into the type. There are a number of motivators for this change: the render graph is now a part of the bundle, the way "generic bundles" work in the rust type system prevents nice `..default()` syntax, and changing projections at runtime is much easier with an enum (ex for editor scenarios). I'm open to discussing this choice, but I'm relatively certain we will all come to the same conclusion here. Camera2dBundle and Camera3dBundle are much clearer than being generic on marker components / using non-default constructors.

If you want to run a custom render graph on a camera, just set the `CameraRenderGraph` component:

```rust

commands.spawn_bundle(Camera3dBundle {

camera_render_graph: CameraRenderGraph::new(some_render_graph_name),

..default()

})

```

Just note that if the graph requires data from specific components to work (such as `Camera3d` config, which is provided in the `Camera3dBundle`), make sure the relevant components have been added.

Speaking of using components to configure graphs / passes, there are a number of new configuration options:

```rust

commands.spawn_bundle(Camera3dBundle {

camera_3d: Camera3d {

// overrides the default global clear color

clear_color: ClearColorConfig::Custom(Color::RED),

..default()

},

..default()

})

commands.spawn_bundle(Camera3dBundle {

camera_3d: Camera3d {

// disables clearing

clear_color: ClearColorConfig::None,

..default()

},

..default()

})

```

Expect to see more of the "graph configuration Components on Cameras" pattern in the future.

By popular demand, UI no longer requires a dedicated camera. `UiCameraBundle` has been removed. `Camera2dBundle` and `Camera3dBundle` now both default to rendering UI as part of their own render graphs. To disable UI rendering for a camera, disable it using the CameraUi component:

```rust

commands

.spawn_bundle(Camera3dBundle::default())

.insert(CameraUi {

is_enabled: false,

..default()

})

```

## Other Changes

* The separate clear pass has been removed. We should revisit this for things like sky rendering, but I think this PR should "keep it simple" until we're ready to properly support that (for code complexity and performance reasons). We can come up with the right design for a modular clear pass in a followup pr.

* I reorganized bevy_core_pipeline into Core2dPlugin and Core3dPlugin (and core_2d / core_3d modules). Everything is pretty much the same as before, just logically separate. I've moved relevant types (like Camera2d, Camera3d, Camera3dBundle, Camera2dBundle) into their relevant modules, which is what motivated this reorganization.

* I adapted the `scene_viewer` example (which relied on the ActiveCameras behavior) to the new system. I also refactored bits and pieces to be a bit simpler.

* All of the examples have been ported to the new camera approach. `render_to_texture` and `multiple_windows` are now _much_ simpler. I removed `two_passes` because it is less relevant with the new approach. If someone wants to add a new "layered custom pass with CameraRenderGraph" example, that might fill a similar niche. But I don't feel much pressure to add that in this pr.

* Cameras now have `target_logical_size` and `target_physical_size` fields, which makes finding the size of a camera's render target _much_ simpler. As a result, the `Assets<Image>` and `Windows` parameters were removed from `Camera::world_to_screen`, making that operation much more ergonomic.

* Render order ambiguities between cameras with the same target and the same priority now produce a warning. This accomplishes two goals:

1. Now that there is no "global" active camera, by default spawning two cameras will result in two renders (one covering the other). This would be a silent performance killer that would be hard to detect after the fact. By detecting ambiguities, we can provide a helpful warning when this occurs.

2. Render order ambiguities could result in unexpected / unpredictable render results. Resolving them makes sense.

## Follow Up Work

* Per-Camera viewports, which will make it possible to render to a smaller area inside of a RenderTarget (great for something like splitscreen)

* Camera-specific MSAA config (should use the same "overriding" pattern used for ClearColor)

* Graph Based Camera Ordering: priorities are simple, but they make complicated ordering constraints harder to express. We should consider adopting a "graph based" camera ordering model with "before" and "after" relationships to other cameras (or build it "on top" of the priority system).

* Consider allowing graphs to run subgraphs from any nest level (aka a global namespace for graphs). Right now the 2d and 3d graphs each need their own UI subgraph, which feels "fine" in the short term. But being able to share subgraphs between other subgraphs seems valuable.

* Consider splitting `bevy_core_pipeline` into `bevy_core_2d` and `bevy_core_3d` packages. Theres a shared "clear color" dependency here, which would need a new home.

### Problem

It currently isn't possible to construct the default value of a reflected type. Because of that, it isn't possible to use `add_component` of `ReflectComponent` to add a new component to an entity because you can't know what the initial value should be.

### Solution

1. add `ReflectDefault` type

```rust

#[derive(Clone)]

pub struct ReflectDefault {

default: fn() -> Box<dyn Reflect>,

}

impl ReflectDefault {

pub fn default(&self) -> Box<dyn Reflect> {

(self.default)()

}

}

impl<T: Reflect + Default> FromType<T> for ReflectDefault {

fn from_type() -> Self {

ReflectDefault {

default: || Box::new(T::default()),

}

}

}

```

2. add `#[reflect(Default)]` to all component types that implement `Default` and are user facing (so not `ComputedSize`, `CubemapVisibleEntities` etc.)

This makes it possible to add the default value of a component to an entity without any compile-time information:

```rust

fn main() {

let mut app = App::new();

app.register_type::<Camera>();

let type_registry = app.world.get_resource::<TypeRegistry>().unwrap();

let type_registry = type_registry.read();

let camera_registration = type_registry.get(std::any::TypeId::of::<Camera>()).unwrap();

let reflect_default = camera_registration.data::<ReflectDefault>().unwrap();

let reflect_component = camera_registration

.data::<ReflectComponent>()

.unwrap()

.clone();

let default = reflect_default.default();

drop(type_registry);

let entity = app.world.spawn().id();

reflect_component.add_component(&mut app.world, entity, &*default);

let camera = app.world.entity(entity).get::<Camera>().unwrap();

dbg!(&camera);

}

```

### Open questions

- should we have `ReflectDefault` or `ReflectFromWorld` or both?

# Objective

Add a system parameter `ParamSet` to be used as container for conflicting parameters.

## Solution

Added two methods to the SystemParamState trait, which gives the access used by the parameter. Did the implementation. Added some convenience methods to FilteredAccessSet. Changed `get_conflicts` to return every conflicting component instead of breaking on the first conflicting `FilteredAccess`.

Co-authored-by: bilsen <40690317+bilsen@users.noreply.github.com>

# Objective

- Reduce time spent in the `check_visibility` system

## Solution

- Use `Vec3A` for all bounding volume types to leverage SIMD optimisations and to avoid repeated runtime conversions from `Vec3` to `Vec3A`

- Inline all bounding volume intersection methods

- Add on-the-fly calculated `Aabb` -> `Sphere` and do `Sphere`-`Frustum` intersection tests before `Aabb`-`Frustum` tests. This is faster for `many_cubes` but could be slower in other cases where the sphere test gives a false-positive that the `Aabb` test discards. Also, I tested precalculating the `Sphere`s and inserting them alongside the `Aabb` but this was slower.

- Do not test meshes against the far plane. Apparently games don't do this anymore with infinite projections, and it's one fewer plane to test against. I made it optional and still do the test for culling lights but that is up for discussion.

- These collectively reduce `check_visibility` execution time in `many_cubes -- sphere` from 2.76ms to 1.48ms and increase frame rate from ~42fps to ~44fps

# Objective

- Hierarchy tools are not just used for `Transform`: they are also used for scenes.

- In the future there's interest in using them for other features, such as visiibility inheritance.

- The fact that these tools are found in `bevy_transform` causes a great deal of user and developer confusion

- Fixes#2758.

## Solution

- Split `bevy_transform` into two!

- Make everything work again.

Note that this is a very tightly scoped PR: I *know* there are code quality and docs issues that existed in bevy_transform that I've just moved around. We should fix those in a seperate PR and try to merge this ASAP to reduce the bitrot involved in splitting an entire crate.

## Frustrations

The API around `GlobalTransform` is a mess: we have massive code and docs duplication, no link between the two types and no clear way to extend this to other forms of inheritance.

In the medium-term, I feel pretty strongly that `GlobalTransform` should be replaced by something like `Inherited<Transform>`, which lives in `bevy_hierarchy`:

- avoids code duplication

- makes the inheritance pattern extensible

- links the types at the type-level

- allows us to remove all references to inheritance from `bevy_transform`, making it more useful as a standalone crate and cleaning up its docs

## Additional context

- double-blessed by @cart in https://github.com/bevyengine/bevy/issues/4141#issuecomment-1063592414 and https://github.com/bevyengine/bevy/issues/2758#issuecomment-913810963

- preparation for more advanced / cleaner hierarchy tools: go read https://github.com/bevyengine/rfcs/pull/53 !

- originally attempted by @finegeometer in #2789. It was a great idea, just needed more discussion!

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

- Allow opting-out of the built-in frustum culling for cases where its behaviour would be incorrect

- Make use of the this in the shader_instancing example that uses a custom instancing method. The built-in frustum culling breaks the custom instancing in the shader_instancing example if the camera is moved to:

```rust

commands.spawn_bundle(PerspectiveCameraBundle {

transform: Transform::from_xyz(12.0, 0.0, 15.0)

.looking_at(Vec3::new(12.0, 0.0, 0.0), Vec3::Y),

..Default::default()

});

```

...such that the Aabb of the cube Mesh that is at the origin goes completely out of view. This incorrectly (for the purpose of the custom instancing) culls the `Mesh` and so culls all instances even though some may be visible.

## Solution

- Add a `NoFrustumCulling` marker component

- Do not compute and add an `Aabb` to `Mesh` entities without an `Aabb` if they have a `NoFrustumCulling` marker component

- Do not apply frustum culling to entities with the `NoFrustumCulling` marker component

# Objective

The current 2d rendering is specialized to render sprites, we need a generic way to render 2d items, using meshes and materials like we have for 3d.

## Solution

I cloned a good part of `bevy_pbr` into `bevy_sprite/src/mesh2d`, removed lighting and pbr itself, adapted it to 2d rendering, added a `ColorMaterial`, and modified the sprite rendering to break batches around 2d meshes.

~~The PR is a bit crude; I tried to change as little as I could in both the parts copied from 3d and the current sprite rendering to make reviewing easier. In the future, I expect we could make the sprite rendering a normal 2d material, cleanly integrated with the rest.~~ _edit: see <https://github.com/bevyengine/bevy/pull/3460#issuecomment-1003605194>_

## Remaining work

- ~~don't require mesh normals~~ _out of scope_

- ~~add an example~~ _done_

- support 2d meshes & materials in the UI?

- bikeshed names (I didn't think hard about naming, please check if it's fine)

## Remaining questions

- ~~should we add a depth buffer to 2d now that there are 2d meshes?~~ _let's revisit that when we have an opaque render phase_

- ~~should we add MSAA support to the sprites, or remove it from the 2d meshes?~~ _I added MSAA to sprites since it's really needed for 2d meshes_

- ~~how to customize vertex attributes?~~ _#3120_

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

Fixes#3422

## Solution

Adds the existing `Visibility` component to UI bundles and checks for it in the extract phase of the render app.

The `ComputedVisibility` component was not added. I don't think the UI camera needs frustum culling, but having `RenderLayers` work may be desirable. However I think we would need to change `check_visibility()` to differentiate between 2d, 3d and UI entities.

This makes the [New Bevy Renderer](#2535) the default (and only) renderer. The new renderer isn't _quite_ ready for the final release yet, but I want as many people as possible to start testing it so we can identify bugs and address feedback prior to release.

The examples are all ported over and operational with a few exceptions:

* I removed a good portion of the examples in the `shader` folder. We still have some work to do in order to make these examples possible / ergonomic / worthwhile: #3120 and "high level shader material plugins" are the big ones. This is a temporary measure.

* Temporarily removed the multiple_windows example: doing this properly in the new renderer will require the upcoming "render targets" changes. Same goes for the render_to_texture example.

* Removed z_sort_debug: entity visibility sort info is no longer available in app logic. we could do this on the "render app" side, but i dont consider it a priority.