# Objective

* Remove all uses of `render_resource_wrapper`.
* Make it easier to share a `wgpu::Device` between Bevy and application code.

## Solution

Removed the `render_resource_wrapper` macro.

To improve the `RenderCreation::Manual` API, `ErasedRenderDevice` was replaced by `Arc`. Unfortunately, I had to introduce one more usage of `WgpuWrapper`, which seems like an unwanted constraint on the caller.
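A minimal sketch of why an `Arc` makes sharing easier: the application keeps one handle and passes a clone to the engine, so both sides use the same device without either owning it exclusively. `Device`, `RenderCreation`, and `engine_device` below are hypothetical stand-ins, not Bevy's or `wgpu`'s actual definitions; only the ownership pattern is illustrated.

```rust
use std::sync::Arc;

/// Hypothetical stand-in for `wgpu::Device`; the real type is not used here.
#[derive(Debug)]
pub struct Device {
    pub label: String,
}

/// Hypothetical, simplified render-creation setting (not Bevy's real enum).
pub enum RenderCreation {
    /// The application created the device and passes in a shared handle.
    Manual(Arc<Device>),
    /// The engine creates and owns its own device.
    Automatic,
}

/// "Engine" side: resolves the setting to a shared device handle.
pub fn engine_device(creation: RenderCreation) -> Arc<Device> {
    match creation {
        RenderCreation::Manual(device) => device,
        RenderCreation::Automatic => Arc::new(Device {
            label: "engine-owned".to_string(),
        }),
    }
}

fn main() {
    let app_device = Arc::new(Device { label: "app-owned".to_string() });
    // Cloning an `Arc` only bumps a reference count; both sides use the same device.
    let engine = engine_device(RenderCreation::Manual(Arc::clone(&app_device)));
    assert!(Arc::ptr_eq(&app_device, &engine));
    assert_eq!(Arc::strong_count(&app_device), 2);

    let fallback = engine_device(RenderCreation::Automatic);
    println!("{} / {}", engine.label, fallback.label);
}
```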

## Testing

- Did you test these changes? If so, how?
  - Ran `cargo test`.
  - Ran a few examples.
  - Used `RenderCreation::Manual` in my own project.
  - Exercised `RenderCreation::Automatic` through examples.
- Are there any parts that need more testing?
  - No.
- How can other people (reviewers) test your changes? Is there anything specific they need to know?
  - Run examples.
  - Use `RenderCreation::Manual` in their own project.

# Objective

- Fixes #6370
- Closes #6581

## Solution

- Added the following lints to the workspace:
  - `std_instead_of_core`
  - `std_instead_of_alloc`
  - `alloc_instead_of_core`
- Used `cargo +nightly fmt` with [item level use formatting](https://rust-lang.github.io/rustfmt/?version=v1.6.0&search=#Item%5C%3A) to split all `use` statements into single items.
- Used `cargo clippy --workspace --all-targets --all-features --fix --allow-dirty` to _attempt_ to resolve the new linting issues, and intervened where the lint was unable to resolve the issue automatically (usually due to needing an `extern crate alloc;` statement in a crate root).
- Manually removed certain uses of `std` where negative feature gating prevented `--all-features` from finding the offending uses.
- Used `cargo +nightly fmt` with [crate level use formatting](https://rust-lang.github.io/rustfmt/?version=v1.6.0&search=#Crate%5C%3A) to re-merge all `use` statements to match Bevy's previous styling.
- Manually fixed cases where the `fmt` tool could not re-merge `use` statements due to conditional compilation attributes.
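A small sketch of the import style these lints push towards: items that need no allocation come from `core`, heap types come from `alloc`, and `alloc` has to be named with `extern crate alloc;` before it can be imported. The `Names` type is hypothetical and only exists to use the imports.

```rust
// Needed before `alloc` can be named in a `use` path; `std` crates still link it.
extern crate alloc;

use alloc::{string::String, vec::Vec}; // heap-allocated types live in `alloc`
use core::fmt; // formatting needs no allocator, so it comes from `core`

/// Hypothetical type used only to exercise the imports above.
pub struct Names(pub Vec<String>);

impl fmt::Display for Names {
    fn fmt(&self, f: &mut fmt::Formatter<'_>) -> fmt::Result {
        // Joins the names with ", " and writes them out.
        write!(f, "{}", self.0.join(", "))
    }
}

fn main() {
    let names = Names(alloc::vec!["bevy".into(), "wgpu".into()]);
    println!("{names}");
}
```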

## Testing

- Ran CI locally

## Migration Guide

The MSRV is now 1.81. Please update to this version or higher.

## Notes

- This is a _massive_ change to try and push through, which is why I've outlined the semi-automatic steps I used to create this PR, in case this fails and someone else tries again in the future.
- Making this change has no impact on user code, but does mean Bevy contributors will be warned to use `core` and `alloc` instead of `std` where possible.
- This lint is a critical first step towards investigating `no_std` options for Bevy.

---------

Co-authored-by: François Mockers <francois.mockers@vleue.com>

# Objective

- Fixes #14974

## Solution

- Replace all* instances of `NonZero*` with `NonZero<*>`
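For context, a short sketch of what the replacement means: since Rust 1.79, `NonZeroU32` and the other per-primitive names are type aliases for the generic `core::num::NonZero<T>`, so the two spellings name the same type and keep the same zero niche.

```rust
use core::num::{NonZero, NonZeroU32};

fn main() {
    // Old spelling: a per-primitive type alias.
    let old: NonZeroU32 = NonZeroU32::new(7).expect("7 is non-zero");
    // New spelling: the generic `NonZero<T>`.
    let new: NonZero<u32> = NonZero::new(7).expect("7 is non-zero");

    // Same type, so they compare directly.
    assert_eq!(old, new);
    assert!(NonZero::<u32>::new(0).is_none());

    // The zero niche means `Option<NonZero<u32>>` is still 4 bytes.
    assert_eq!(core::mem::size_of::<Option<NonZero<u32>>>(), 4);
    println!("{}", new.get());
}
```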

## Testing

- CI passed locally.

---

## Notes

Within the `bevy_reflect` implementations for `std` types, `impl_reflect_value!()` will continue to use the type aliases instead, as it inappropriately parses the concrete type parameter as a generic argument. If the `ZeroablePrimitive` trait were stable, or the macro could be modified to accept a finite list of types, then we could fully migrate.

Today, we sort all entities added to all phases, even the phases that don't strictly need sorting, such as the opaque and shadow phases. This results in a performance loss because our `PhaseItem`s are rather large in memory, so sorting is slow. Additionally, determining the boundaries of batches is an O(n) process.

This commit instead makes Bevy place phase items, where applicable, into *bins* keyed by *bin keys*, which have the invariant that everything in the same bin is potentially batchable. This makes determining batch boundaries O(1), because everything in the same bin can be batched.

Instead of sorting each entity, we now sort only the bin keys. This drops the sorting time to near-zero on workloads with few bins like `many_cubes --no-frustum-culling`. Memory usage is improved too, with batch boundaries and dynamic indices now implicit instead of explicit.
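A minimal sketch of the binning idea using only `std`. `BinKey`, `Entity`, and `bin_items` are hypothetical simplifications, not Bevy's actual render-phase types: items are grouped by key in a `HashMap`, and only the distinct keys are sorted, so each bin is one batch whose boundaries are known without comparing neighbouring items.

```rust
use std::collections::HashMap;

/// Hypothetical bin key: everything with the same key is assumed batchable
/// (for example, same pipeline and same mesh).
#[derive(Clone, Copy, Debug, PartialEq, Eq, Hash, PartialOrd, Ord)]
struct BinKey {
    pipeline: u32,
    mesh: u32,
}

/// Hypothetical stand-in for an entity ID.
type Entity = u32;

/// Groups entities into bins, then sorts only the distinct keys.
fn bin_items(items: &[(BinKey, Entity)]) -> Vec<(BinKey, Vec<Entity>)> {
    let mut bins: HashMap<BinKey, Vec<Entity>> = HashMap::new();
    for &(key, entity) in items {
        bins.entry(key).or_default().push(entity);
    }
    let mut keys: Vec<BinKey> = bins.keys().copied().collect();
    // Cost depends on the number of bins, not the number of items.
    keys.sort_unstable();
    keys.into_iter()
        .map(|key| {
            let entities = bins.remove(&key).expect("key came from this map");
            (key, entities)
        })
        .collect()
}

fn main() {
    let a = BinKey { pipeline: 1, mesh: 10 };
    let b = BinKey { pipeline: 0, mesh: 20 };
    let items = [(a, 1), (b, 2), (a, 3), (b, 4), (a, 5)];
    // Each bin is one batch; no per-item boundary scan is needed.
    for (key, entities) in bin_items(&items) {
        println!("{key:?}: batch of {} -> {entities:?}", entities.len());
    }
}
```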

The improved memory usage results in a significant win even on unbatchable workloads like `many_cubes --no-frustum-culling --vary-material-data-per-instance`, presumably due to cache effects.

Not all phases can be binned; some, such as transparent and transmissive phases, must still be sorted. To handle this, this commit splits `PhaseItem` into `BinnedPhaseItem` and `SortedPhaseItem`. Most of the logic that today deals with `PhaseItem`s has been moved to `SortedPhaseItem`. `BinnedPhaseItem` has the new logic.
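A simplified, hypothetical illustration of the split: a sorted item exposes an ordering key, while a binned item only exposes a grouping key. Bevy's real `SortedPhaseItem` and `BinnedPhaseItem` traits have more methods and different signatures; the integer `depth` field is also an assumption made to keep the key `Ord`.

```rust
use std::cmp::Reverse;

/// Hypothetical, simplified sorted-phase trait: draw order matters.
trait SortedPhaseItem {
    type SortKey: Ord;
    fn sort_key(&self) -> Self::SortKey;
}

/// Hypothetical, simplified binned-phase trait: only grouping matters.
trait BinnedPhaseItem {
    type BinKey: Ord + Clone;
    fn bin_key(&self) -> Self::BinKey;
}

/// Transparent items blend, so they must be drawn back to front.
struct Transparent {
    entity: u32,
    depth: u32, // hypothetical integer depth, larger = farther
}

impl SortedPhaseItem for Transparent {
    // `Reverse` puts the farthest item first.
    type SortKey = Reverse<u32>;
    fn sort_key(&self) -> Reverse<u32> {
        Reverse(self.depth)
    }
}

/// Opaque items can be drawn in any order within a pipeline.
struct Opaque {
    entity: u32,
    pipeline: u32,
}

impl BinnedPhaseItem for Opaque {
    type BinKey = u32;
    fn bin_key(&self) -> u32 {
        self.pipeline
    }
}

/// Sorting is only needed for phases made of sorted items.
fn sort_phase<I: SortedPhaseItem>(items: &mut [I]) {
    items.sort_by_key(|item| item.sort_key());
}

fn main() {
    let mut transparent = vec![
        Transparent { entity: 1, depth: 5 },
        Transparent { entity: 2, depth: 9 },
    ];
    sort_phase(&mut transparent);
    let order: Vec<u32> = transparent.iter().map(|t| t.entity).collect();
    assert_eq!(order, [2, 1]);

    let opaque = Opaque { entity: 3, pipeline: 7 };
    println!("opaque entity {} goes in bin {}", opaque.entity, opaque.bin_key());
}
```

Choosing the sorted trait keeps behaviour closest to the old `PhaseItem`; the binned trait trades ordering for cheaper batching.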

Frame time results (in ms/frame) are as follows:

| Benchmark              | `binning` | `main`  | Speedup |
| ---------------------- | --------- | ------- | ------- |
| `many_cubes -nfc -vpi` | 232.179   | 312.123 | 34.43%  |
| `many_cubes -nfc`      | 25.874    | 30.117  | 16.40%  |
| `many_foxes`           | 3.276     | 3.515   | 7.30%   |

(`-nfc` is short for `--no-frustum-culling`; `-vpi` is short for `--vary-material-data-per-instance`.)
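The Speedup column matches `main / binning - 1` expressed as a percentage, rather than the reduction in frame time (`1 - binning / main`, which would give about 25.6% for the first row). The helper below recomputes the column from the table's frame times; `speedup_percent` is a hypothetical name.

```rust
/// Speedup of `binning` relative to `main`, as a percentage:
/// (main_ms / binning_ms - 1) * 100.
fn speedup_percent(binning_ms: f64, main_ms: f64) -> f64 {
    (main_ms / binning_ms - 1.0) * 100.0
}

fn main() {
    // Frame times in ms/frame, copied from the table above.
    let rows = [
        ("many_cubes -nfc -vpi", 232.179, 312.123),
        ("many_cubes -nfc", 25.874, 30.117),
        ("many_foxes", 3.276, 3.515),
    ];
    for (name, binning_ms, main_ms) in rows {
        println!("{name}: {:.2}%", speedup_percent(binning_ms, main_ms));
    }
}
```

Running it prints 34.43%, 16.40%, and 7.30%, matching the table.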

---

## Changelog

### Changed

* Render phases have been split into binned and sorted phases. Binned phases, such as the common opaque phase, achieve improved CPU performance by avoiding the sorting step.

## Migration Guide

- `PhaseItem` has been split into `BinnedPhaseItem` and `SortedPhaseItem`. If your code has custom `PhaseItem`s, you will need to migrate them to one of these two types. `SortedPhaseItem` requires the fewest code changes, but you may want to pick `BinnedPhaseItem` if your phase doesn't require sorting, as that enables higher performance.

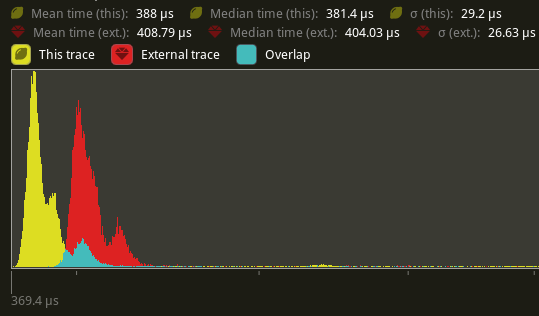

## Tracy graphs

`many_cubes --no-frustum-culling`, `main` branch:

<img width="1064" alt="Screenshot 2024-03-12 180037" src="https://github.com/bevyengine/bevy/assets/157897/e1180ce8-8e89-46d2-85e3-f59f72109a55">

`many_cubes --no-frustum-culling`, this branch:

<img width="1064" alt="Screenshot 2024-03-12 180011" src="https://github.com/bevyengine/bevy/assets/157897/0899f036-6075-44c5-a972-44d95895f46c">

You can see that `batch_and_prepare_binned_render_phase` is a much smaller fraction of the time. Zooming in on that function, with yellow being this branch and red being `main`, we see:

<img width="1064" alt="Screenshot 2024-03-12 175832" src="https://github.com/bevyengine/bevy/assets/157897/0dfc8d3f-49f4-496e-8825-a66e64d356d0">

The binning happens in `queue_material_meshes`. Again, with yellow being this branch and red being `main`:

<img width="1064" alt="Screenshot 2024-03-12 175755" src="https://github.com/bevyengine/bevy/assets/157897/b9b20dc1-11c8-400c-a6cc-1c2e09c1bb96">

We can see that there is a small regression in `queue_material_meshes` performance, but it's not nearly enough to outweigh the large gains in `batch_and_prepare_binned_render_phase`.

---------

Co-authored-by: James Liu <contact@jamessliu.com>

# Objective

This gets Bevy building on Wasm when the `atomics` flag is enabled. This does not yet multithread Bevy itself, but it allows Bevy users to use a crate like `wasm_thread` to spawn their own threads and manually parallelize work. This is a first step towards resolving #4078. Also fixes #9304.

This provides a foothold so that Bevy contributors can begin to think about multithreaded Wasm's constraints and Bevy can work towards changes to get the engine itself multithreaded.

Some flags need to be set on the Rust compiler when compiling for Wasm multithreading. Here's what my build script looks like, with the correct flags set, to test out Bevy examples on web:

```bash
set -e

# `-Z build-std` is an unstable flag, so this needs a nightly toolchain.
RUSTFLAGS='-C target-feature=+atomics,+bulk-memory,+mutable-globals' \
  cargo build --example breakout --target wasm32-unknown-unknown -Z build-std=std,panic_abort --release

wasm-bindgen --out-name wasm_example \
  --out-dir examples/wasm/target \
  --target web target/wasm32-unknown-unknown/release/examples/breakout.wasm

# Browsers only expose `SharedArrayBuffer` on cross-origin isolated pages.
devserver --header Cross-Origin-Opener-Policy='same-origin' --header Cross-Origin-Embedder-Policy='require-corp' --path examples/wasm
```

A few notes:

1. `cpal` crashes immediately when the `atomics` flag is set. That is patched in https://github.com/RustAudio/cpal/pull/837, but not yet in the latest crates.io release.

   That can be temporarily worked around by patching `cpal` like so:

   ```toml
   [patch.crates-io]
   cpal = { git = "https://github.com/RustAudio/cpal" }
   ```

2. When testing out `wasm_thread`, you need to enable the `es_modules` feature.

## Solution

The largest obstacle to compiling Bevy with `atomics` on web is that `wgpu` types are _not_ `Send` and `Sync`. Longer term, Bevy will need an approach to handle that, but in the near term Bevy is already configured to be single-threaded on web.

Therefore, it is enough to wrap `wgpu` types in a `send_wrapper::SendWrapper` that _is_ `Send`/`Sync`, but panics if accessed off the `wgpu` thread.
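A minimal, `std`-only sketch of the wrapping technique described above: the wrapper records the thread that created it, claims `Send`/`Sync`, and checks the current thread on every access and on drop. `ThreadBound` is a hypothetical hand-rolled type, not the `send_wrapper` crate's API, and `Rc` stands in for a `wgpu` type that is neither `Send` nor `Sync`.

```rust
use std::mem::ManuallyDrop;
use std::ops::Deref;
use std::rc::Rc;
use std::thread::{self, ThreadId};

/// Hypothetical wrapper that pins a value to the thread that created it.
pub struct ThreadBound<T> {
    value: ManuallyDrop<T>,
    owner: ThreadId,
}

impl<T> ThreadBound<T> {
    pub fn new(value: T) -> Self {
        Self { value: ManuallyDrop::new(value), owner: thread::current().id() }
    }

    /// Whether the current thread is allowed to touch the value.
    pub fn is_valid(&self) -> bool {
        thread::current().id() == self.owner
    }
}

impl<T> Deref for ThreadBound<T> {
    type Target = T;
    fn deref(&self) -> &T {
        assert!(self.is_valid(), "ThreadBound value accessed off its owning thread");
        &self.value
    }
}

impl<T> Drop for ThreadBound<T> {
    fn drop(&mut self) {
        if self.is_valid() {
            // SAFETY: `value` is dropped exactly once, on its owning thread.
            unsafe { ManuallyDrop::drop(&mut self.value) }
        } else if !thread::panicking() {
            panic!("ThreadBound value dropped off its owning thread");
        }
        // If already panicking off-thread, the value is leaked instead.
    }
}

// SAFETY: every access to `value` (`Deref` and `Drop`) is checked against `owner`.
unsafe impl<T> Send for ThreadBound<T> {}
// SAFETY: as above; other threads cannot obtain `&T`.
unsafe impl<T> Sync for ThreadBound<T> {}

fn main() {
    let wrapped = ThreadBound::new(Rc::new(42));
    println!("on owner thread: {}", **wrapped);

    thread::scope(|s| {
        // Sharing the wrapper compiles, but other threads may not use the value.
        let valid_elsewhere = s.spawn(|| wrapped.is_valid()).join().unwrap();
        assert!(!valid_elsewhere);
    });
}
```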

---

## Changelog

- `wgpu` types that are not `Send` are wrapped in `send_wrapper::SendWrapper` on Wasm + `atomics`
- CommandBuffers are not generated in parallel on Wasm + `atomics`
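A hedged sketch of how the second changelog item can be gated at compile time: `cfg!(all(target_arch = "wasm32", target_feature = "atomics"))` is evaluated by the compiler, and the parallel path is skipped in that configuration. `record_in_parallel` and `record_all` are hypothetical names, and `std::thread::scope` stands in for whatever task pool Bevy actually uses.

```rust
use std::thread;

/// True when compiling for Wasm with the `atomics` target feature enabled.
const WASM_ATOMICS: bool = cfg!(all(target_arch = "wasm32", target_feature = "atomics"));

/// Hypothetical helper: only record work in parallel when the wrapped
/// `wgpu` types are not pinned to a single thread.
fn record_in_parallel() -> bool {
    !WASM_ATOMICS
}

/// Hypothetical stand-in for recording one command buffer per render pass.
fn record_all(passes: &[&str]) -> Vec<String> {
    let record = |name: &str| format!("commands for {name}");
    if record_in_parallel() {
        thread::scope(|s| {
            let handles: Vec<_> = passes
                .iter()
                .map(|&pass| s.spawn(move || record(pass)))
                .collect();
            // Joining in spawn order keeps the output order deterministic.
            handles.into_iter().map(|h| h.join().unwrap()).collect()
        })
    } else {
        passes.iter().map(|&pass| record(pass)).collect()
    }
}

fn main() {
    println!("parallel: {}", record_in_parallel());
    println!("{:?}", record_all(&["shadow", "opaque", "transparent"]));
}
```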

## Questions

- Bevy should probably add CI checks to make sure this doesn't regress. Should that go in this PR or a separate PR? **Edit:** Added checks to build Wasm with atomics.

---------

Co-authored-by: François <mockersf@gmail.com>

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: daxpedda <daxpedda@gmail.com>

Co-authored-by: François <francois.mockers@vleue.com>

# Objective

Enables warning on `clippy::undocumented_unsafe_blocks` across the workspace rather than only in `bevy_ecs`, `bevy_transform` and `bevy_utils`. This adds a little awkwardness in a few areas of code that have trivial safety or that explain safety for multiple unsafe blocks with one comment; however, it automatically prevents these comments from being missed.

## Solution

This adds `undocumented_unsafe_blocks = "warn"` to the workspace `Cargo.toml` and fixes / adds a few missed safety comments. I also added `#[allow(clippy::undocumented_unsafe_blocks)]` where the safety is explained somewhere above.

There are a couple of safety comments I added that I'm not 100% sure about in `bevy_animation` and `bevy_render/src/view`, and I'm not sure about the use of `#[allow(clippy::undocumented_unsafe_blocks)]` compared to adding comments like `// SAFETY: See above`.
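For reference, the lint asks for a `// SAFETY:` comment directly above each `unsafe` block. A small sketch, enabling the lint on a single item rather than through the workspace `Cargo.toml`; `first_unchecked` is a hypothetical function chosen only because its safety argument fits in one line.

```rust
/// Returns the first element without a bounds check when the slice is non-empty.
#[warn(clippy::undocumented_unsafe_blocks)]
fn first_unchecked(values: &[u32]) -> Option<u32> {
    if values.is_empty() {
        return None;
    }
    // SAFETY: `values` is non-empty, so index 0 is in bounds.
    Some(unsafe { *values.get_unchecked(0) })
}

fn main() {
    println!("{:?}", first_unchecked(&[3, 1, 2]));
}
```

Removing the `// SAFETY:` line makes `cargo clippy` report the block, which is what keeps such comments from being missed.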

# Objective

[As noted](https://github.com/bevyengine/bevy/pull/5950#discussion_r1080762807) by James, transmuting `Arc`s may be UB.

We now store a `*const ()` pointer internally, and only rely on `ptr.cast::<()>().cast::<T>() == ptr`.

As a happy side effect, this removes the need for boxing the value, so TODO: potentially use this for release mode as well.
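A minimal sketch of the approach described above, assuming a hypothetical `ErasedArc` type rather than Bevy's actual wrapper: the `Arc<T>` is turned into a raw pointer with `Arc::into_raw`, stored as `*const ()`, and cast back to `*const T` when needed. Nothing is transmuted and nothing is boxed; a monomorphized function pointer remembers how to release the original `Arc<T>`.

```rust
use std::sync::Arc;

/// Hypothetical type-erased `Arc` handle.
struct ErasedArc {
    ptr: *const (),
    // Releases the original `Arc<T>`; monomorphized for the concrete `T`.
    drop_fn: unsafe fn(*const ()),
}

unsafe fn drop_arc<T>(ptr: *const ()) {
    // SAFETY: `ptr` came from `Arc::<T>::into_raw` and is released exactly once.
    drop(unsafe { Arc::from_raw(ptr.cast::<T>()) });
}

impl ErasedArc {
    fn new<T: 'static>(value: Arc<T>) -> Self {
        Self { ptr: Arc::into_raw(value).cast::<()>(), drop_fn: drop_arc::<T> }
    }

    /// # Safety
    /// `T` must be the type this handle was created with.
    unsafe fn downcast_ref<T>(&self) -> &T {
        // Relies only on `ptr.cast::<()>().cast::<T>() == ptr`.
        unsafe { &*self.ptr.cast::<T>() }
    }
}

impl Drop for ErasedArc {
    fn drop(&mut self) {
        // SAFETY: `drop_fn` was created for the same `T` as `ptr`.
        unsafe { (self.drop_fn)(self.ptr) }
    }
}

fn main() {
    let shared = Arc::new(String::from("bind group"));
    let erased = ErasedArc::new(Arc::clone(&shared));
    // SAFETY: `erased` was created from an `Arc<String>`.
    let value: &String = unsafe { erased.downcast_ref::<String>() };
    assert_eq!(value, "bind group");
    assert_eq!(Arc::strong_count(&shared), 2);
    drop(erased);
    assert_eq!(Arc::strong_count(&shared), 1);
}
```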

# Objective

Speed up the render phase for rendering.

## Solution

- Follow up #6988 and make the internals of atomic IDs `NonZeroU32`. This niches the `Option`s of the IDs in draw state, which reduces the size and branching behavior when evaluating for equality.

- Require `&RenderDevice` to get the device's `Limits` when initializing a `TrackedRenderPass` to preallocate the bind groups and vertex buffer state in `DrawState`, which removes the branch on needing to resize those `Vec`s.
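A simplified sketch of both bullets, using hypothetical `BindGroupId` and `DrawState` types and a hypothetical `max_bind_groups` limit (not Bevy's real definitions): the `NonZeroU32`-backed ID keeps `Option<BindGroupId>` at 4 bytes, and slots are sized once from the limit so setting a bind group never grows the `Vec`.

```rust
use std::num::NonZeroU32;

/// Hypothetical resource ID; `repr(transparent)` over `NonZeroU32`
/// guarantees `Option<BindGroupId>` uses the zero niche.
#[repr(transparent)]
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
struct BindGroupId(NonZeroU32);

/// Hypothetical, simplified draw state with slots sized from a device limit.
struct DrawState {
    bind_groups: Vec<Option<BindGroupId>>,
}

impl DrawState {
    fn new(max_bind_groups: usize) -> Self {
        Self { bind_groups: vec![None; max_bind_groups] }
    }

    /// Returns `true` if the bound group changed (so a GPU call is needed).
    fn set_bind_group(&mut self, index: usize, id: BindGroupId) -> bool {
        let slot = &mut self.bind_groups[index];
        if *slot == Some(id) {
            return false;
        }
        *slot = Some(id);
        true
    }
}

fn main() {
    assert_eq!(std::mem::size_of::<Option<BindGroupId>>(), 4);

    let mut state = DrawState::new(4); // hypothetical limit of 4 bind groups
    let id = BindGroupId(NonZeroU32::new(1).unwrap());
    assert!(state.set_bind_group(0, id));
    assert!(!state.set_bind_group(0, id)); // redundant bind is skipped
}
```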

## Performance

This produces a speedup similar to that of #6885. This shows an approximately 6% speedup in `main_opaque_pass_3d` on `many_foxes` (408.79 µs -> 388 µs). This should be orthogonal to the gains seen there.

---

## Changelog

Added: `RenderContext::begin_tracked_render_pass`.

Changed: `TrackedRenderPass` now requires a `&RenderDevice` on construction.

Removed: `bevy_render::render_phase::DrawState`. It was not usable in any form outside of `bevy_render`.

## Migration Guide

TODO

# Objective

- alternative to #2895
- as mentioned in #2535, the UUID-based IDs in the render module should be replaced with atomic-counted ones

## Solution

- instead of generating a random UUID for each render resource, this implementation increments an atomic counter
- this might be replaced by the IDs of `wgpu` if they expose them directly in the future
- I have not benchmarked this solution yet, but this should be slightly faster in theory.
- Bevymark does not seem to be affected much by this change, which is to be expected.
- None of our API has changed, other than that the IDs have lost their IMO rather insignificant documentation.
- Maybe the documentation could be added back into the macro, but this would complicate the code.
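A minimal sketch of an atomic-counter ID in place of a random UUID, assuming a hypothetical `BufferId` type (the PR generates its IDs through a macro whose exact output is not shown here). `fetch_add` is a single atomic read-modify-write, so every caller gets a distinct value, and `Relaxed` ordering suffices because only uniqueness matters, not ordering relative to other memory.

```rust
use std::collections::HashSet;
use std::sync::atomic::{AtomicUsize, Ordering};
use std::thread;

/// Hypothetical render-resource ID backed by a global counter.
#[derive(Clone, Copy, Debug, PartialEq, Eq, Hash)]
struct BufferId(usize);

impl BufferId {
    fn new() -> Self {
        static COUNTER: AtomicUsize = AtomicUsize::new(0);
        // Each call gets the previous value; no random number generator involved.
        Self(COUNTER.fetch_add(1, Ordering::Relaxed))
    }
}

fn main() {
    // IDs created concurrently from several threads are still unique.
    let ids: Vec<BufferId> = thread::scope(|s| {
        let handles: Vec<_> = (0..4)
            .map(|_| s.spawn(|| (0..100).map(|_| BufferId::new()).collect::<Vec<_>>()))
            .collect();
        handles.into_iter().flat_map(|h| h.join().unwrap()).collect()
    });
    let unique: HashSet<BufferId> = ids.iter().copied().collect();
    assert_eq!(unique.len(), ids.len());
    println!("{} unique IDs", unique.len());
}
```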