The goal of `bevy_platform_support` is to provide a set of platform

agnostic APIs, alongside platform-specific functionality. This is a high

traffic crate (providing things like HashMap and Instant). Especially in

light of https://github.com/bevyengine/bevy/discussions/18799, it

deserves a friendlier / shorter name.

Given that it hasn't had a full release yet, getting this change in

before Bevy 0.16 makes sense.

- Rename `bevy_platform_support` to `bevy_platform`.

Fixes #17591

Looking at the arm downloads page, "r48p0" is a version number that

increments, where rXX is the major version and pX seems to be a patch

version. Take the conservative approach here that we know gpu

preprocessing is working on at least version 48 and presumably higher.

The assumption here is that the driver_info string will be reported

similarly on non-pixel devices.
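As a rough illustration of the version check described above, here is a minimal sketch. The helper names `parse_driver_version` and `supports_gpu_preprocessing` are hypothetical, and it assumes the `driver_info` string contains a token shaped like `r48p0`; it is not the code from the patch itself.

```rust
/// Hypothetical helper: finds the first token shaped like `r<major>p<patch>`
/// and returns `(major, patch)`. The surrounding string format is an assumption.
fn parse_driver_version(driver_info: &str) -> Option<(u32, u32)> {
    driver_info
        .split(|c: char| !c.is_ascii_alphanumeric())
        .find_map(|token| {
            let rest = token.strip_prefix('r')?;
            let (major, patch) = rest.split_once('p')?;
            Some((major.parse::<u32>().ok()?, patch.parse::<u32>().ok()?))
        })
}

/// Conservative check: only major version 48 and above is treated as working.
/// Strings that cannot be parsed are treated as unsupported.
fn supports_gpu_preprocessing(driver_info: &str) -> bool {
    matches!(parse_driver_version(driver_info), Some((major, _)) if major >= 48)
}
```

Treating unparseable strings as unsupported keeps the check conservative if another device reports `driver_info` differently.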

# Objective

Fixes #16896

Fixes #17737

## Solution

Adds a new render phase, including all the new cold specialization

patterns, for wireframes. There's a *lot* of regrettable duplication

here between 3d/2d.

## Testing

All the examples.

## Migration Guide

- `WireframePlugin` must now be created with

`WireframePlugin::default()`.

## Objective

The `MotionBlur` component exposes renderer internals. Users shouldn't

have to deal with this.

```rust

MotionBlur {

shutter_angle: 1.0,

samples: 2,

#[cfg(all(feature = "webgl2", target_arch = "wasm32", not(feature = "webgpu")))]

_webgl2_padding: Default::default(),

},

```

## Solution

The renderer now uses a separate `MotionBlurUniform` struct for its

internals. `MotionBlur` no longer needs padding.

I was a bit unsure about the name `MotionBlurUniform`. Other modules use

a mix of `Uniform` and `Uniforms`.

## Testing

```

cargo run --example motion_blur

```

Tested on Win10/Nvidia across Vulkan, WebGL/Chrome, WebGPU/Chrome.

# Objective

Unlike for their helper types, the import paths for

`unique_array::UniqueEntityArray`, `unique_slice::UniqueEntitySlice`,

`unique_vec::UniqueEntityVec`, `hash_set::EntityHashSet`,

`hash_map::EntityHashMap`, `index_set::EntityIndexSet`,

`index_map::EntityIndexMap` are quite redundant.

When looking at the structure of `hashbrown`, we can also see that while

both `HashSet` and `HashMap` have their own modules, the main types

themselves are re-exported to the crate level.

## Solution

Re-export the types in their shared `entity` parent module, and simplify

the imports where they're used.
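The re-export pattern can be sketched with plain modules. The `EntityHashSet` below is a stand-in built on `std` with an assumed `u64` id type, not the real Bevy type; only the `pub use` line is the point.

```rust
mod entity {
    pub mod hash_set {
        use std::collections::HashSet;

        /// Stand-in type for illustration; the inner `u64` id is an assumption.
        #[derive(Default)]
        pub struct EntityHashSet(pub HashSet<u64>);
    }

    // Re-export at the parent level so callers can write
    // `entity::EntityHashSet` instead of `entity::hash_set::EntityHashSet`.
    pub use self::hash_set::EntityHashSet;
}

/// Uses the shorter path; both paths name the same type.
fn insert_entity(set: &mut entity::EntityHashSet, id: u64) -> bool {
    set.0.insert(id)
}
```

The longer path keeps working, so helper types that stay in the inner module are unaffected.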

# Objective

Requires are currently more verbose than they need to be. People would

like to define inline component values. Additionally, the current

`#[require(Foo(custom_constructor))]` and `#[require(Foo(|| Foo(10)))]`

syntax doesn't really make sense within the context of the Rust type

system. #18309 was an attempt to improve ergonomics for some cases, but

it came at the cost of even more weirdness / unintuitive behavior. Our

approach as a whole needs a rethink.

## Solution

Rework the `#[require()]` syntax to make more sense. This is a breaking

change, but I think it will make the system easier to learn, while also

improving ergonomics substantially:

```rust

#[derive(Component)]

#[require(

A, // this will use A::default()

B(1), // inline tuple-struct value

C { value: 1 }, // inline named-struct value

D::Variant, // inline enum variant

E::SOME_CONST, // inline associated const

F::new(1), // inline constructor

G = returns_g(), // an expression that returns G

H = SomethingElse::new(), // expression returns SomethingElse, where SomethingElse: Into<H>

)]

struct Foo;

```

## Migration Guide

Custom-constructor requires should use the new expression-style syntax:

```rust

// before

#[derive(Component)]

#[require(A(returns_a))]

struct Foo;

// after

#[derive(Component)]

#[require(A = returns_a())]

struct Foo;

```

Inline-closure-constructor requires should use the inline value syntax

where possible:

```rust

// before

#[derive(Component)]

#[require(A(|| A(10)))]

struct Foo;

// after

#[derive(Component)]

#[require(A(10))]

struct Foo;

```

In cases where that is not possible, use the expression-style syntax:

```rust

// before

#[derive(Component)]

#[require(A(|| A(10)))]

struct Foo;

// after

#[derive(Component)]

#[require(A = A(10))]

struct Foo;

```

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: François Mockers <mockersf@gmail.com>

# Objective

Extract sprites into a `Vec` instead of a `HashMap`.

## Solution

Extract UI nodes into a `Vec` instead of an `EntityHashMap`.

Add an index into the `Vec` to `Transparent2d`.

Compare both the index and render entity in prepare so there aren't any

collisions.
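A minimal sketch of the index-plus-entity check, using stand-in types (`Entity`, `ExtractedSprite`, `PhaseItem` and `lookup` are hypothetical names, not the actual Bevy definitions):

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
struct Entity(u32);

/// Stand-in for an extracted sprite stored in the `Vec`.
#[derive(Debug, PartialEq)]
struct ExtractedSprite {
    render_entity: Entity,
    z_order: i32,
}

/// Stand-in for a phase item that remembers where its sprite was extracted.
struct PhaseItem {
    entity: Entity,
    extracted_index: usize,
}

/// Returns the sprite only if the index is in bounds AND the entity stored
/// at that index matches, so a stale or colliding index cannot pick up
/// someone else's sprite.
fn lookup<'a>(sprites: &'a [ExtractedSprite], item: &PhaseItem) -> Option<&'a ExtractedSprite> {
    sprites
        .get(item.extracted_index)
        .filter(|sprite| sprite.render_entity == item.entity)
}
```

Indexing a `Vec` avoids hashing on every lookup, and the entity comparison covers the case where the index alone would be ambiguous.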

## Showcase

Yellow is this PR, red is main.

```

cargo run --example many_sprites --release --features "trace_tracy"

```

`extract_sprites`

<img width="452" alt="extract_sprites"

src="https://github.com/user-attachments/assets/66c60406-7c2b-4367-907d-4a71d3630296"

/>

`queue_sprites`

<img width="463" alt="queue_sprites"

src="https://github.com/user-attachments/assets/54b903bd-4137-4772-9f87-e10e1e050d69"

/>

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

# Objective

Now that #13432 has been merged, it's important we update our reflected

types to properly opt into this feature. If we do not, then this could

cause issues for users downstream who want to make use of

reflection-based cloning.

## Solution

This PR is broken into 4 commits:

1. Add `#[reflect(Clone)]` on all types marked `#[reflect(opaque)]` that

are also `Clone`. This is mandatory as these types would otherwise cause

the cloning operation to fail for any type that contains it at any

depth.

2. Update the reflection example to suggest adding `#[reflect(Clone)]`

on opaque types.

3. Add `#[reflect(clone)]` attributes on all fields marked

`#[reflect(ignore)]` that are also `Clone`. This prevents the ignored

field from causing the cloning operation to fail.

Note that some of the types that contain these fields are also `Clone`,

and thus can be marked `#[reflect(Clone)]`. This makes the

`#[reflect(clone)]` attribute redundant. However, I think it's safer to

keep it marked in the case that the `Clone` impl/derive is ever removed.

I'm open to removing them, though, if people disagree.

4. Finally, I added `#[reflect(Clone)]` on all types that are also

`Clone`. While not strictly necessary, it enables us to reduce the

generated output since we can just call `Clone::clone` directly instead

of calling `PartialReflect::reflect_clone` on each variant/field. It

also means we benefit from any optimizations or customizations made in

the `Clone` impl, including directly dereferencing `Copy` values and

increasing reference counters.

Along with that change I also took the liberty of adding any missing

registrations that I saw could be applied to the type as well, such as

`Default`, `PartialEq`, and `Hash`. There were hundreds of these to

edit, though, so it's possible I missed quite a few.

That last commit is **_massive_**. There were nearly 700 types to

update. So it's recommended to review the first three before moving onto

that last one.

Additionally, I can break the last commit off into its own PR or into

smaller PRs, but I figured this would be the easiest way of doing it

(and in a timely manner since I unfortunately don't have as much time as

I used to for code contributions).

## Testing

You can test locally with a `cargo check`:

```

cargo check --workspace --all-features

```

Fix https://github.com/bevyengine/bevy/issues/17763.

Attachment info needs to be created outside of the command encoding

task, as it needs to be part of the serial node runners in order to get

the ordering correct.

# Objective

Prevents duplicate implementation between IntoSystemConfigs and

IntoSystemSetConfigs using a generic, adds a NodeType trait for more

config flexibility (opening the door to implement

https://github.com/bevyengine/bevy/issues/14195?).

## Solution

Followed writeup by @ItsDoot:

https://hackmd.io/@doot/rJeefFHc1x

Removes IntoSystemConfigs and IntoSystemSetConfigs, instead using

IntoNodeConfigs with generics.

## Testing

Pending

---

## Showcase

N/A

## Migration Guide

- `SystemSetConfigs` -> `NodeConfigs<InternedSystemSet>`
- `SystemConfigs` -> `NodeConfigs<ScheduleSystem>`
- `IntoSystemSetConfigs` -> `IntoNodeConfigs<InternedSystemSet, M>`
- `IntoSystemConfigs` -> `IntoNodeConfigs<ScheduleSystem, M>`

---------

Co-authored-by: Christian Hughes <9044780+ItsDoot@users.noreply.github.com>

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

# Overview

Fixes https://github.com/bevyengine/bevy/issues/17869.

# Summary

`WGPU_SETTINGS_PRIO` changes various limits on `RenderDevice`. This is

useful to simulate platforms with lower limits.

However, some plugins only check the limits on `RenderAdapter` (the

actual GPU) - these limits are not affected by `WGPU_SETTINGS_PRIO`. So

the plugins try to use features that are unavailable and wgpu says "oh

no". See https://github.com/bevyengine/bevy/issues/17869 for details.

The PR adds various checks on `RenderDevice` limits. This is enough to

get most examples working, but some are not fixed (see below).

# Testing

- Tested native, with and without "WGPU_SETTINGS_PRIO=webgl2". Win10/Vulkan/Nvidia.

- Also WebGL. Win10/Chrome/Nvidia.

```

$env:WGPU_SETTINGS_PRIO = "webgl2"

cargo run --example testbed_3d

cargo run --example testbed_2d

cargo run --example testbed_ui

cargo run --example deferred_rendering

cargo run --example many_lights

cargo run --example order_independent_transparency # Still broken, see below.

cargo run --example occlusion_culling # Still broken, see below.

```

# Not Fixed

While testing I found a few other cases of limits being broken.

"Compatibility" settings (WebGPU minimums) breaks native in various

examples.

```

$env:WGPU_SETTINGS_PRIO = "compatibility"

cargo run --example testbed_3d

In Device::create_bind_group_layout, label = 'build mesh uniforms GPU early occlusion culling bind group layout'

Too many bindings of type StorageBuffers in Stage ShaderStages(COMPUTE), limit is 8, count was 9. Check the limit `max_storage_buffers_per_shader_stage` passed to `Adapter::request_device`

```

`occlusion_culling` breaks fake webgl.

```

$env:WGPU_SETTINGS_PRIO = "webgl2"

cargo run --example occlusion_culling

thread '<unnamed>' panicked at C:\Projects\bevy\crates\bevy_render\src\render_resource\pipeline_cache.rs:555:28:

index out of bounds: the len is 0 but the index is 2

Encountered a panic in system `bevy_render::renderer::render_system`!

```

`occlusion_culling` breaks real webgl.

```

cargo run --example occlusion_culling --target wasm32-unknown-unknown

ERROR app: panicked at C:\Users\T\.cargo\registry\src\index.crates.io-1949cf8c6b5b557f\glow-0.16.0\src\web_sys.rs:4223:9:

Tex storage 2D multisample is not supported

```

OIT breaks fake webgl.

```

$env:WGPU_SETTINGS_PRIO = "webgl2"

cargo run --example order_independent_transparency

In Device::create_bind_group, label = 'mesh_view_bind_group'

Number of bindings in bind group descriptor (28) does not match the number of bindings defined in the bind group layout (25)

```

OIT breaks real webgl.

```

cargo run --example order_independent_transparency --target wasm32-unknown-unknown

In Device::create_render_pipeline, label = 'pbr_oit_mesh_pipeline'

Error matching ShaderStages(FRAGMENT) shader requirements against the pipeline

Shader global ResourceBinding { group: 0, binding: 34 } is not available in the pipeline layout

Binding is missing from the pipeline layout

```

# Objective

Component `require()` IDE integration is fully broken, as of #16575.

## Solution

This reverts us back to the previous "put the docs on Component trait"

impl. This _does_ reduce the accessibility of the required components in

rust docs, but the complete erasure of "required component IDE

experience" is not worth the price of slightly increased prominence of

requires in docs.

Additionally, Rust Analyzer has recently started including derive

attributes in suggestions, so we aren't losing that benefit of the

proc_macro attribute impl.

# Objective

As discussed in #14275, Bevy is currently too prone to panic, and makes

the easy / beginner-friendly way to do a large number of operations just

to panic on failure.

This is seriously frustrating in library code, but also slows down

development, as many of the `Query::single` panics can actually safely

be an early return (these panics are often due to a small ordering issue

or a change in game state).

More critically, in most "finished" products, panics are unacceptable:

any unexpected failures should be handled elsewhere.

With the advent of good system error handling, we can now remove this.

Note: I was instrumental in a) introducing this idea in the first place

and b) pushing to make the panicking variant the default. The

introduction of both `let else` statements in Rust and the fancy system

error handling work in 0.16 have changed my mind on the right balance

here.

## Solution

1. Make `Query::single` and `Query::single_mut` (and other random

related methods) return a `Result`.

2. Handle all of Bevy's internal usage of these APIs.

3. Deprecate `Query::get_single` and friends, since we've moved their

functionality to the nice names.

4. Add detailed advice on how to best handle these errors.

Generally I like the diff here, although `get_single().unwrap()` in

tests is a bit of a downgrade.

## Testing

I've done a global search for `.single` to track down any missed

deprecated usages.

As to whether or not all the migrations were successful, that's what CI

is for :)

## Future work

~~Rename `Query::get_single` and friends to `Query::single`!~~

~~I've opted not to do this in this PR, and smear it across two releases

in order to ease the migration. Successive deprecations are much easier

to manage than the semantics and types shifting under your feet.~~

Cart has convinced me to change my mind on this; see

https://github.com/bevyengine/bevy/pull/18082#discussion_r1974536085.

## Migration guide

`Query::single`, `Query::single_mut` and their `QueryState` equivalents

now return a `Result`. Generally, you'll want to:

1. Use Bevy 0.16's system error handling to return a `Result` using the

`?` operator.

2. Use a `let Ok(data) = ... else` block to early return if it's an expected failure.

3. Use `unwrap()` or `Ok` destructuring inside of tests.

The old `Query::get_single` (etc) methods which did this have been

deprecated.
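The first two patterns can be sketched without Bevy. In the sketch below, `single` is a hypothetical free function standing in for `Query::single`, and `SingleError` is an illustrative error type, not Bevy's.

```rust
#[derive(Debug, PartialEq)]
enum SingleError {
    NoEntities,
    MultipleEntities,
}

/// Hypothetical stand-in for `Query::single`: succeeds only when there is
/// exactly one item.
fn single<T>(items: &[T]) -> Result<&T, SingleError> {
    match items {
        [one] => Ok(one),
        [] => Err(SingleError::NoEntities),
        _ => Err(SingleError::MultipleEntities),
    }
}

/// Pattern 1: propagate the error to the caller with `?`.
fn player_health(players: &[u32]) -> Result<u32, SingleError> {
    let health = single(players)?;
    Ok(*health)
}

/// Pattern 2: treat the failure as expected and return early with `let else`.
fn healed_player_health(players: &[u32]) -> u32 {
    let Ok(health) = single(players) else {
        return 0;
    };
    health + 10
}
```

In tests, calling `.unwrap()` on the same `Result` restores the old panicking behaviour where that is what you want.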

# Objective

- Fixes #17960

## Solution

- Followed the [edition upgrade

guide](https://doc.rust-lang.org/edition-guide/editions/transitioning-an-existing-project-to-a-new-edition.html)

## Testing

- CI

---

## Summary of Changes

### Documentation Indentation

When using lists in documentation, proper indentation is now linted for.

This means subsequent lines within the same list item must start at the

same indentation level as the item.

```rust

/* Valid */
/// - Item 1
///   Run-on sentence.
/// - Item 2
struct Foo;

/* Invalid */
/// - Item 1
///     Run-on sentence.
/// - Item 2
struct Foo;

```

### Implicit `!` to `()` Conversion

`!` (the never return type, returned by `panic!`, etc.) no longer

implicitly converts to `()`. This is particularly painful for systems

with `todo!` or `panic!` statements, as they will no longer be functions

returning `()` (or `Result<()>`), making them invalid systems for

functions like `add_systems`. The ideal fix would be to accept functions

returning `!` (or rather, _not_ returning), but this is blocked on the

[stabilisation of the `!` type

itself](https://doc.rust-lang.org/std/primitive.never.html), which is

not done.

The "simple" fix would be to add an explicit `-> ()` to system

signatures (e.g., `|| { todo!() }` becomes `|| -> () { todo!() }`).

However, this is _also_ banned, as there is an existing lint which (IMO,

incorrectly) marks this as an unnecessary annotation.

So, the "fix" (read: workaround) is to put these kinds of `|| -> ! { ... }` closures into variables and give the variable an explicit type (e.g., `fn()`).

```rust

// Valid

let system: fn() = || todo!("Not implemented yet!");

app.add_systems(..., system);

// Invalid

app.add_systems(..., || todo!("Not implemented yet!"));

```

### Temporary Variable Lifetimes

The order in which temporary variables are dropped has changed. The

simple fix here is _usually_ to just assign temporaries to a named

variable before use.

### `gen` is a keyword

We can no longer use the name `gen` as it is reserved for a future

generator syntax. This involved replacing uses of the name `gen` with

`r#gen` (the raw-identifier syntax).
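A small sketch of what the rename looks like. The function body is an arbitrary placeholder; only the `r#gen` spelling matters.

```rust
/// Hypothetical function that would have been spelled `gen` before the
/// 2024 edition. The arithmetic is just a placeholder body.
fn r#gen(seed: u32) -> u32 {
    seed.wrapping_mul(1_664_525).wrapping_add(1_013_904_223)
}

/// Call sites need the raw-identifier spelling as well.
fn next_two(seed: u32) -> (u32, u32) {
    let first = r#gen(seed);
    (first, r#gen(first))
}
```

Raw identifiers are accepted in earlier editions too, so the same spelling compiles before and after the upgrade.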

### Formatting has changed

Use statements have had the order of imports changed, causing a

substantial +/-3,000 diff when applied. For now, I have opted-out of

this change by amending `rustfmt.toml`

```toml

style_edition = "2021"

```

This preserves the original formatting for now, reducing the size of

this PR. It would be a simple followup to update this to 2024 and run

`cargo fmt`.

### New `use<>` Opt-Out Syntax

Lifetimes are now implicitly included in RPIT types. There was a handful

of instances where it needed to be added to satisfy the borrow checker,

but there may be more cases where it _should_ be added to avoid

breakages in user code.

### `MyUnitStruct { .. }` is an invalid pattern

Previously, you could match against unit structs (and unit enum

variants) with a `{ .. }` destructuring. This is no longer valid.

### Pretty much every use of `ref` and `mut` is gone

Pattern binding has changed to the point where these terms are largely

unused now. They still serve a purpose, but it is far more niche now.

### `iter::repeat(...).take(...)` is bad

New lint recommends using the more explicit `iter::repeat_n(..., ...)`

instead.

## Migration Guide

The lifetimes of functions using return-position impl-trait (RPIT) are

likely _more_ conservative than they had been previously. If you

encounter lifetime issues with such a function, please create an issue

to investigate the addition of `+ use<...>`.
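For reference, a minimal sketch of what adding `use<...>` looks like. The `indices` function is a made-up example, not a Bevy API, and it assumes a toolchain with precise capturing (Rust 1.82 or newer).

```rust
/// Made-up example: the returned iterator only needs the slice's length,
/// so `use<T>` states that it captures the type parameter but not the
/// lifetime of the borrowed slice.
fn indices<T>(slice: &[T]) -> impl Iterator<Item = usize> + use<T> {
    0..slice.len()
}

/// Because the lifetime is not captured, the iterator may outlive the
/// borrowed data. Without `+ use<T>`, the 2024 edition rejects this.
fn indices_of_temporary() -> Vec<usize> {
    let iter;
    {
        let data = vec![10, 20, 30];
        iter = indices(&data);
    }
    iter.collect()
}
```

The second function is the kind of caller that can break when a signature starts capturing a lifetime it does not need.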

## Notes

- Check the individual commits for a clearer breakdown for what

_actually_ changed.

---------

Co-authored-by: François Mockers <francois.mockers@vleue.com>

Two-phase occlusion culling can be helpful for shadow maps just as it

can for a prepass, in order to reduce vertex and alpha mask fragment

shading overhead. This patch implements occlusion culling for shadow

maps from directional lights, when the `OcclusionCulling` component is

present on the entities containing the lights. Shadow maps from point

lights are deferred to a follow-up patch. Much of this patch involves

expanding the hierarchical Z-buffer to cover shadow maps in addition to

standard view depth buffers.

The `scene_viewer` example has been updated to add `OcclusionCulling` to

the directional light that it creates.

This improved the performance of the rend3 sci-fi test scene when

enabling shadows.

Deferred rendering currently doesn't support occlusion culling. This PR

implements it in a straightforward way, mirroring what we already do for

the non-deferred pipeline.

On the rend3 sci-fi base test scene, this resulted in roughly a 2×

speedup when applied on top of my other patches. For that scene, it was

useful to add another option, `--add-light`, which forces the addition

of a shadow-casting light, to the scene viewer, which I included in this

patch.

Conceptually, bins are ordered hash maps. We currently implement these

as a list of keys with an associated hash map. But we already have a

data type that implements ordered hash maps directly: `IndexMap`. This

patch switches Bevy to use `IndexMap`s for bins. Because we're memory

bound, this doesn't affect performance much, but it is cleaner.
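To make the "list of keys with an associated hash map" layout concrete, here is a rough `std`-only sketch of that shape. `OrderedBins` and its methods are illustrative names, not the types touched by this patch.

```rust
use std::collections::HashMap;
use std::hash::Hash;

/// Illustrative ordered map: `keys` records insertion order, `bins` holds
/// the values. `IndexMap` provides the same behaviour in a single type.
struct OrderedBins<K, V> {
    keys: Vec<K>,
    bins: HashMap<K, V>,
}

impl<K: Clone + Eq + Hash, V: Default> OrderedBins<K, V> {
    fn new() -> Self {
        OrderedBins { keys: Vec::new(), bins: HashMap::new() }
    }

    /// Returns the bin for `key`, creating it (and recording its position)
    /// the first time the key is seen.
    fn bin_mut(&mut self, key: K) -> &mut V {
        if !self.bins.contains_key(&key) {
            self.keys.push(key.clone());
        }
        self.bins.entry(key).or_default()
    }

    /// Visits bins in first-insertion order.
    fn ordered(&self) -> Vec<(&K, &V)> {
        self.keys.iter().map(|key| (key, &self.bins[key])).collect()
    }
}
```

Keeping two structures in sync by hand is the part that `IndexMap` removes.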

* Use texture atomics rather than buffer atomics for the visbuffer

(haven't tested perf on a raster-heavy scene yet)

* Unfortunately to clear the visbuffer we now need a compute pass to

clear it. Using wgpu's clear_texture function internally uses a buffer

-> image copy that's insanely expensive. Ideally it should be using

vkCmdClearColorImage, which I've opened an issue for

https://github.com/gfx-rs/wgpu/issues/7090. For now we'll have to stick

with a custom compute pass and all the extra code that brings.

* Faster resolve depth pass by discarding 0 depth pixels instead of

redundantly writing zero (2x faster for big depth textures like shadow

views)

# Objective

https://github.com/bevyengine/bevy/issues/17746

## Solution

- Change `Image.data` from being a `Vec<u8>` to an `Option<Vec<u8>>`

- Added functions to help with creating images

## Testing

- Did you test these changes? If so, how?

All current tests pass

Tested a variety of existing examples to make sure they don't crash

(they don't)

- If relevant, what platforms did you test these changes on, and are

there any important ones you can't test?

Linux x86 64-bit NixOS

---

## Migration Guide

Code that directly accesses `Image` data will now need to use unwrap or

handle the case where no data is provided.

Behaviour of `new_fill` slightly changed, but not in a way that is likely

to affect anything. It no longer panics and will fill the whole texture

instead of leaving black pixels if the data provided is not a nice

factor of the size of the image.
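A sketch of handling the missing-data case without `unwrap`. The `Image` struct here is a one-field stand-in and `first_pixel` is a hypothetical helper that assumes 4 bytes per pixel.

```rust
/// One-field stand-in for the real `Image`; only `data` is modelled.
struct Image {
    data: Option<Vec<u8>>,
}

/// Hypothetical helper: returns the first 4 bytes, or `None` if the image
/// has no CPU-side data or the buffer is too short.
fn first_pixel(image: &Image) -> Option<[u8; 4]> {
    let data = image.data.as_ref()?;
    let px = data.get(..4)?;
    Some([px[0], px[1], px[2], px[3]])
}
```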

---------

Co-authored-by: IceSentry <IceSentry@users.noreply.github.com>

Didn't remove WgpuWrapper. Not sure if it's needed or not still.

## Testing

- Did you test these changes? If so, how? Example runner

- Are there any parts that need more testing? Web (portable atomics

thingy?), DXC.

## Migration Guide

- Bevy has upgraded to [wgpu

v24](https://github.com/gfx-rs/wgpu/blob/trunk/CHANGELOG.md#v2400-2025-01-15).

- When using the DirectX 12 rendering backend, the new priority system for choosing a shader compiler is as follows:
  - If the `WGPU_DX12_COMPILER` environment variable is set at runtime, it is used.
  - Else if the new `statically-linked-dxc` feature is enabled, a custom version of DXC will be statically linked into your app at compile time.
  - Else Bevy will look in the app's working directory for `dxcompiler.dll` and `dxil.dll` at runtime.
  - Else if they are missing, Bevy will fall back to FXC (not recommended).
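The priority order above can be read as a simple fallback chain. The sketch below only models that ordering; `ShaderCompiler` and `choose_compiler` are hypothetical names, and the real selection happens inside Bevy and wgpu.

```rust
/// Hypothetical outcome of the selection; not a wgpu type.
#[derive(Debug, PartialEq)]
enum ShaderCompiler {
    /// Whatever `WGPU_DX12_COMPILER` asked for (value not interpreted here).
    FromEnv(String),
    StaticDxc,
    DynamicDxc,
    Fxc,
}

/// Models the documented priority order with plain inputs:
/// env override, then the static feature, then DLLs on disk, then FXC.
fn choose_compiler(
    env_override: Option<&str>,
    statically_linked_dxc: bool,
    dxc_dlls_present: bool,
) -> ShaderCompiler {
    if let Some(value) = env_override {
        ShaderCompiler::FromEnv(value.to_owned())
    } else if statically_linked_dxc {
        ShaderCompiler::StaticDxc
    } else if dxc_dlls_present {
        ShaderCompiler::DynamicDxc
    } else {
        ShaderCompiler::Fxc
    }
}
```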

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: IceSentry <c.giguere42@gmail.com>

Co-authored-by: François Mockers <francois.mockers@vleue.com>

# Objective

- The publish script copies the license files to all subcrates, meaning that every publish is dirty. This breaks git verification of crates.
- The order and list of crates to publish is manually maintained, which leads to errors. Cargo 1.84 is stricter, and the list is currently wrong.

## Solution

- duplicate all the licenses to all crates and remove the

`--allow-dirty` flag

- instead of a manual list of crates, get it from `cargo package

--workspace`

- remove the `--no-verify` flag to... verify more things?

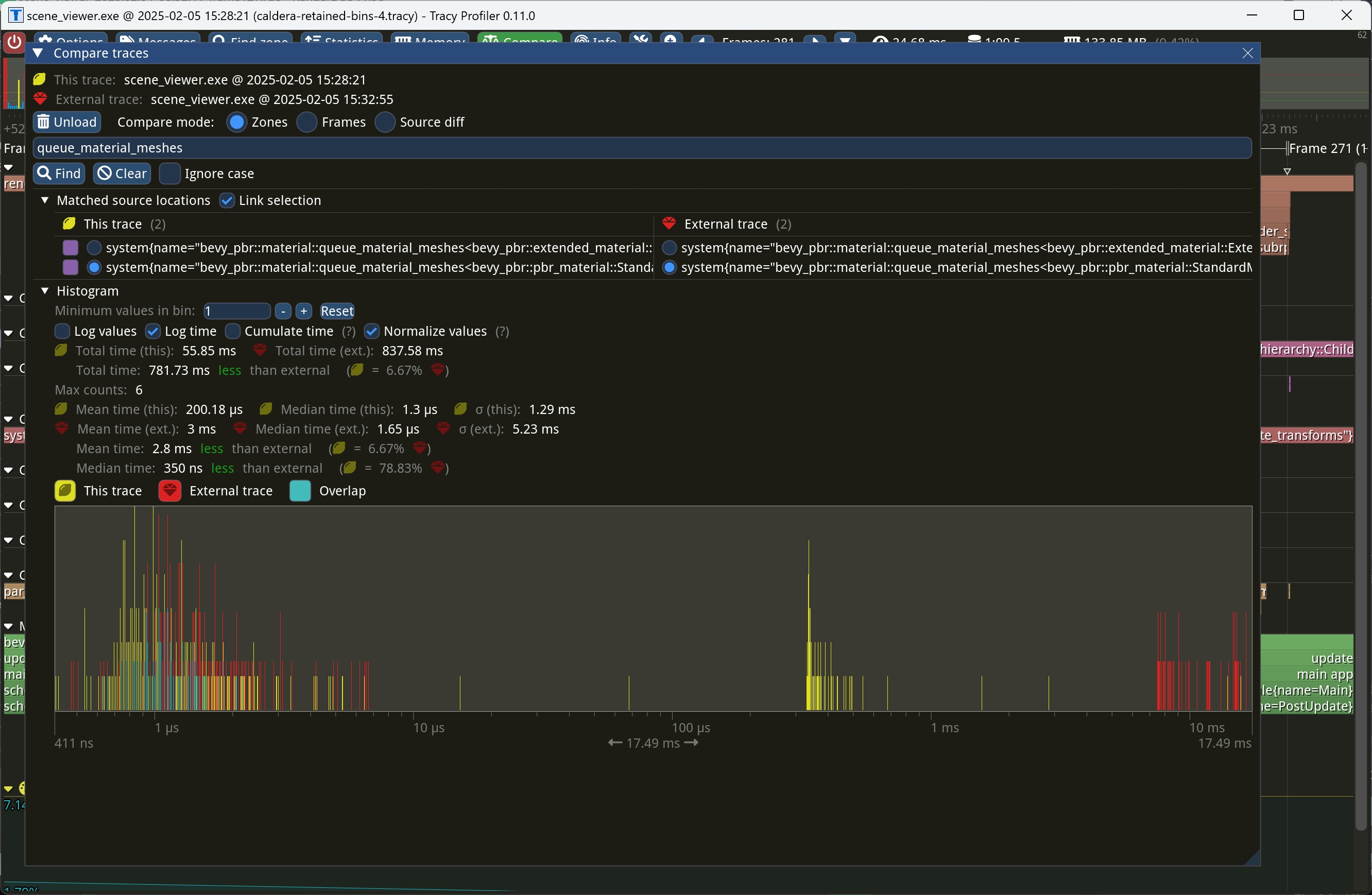

This PR makes Bevy keep entities in bins from frame to frame if they

haven't changed. This reduces the time spent in `queue_material_meshes`

and related functions to near zero for static geometry. This patch uses

the same change tick technique that #17567 uses to detect when meshes

have changed in such a way as to require re-binning.

In order to quickly find the relevant bin for an entity when that entity

has changed, we introduce a new type of cache, the *bin key cache*. This

cache stores a mapping from main world entity ID to cached bin key, as

well as the tick of the most recent change to the entity. As we iterate

through the visible entities in `queue_material_meshes`, we check the

cache to see whether the entity needs to be re-binned. If it doesn't,

then we mark it as clean in the `valid_cached_entity_bin_keys` bit set.

If it does, then we insert it into the correct bin, and then mark the

entity as clean. At the end, all entities not marked as clean are

removed from the bins.
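The bookkeeping described above can be sketched on the CPU with `std` collections. Everything here is simplified and hypothetical: entities, bin keys and ticks are plain `u32`s, and a `HashSet` stands in for the `valid_cached_entity_bin_keys` bit set.

```rust
use std::collections::{HashMap, HashSet};

type Entity = u32;
type BinKey = u32;
type Tick = u32;

#[derive(Default)]
struct RetainedBins {
    bins: HashMap<BinKey, HashSet<Entity>>,
    /// The "bin key cache": entity -> (cached bin key, tick of last change).
    cache: HashMap<Entity, (BinKey, Tick)>,
}

impl RetainedBins {
    /// `visible` holds (entity, current bin key, tick of last change).
    /// Returns how many entities had to be re-binned this frame.
    fn queue(&mut self, visible: &[(Entity, BinKey, Tick)]) -> usize {
        let mut valid = HashSet::new();
        let mut rebinned = 0;
        for &(entity, key, changed) in visible {
            let up_to_date =
                matches!(self.cache.get(&entity), Some(&(_, tick)) if tick == changed);
            if !up_to_date {
                // Changed or new: move it out of its old bin and into the new one.
                if let Some((old_key, _)) = self.cache.insert(entity, (key, changed)) {
                    self.remove_from_bin(old_key, entity);
                }
                self.bins.entry(key).or_default().insert(entity);
                rebinned += 1;
            }
            valid.insert(entity); // mark as clean either way
        }
        // Sweep: anything not marked clean this frame leaves the bins.
        let stale: Vec<Entity> =
            self.cache.keys().copied().filter(|e| !valid.contains(e)).collect();
        for entity in stale {
            if let Some((key, _)) = self.cache.remove(&entity) {
                self.remove_from_bin(key, entity);
            }
        }
        rebinned
    }

    fn remove_from_bin(&mut self, key: BinKey, entity: Entity) {
        let now_empty = match self.bins.get_mut(&key) {
            Some(bin) => {
                bin.remove(&entity);
                bin.is_empty()
            }
            None => false,
        };
        if now_empty {
            self.bins.remove(&key);
        }
    }
}
```

For static geometry, the second and later frames take the `up_to_date` branch for every entity, which is where the savings come from.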

This patch has a dramatic effect on the rendering performance of most

benchmarks, as it effectively eliminates `queue_material_meshes` from

the profile. Note, however, that it generally simultaneously regresses

`batch_and_prepare_binned_render_phase` by a bit (not by enough to

outweigh the win, however). I believe that's because, before this patch,

`queue_material_meshes` put the bins in the CPU cache for

`batch_and_prepare_binned_render_phase` to use, while with this patch,

`batch_and_prepare_binned_render_phase` must load the bins into the CPU

cache itself.

On Caldera, this reduces the time spent in `queue_material_meshes` from

5+ ms to 0.2ms-0.3ms. Note that benchmarking on that scene is very noisy

right now because of https://github.com/bevyengine/bevy/issues/17535.

# Objective

- Make use of the new `weak_handle!` macro added in

https://github.com/bevyengine/bevy/pull/17384

## Solution

- Migrate bevy from `Handle::weak_from_u128` to the new `weak_handle!`

macro that takes a random UUID

- Deprecate `Handle::weak_from_u128`, since there are no remaining use

cases that can't also be addressed by constructing the type manually

## Testing

- `cargo run -p ci -- test`

---

## Migration Guide

Replace `Handle::weak_from_u128` with `weak_handle!` and a random UUID.

# Objective

- Fixes #17411

## Solution

- Deprecated `Component::register_component_hooks`

- Added individual methods for each hook which return `None` if the hook

is unused.

## Testing

- CI

---

## Migration Guide

`Component::register_component_hooks` is now deprecated and will be

removed in a future release. When implementing `Component` manually,

also implement the respective hook methods on `Component`.

```rust

// Before

impl Component for Foo {

// snip

fn register_component_hooks(hooks: &mut ComponentHooks) {

hooks.on_add(foo_on_add);

}

}

// After

impl Component for Foo {

// snip

fn on_add() -> Option<ComponentHook> {

Some(foo_on_add)

}

}

```

## Notes

I've chosen to deprecate `Component::register_component_hooks` rather

than outright remove it to ease the migration guide. While it is in a

state of deprecation, it must be used by

`Components::register_component_internal` to ensure users who haven't

migrated to the new hook definition scheme aren't left behind. For users

of the new scheme, a default implementation of

`Component::register_component_hooks` is provided which forwards the new

individual hook implementations.

Personally, I think this is a cleaner API to work with, and would allow

the documentation for hooks to exist on the respective `Component`

methods (e.g., documentation for `OnAdd` can exist on

`Component::on_add`). Ideally, `Component::on_add` would be the hook

itself rather than a getter for the hook, but it is the only way to

early-out for a no-op hook, which is important for performance.
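The getter-returning-`Option` design can be sketched without Bevy. In this sketch the `Component` trait, the `Hook` signature and `run_on_add` are simplified stand-ins, not the real definitions.

```rust
/// Simplified hook signature; the real `ComponentHook` takes world access.
type Hook = fn(&mut Vec<&'static str>);

/// Stand-in trait: each hook getter defaults to `None`, meaning "unused".
trait Component {
    fn on_add() -> Option<Hook> {
        None
    }
    fn on_remove() -> Option<Hook> {
        None
    }
}

struct Foo;
struct Bar;

fn foo_on_add(log: &mut Vec<&'static str>) {
    log.push("Foo added");
}

impl Component for Foo {
    fn on_add() -> Option<Hook> {
        Some(foo_on_add)
    }
}

// `Bar` registers no hooks at all.
impl Component for Bar {}

/// Hypothetical caller: skips all hook machinery when the getter is `None`.
/// Returns whether a hook actually ran.
fn run_on_add<C: Component>(log: &mut Vec<&'static str>) -> bool {
    let Some(hook) = C::on_add() else {
        return false; // early-out for a no-op hook
    };
    hook(log);
    true
}
```

If `on_add` were the hook itself, the caller could not tell a real hook from an empty default body, which is the early-out that the getter preserves.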

## Migration Guide

`Component::register_component_hooks` has been deprecated. If you are

manually implementing the `Component` trait and registering hooks there,

use the individual methods such as `on_add` instead for increased

clarity.

# Objective

Fixes #16628

## Solution

Matrices were being applied in the wrong order.

## Testing

Ran `skybox` example with rotations applied to the `Skybox` on the `x`,

`y`, and `z` axes (one at a time).

e.g.

```rust

Skybox {

image: skybox_handle.clone(),

brightness: 1000.0,

rotation: Quat::from_rotation_y(-45.0_f32.to_radians()),

}

```

## Showcase

[Screencast_20250121_151232.webm](https://github.com/user-attachments/assets/3df68714-f5f1-4d8c-8e08-cbab525a8bda)

*Occlusion culling* allows the GPU to skip the vertex and fragment

shading overhead for objects that can be quickly proved to be invisible

because they're behind other geometry. A depth prepass already

eliminates most fragment shading overhead for occluded objects, but the

vertex shading overhead, as well as the cost of testing and rejecting

fragments against the Z-buffer, is presently unavoidable for standard

meshes. We currently perform occlusion culling only for meshlets. But

other meshes, such as skinned meshes, can benefit from occlusion culling

too in order to avoid the transform and skinning overhead for unseen

meshes.

This commit adapts the same [*two-phase occlusion culling*] technique

that meshlets use to Bevy's standard 3D mesh pipeline when the new

`OcclusionCulling` component, as well as the `DepthPrepass` component,

are present on the camera. It has these steps:

1. *Early depth prepass*: We use the hierarchical Z-buffer from the

previous frame to cull meshes for the initial depth prepass, effectively

rendering only the meshes that were visible in the last frame.

2. *Early depth downsample*: We downsample the depth buffer to create

another hierarchical Z-buffer, this time with the current view

transform.

3. *Late depth prepass*: We use the new hierarchical Z-buffer to test

all meshes that weren't rendered in the early depth prepass. Any meshes

that pass this check are rendered.

4. *Late depth downsample*: Again, we downsample the depth buffer to

create a hierarchical Z-buffer in preparation for the early depth

prepass of the next frame. This step is done after all the rendering, in

order to account for custom phase items that might write to the depth

buffer.
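The four steps can be mimicked with a toy CPU model. This is only a sketch under heavy assumptions: the "screen" is a row of 8 columns, a flat depth array stands in for the hierarchical Z-buffer (no mip chain, no reprojection), and every name is hypothetical.

```rust
const WIDTH: usize = 8;

/// Toy object: covers columns `x0..x1` at a constant depth (smaller = closer).
#[derive(Clone, Copy)]
struct Object {
    id: u32,
    x0: usize,
    x1: usize,
    depth: f32,
}

fn rasterize(depth_buffer: &mut [f32; WIDTH], obj: &Object) {
    for d in &mut depth_buffer[obj.x0..obj.x1] {
        *d = (*d).min(obj.depth);
    }
}

/// An object survives if it is at least as close as the stored depth in
/// any column it covers.
fn passes_depth_test(hiz: &[f32; WIDTH], obj: &Object) -> bool {
    hiz[obj.x0..obj.x1].iter().any(|&d| obj.depth <= d)
}

/// Returns the ids drawn this frame and the depth buffer that the next
/// frame uses as its "previous" hi-Z.
fn render_frame(objects: &[Object], prev_hiz: &[f32; WIDTH]) -> (Vec<u32>, [f32; WIDTH]) {
    let mut depth = [f32::INFINITY; WIDTH];
    let mut drawn = Vec::new();
    let mut deferred = Vec::new();
    // 1. Early pass: cull against last frame's hi-Z.
    for obj in objects {
        if passes_depth_test(prev_hiz, obj) {
            rasterize(&mut depth, obj);
            drawn.push(obj.id);
        } else {
            deferred.push(obj);
        }
    }
    // 2. Early downsample: snapshot the current depth as the new hi-Z.
    let hiz = depth;
    // 3. Late pass: re-test everything the early pass rejected.
    for obj in deferred {
        if passes_depth_test(&hiz, obj) {
            rasterize(&mut depth, obj);
            drawn.push(obj.id);
        }
    }
    // 4. Late downsample: the final depth feeds the next frame.
    (drawn, depth)
}
```

The late pass is what catches objects that were hidden last frame but have since become visible, so nothing is lost when the occluder moves.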

Note that this patch has no effect on the per-mesh CPU overhead for

occluded objects, which remains high for a GPU-driven renderer due to

the lack of `cold-specialization` and retained bins. If

`cold-specialization` and retained bins weren't on the horizon, then a

more traditional approach like potentially visible sets (PVS) or low-res

CPU rendering would probably be more efficient than the GPU-driven

approach that this patch implements for most scenes. However, at this

point the amount of effort required to implement a PVS baking tool or a

low-res CPU renderer would probably be greater than landing

`cold-specialization` and retained bins, and the GPU driven approach is

the more modern one anyway. It does mean that the performance

improvements from occlusion culling as implemented in this patch *today*

are likely to be limited, because of the high CPU overhead for occluded

meshes.

Note also that this patch currently doesn't implement occlusion culling

for 2D objects or shadow maps. Those can be addressed in a follow-up.

Additionally, note that the techniques in this patch require compute

shaders, which excludes support for WebGL 2.

This PR is marked experimental because of known precision issues with

the downsampling approach when applied to non-power-of-two framebuffer

sizes (i.e. most of them). These precision issues can, in rare cases,

cause objects to be judged occluded that in fact are not. (I've never

seen this in practice, but I know it's possible; it tends to be likelier

to happen with small meshes.) As a follow-up to this patch, we desire to

switch to the [SPD-based hi-Z buffer shader from the Granite engine],

which doesn't suffer from these problems, at which point we should be

able to graduate this feature from experimental status. I opted not to

include that rewrite in this patch for two reasons: (1) @JMS55 is

planning on doing the rewrite to coincide with the new availability of

image atomic operations in Naga; (2) to reduce the scope of this patch.

A new example, `occlusion_culling`, has been added. It demonstrates

objects becoming quickly occluded and disoccluded by dynamic geometry

and shows the number of objects that are actually being rendered. Also,

a new `--occlusion-culling` switch has been added to `scene_viewer`, in

order to make it easy to test this patch with large scenes like Bistro.

[*two-phase occlusion culling*]:

https://medium.com/@mil_kru/two-pass-occlusion-culling-4100edcad501

[Aaltonen SIGGRAPH 2015]:

https://www.advances.realtimerendering.com/s2015/aaltonenhaar_siggraph2015_combined_final_footer_220dpi.pdf

[Some literature]:

https://gist.github.com/reduz/c5769d0e705d8ab7ac187d63be0099b5?permalink_comment_id=5040452#gistcomment-5040452

[SPD-based hi-Z buffer shader from the Granite engine]:

https://github.com/Themaister/Granite/blob/master/assets/shaders/post/hiz.comp

## Migration guide

* When enqueuing a custom mesh pipeline, work item buffers are now

created with

`bevy::render::batching::gpu_preprocessing::get_or_create_work_item_buffer`,

not `PreprocessWorkItemBuffers::new`. See the

`specialized_mesh_pipeline` example.

## Showcase

Occlusion culling example:

Bistro zoomed out, before occlusion culling:

Bistro zoomed out, after occlusion culling:

In this scene, occlusion culling reduces the number of meshes Bevy has

to render from 1591 to 585.

# Objective

- Contributes to #16877

## Solution

- Moved `hashbrown`, `foldhash`, and related types out of `bevy_utils`

and into `bevy_platform_support`

- Refactored the above to match the layout of these types in `std`.

- Updated crates as required.

## Testing

- CI

---

## Migration Guide

- The following items were moved out of `bevy_utils` and into

`bevy_platform_support::hash`:

- `FixedState`

- `DefaultHasher`

- `RandomState`

- `FixedHasher`

- `Hashed`

- `PassHash`

- `PassHasher`

- `NoOpHash`

- The following items were moved out of `bevy_utils` and into

`bevy_platform_support::collections`:

- `HashMap`

- `HashSet`

- `bevy_utils::hashbrown` has been removed. Instead, import from

`bevy_platform_support::collections` _or_ take a dependency on

`hashbrown` directly.

- `bevy_utils::Entry` has been removed. Instead, import from

`bevy_platform_support::collections::hash_map` or

`bevy_platform_support::collections::hash_set` as appropriate.

- All of the above applies equally to `bevy::utils` and

`bevy::platform_support`.

## Notes

- I left `PreHashMap`, `PreHashMapExt`, and `TypeIdMap` in `bevy_utils`

as they might be candidates for micro-crating. They can always be moved

into `bevy_platform_support` at a later date if desired.

# Objective

- Make the function signature for `ComponentHook` less verbose

## Solution

- Refactored `Entity`, `ComponentId`, and `Option<&Location>` into a new

`HookContext` struct.

## Testing

- CI

---

## Migration Guide

Update the function signatures for your component hooks to only take 2

arguments, `world` and `context`. Note that because `HookContext` is

plain data with all members public, you can use de-structuring to

simplify migration.

```rust

// Before

fn my_hook(

mut world: DeferredWorld,

entity: Entity,

component_id: ComponentId,

) { ... }

// After

fn my_hook(

mut world: DeferredWorld,

HookContext { entity, component_id, caller }: HookContext,

) { ... }

```

Likewise, if you were discarding certain parameters, you can use `..` in

the de-structuring:

```rust

// Before

fn my_hook(

mut world: DeferredWorld,

entity: Entity,

_: ComponentId,

) { ... }

// After

fn my_hook(

mut world: DeferredWorld,

HookContext { entity, .. }: HookContext,

) { ... }

```
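
For readers who want to try the de-structuring pattern outside of Bevy, here is a minimal, self-contained sketch. `Entity`, `ComponentId`, `DeferredWorld` and `HookContext` below are hypothetical stand-ins, not the real `bevy_ecs` types. In particular, the stand-in world is passed as `&mut` rather than by value, and `caller` is simplified to a string.

```rust
// Hypothetical stand-ins for the real `bevy_ecs` types, so the sketch compiles on its own.
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
pub struct Entity(pub u32);

#[derive(Clone, Copy, Debug, PartialEq, Eq)]
pub struct ComponentId(pub usize);

/// Stand-in for `DeferredWorld`; it only records which entities a hook touched.
#[derive(Default)]
pub struct DeferredWorld {
    pub touched: Vec<Entity>,
}

/// Plain data with all members public, mirroring the shape described above.
#[derive(Clone, Copy, Debug)]
pub struct HookContext {
    pub entity: Entity,
    pub component_id: ComponentId,
    pub caller: Option<&'static str>,
}

/// A hook that only needs the entity: `..` discards the remaining fields.
pub fn my_hook(world: &mut DeferredWorld, HookContext { entity, .. }: HookContext) {
    world.touched.push(entity);
}

/// A hook that takes the context whole and reads fields as needed.
pub fn my_other_hook(world: &mut DeferredWorld, context: HookContext) {
    if context.caller.is_some() {
        world.touched.push(context.entity);
    }
}
```

Both styles accept the same `HookContext` value, so a hook can start with the whole-struct form and switch to de-structuring later without affecting callers.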

# Objective

Fixes #14708

Also fixes some commands not updating tracked location.

## Solution

`ObserverTrigger` has a new `caller` field with the

`track_change_detection` feature;

hooks take an additional caller parameter (which is `Some(…)` or `None`

depending on the feature).

## Testing

See the new tests in `src/observer/mod.rs`

---

## Showcase

Observers now know from where they were triggered (if

`track_change_detection` is enabled):

```rust

world.observe(move |trigger: Trigger<OnAdd, Foo>| {

println!("Added Foo from {}", trigger.caller());

});

```
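
As a rough sketch of how a call site can be captured in plain Rust, the snippet below uses the standard library's `#[track_caller]` attribute and `std::panic::Location::caller()`. The `Trigger` type and `trigger_event` function are hypothetical. A runtime flag stands in for the `track_change_detection` feature, which in Bevy is a compile-time switch.

```rust
use std::panic::Location;

/// Hypothetical stand-in for an observer trigger that remembers its call site.
pub struct Trigger {
    caller: Option<&'static Location<'static>>,
}

impl Trigger {
    /// `Some(location)` when tracking was enabled, `None` otherwise.
    pub fn caller(&self) -> Option<&'static Location<'static>> {
        self.caller
    }
}

/// `#[track_caller]` makes `Location::caller()` report the location of the
/// code that called `trigger_event`, not the location inside this function.
#[track_caller]
pub fn trigger_event(tracking_enabled: bool) -> Trigger {
    let caller = if tracking_enabled {
        Some(Location::caller())
    } else {
        None
    };
    Trigger { caller }
}
```

`Location` implements `Display`, so a captured location can be printed directly with `{}`.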

## Migration

- Hooks now take an additional `Option<&'static Location>` argument.

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

# Objective

`bevy_ecs`'s `system` module is something of a grab bag, and *very*

large. This is particularly true for the `system_param` module, which is

more than 2k lines long!

While it could be defensible to put `Res` and `ResMut` there (lol no

they're in change_detection.rs, obviously), it doesn't make any sense to

put the `Resource` trait there. This is confusing to navigate (and

painful to work on and review).

## Solution

- Create a root level `bevy_ecs/resource.rs` module to mirror

`bevy_ecs/component.rs`

- move the `Resource` trait to that module

- move the `Resource` derive macro to that module as well (Rust really

likes when you pun on the names of the derive macro and trait and put

them in the same path)

- fix all of the imports

## Notes to reviewers

- We could probably move more stuff into here, but I wanted to keep this

PR as small as possible given the absurd level of import changes.

- This PR is ground work for my upcoming attempts to store resource data

on components (resources-as-entities). Splitting this code out will make

the work and review a bit easier, and is the sort of overdue refactor

that's good to do as part of more meaningful work.

## Testing

`cargo build` works!

## Migration Guide

`bevy_ecs::system::Resource` has been moved to

`bevy_ecs::resource::Resource`.

# Objective

The existing `RelationshipSourceCollection` uses `Vec` as the only

possible backing for our relationships. While a reasonable choice,

benchmarking use cases might reveal that a different data type is better

or faster.

For example:

- Not all relationships require a stable ordering between the

relationship sources (i.e. children). In cases where we a) have many

such relations and b) don't care about the ordering between them, a hash

set is likely a better data structure than a `Vec`.

- The number of children-like entities may be small on average, and a

`smallvec` may be faster.

## Solution

- Implement `RelationshipSourceCollection` for `EntityHashSet`, our

custom entity-optimized `HashSet`.

- ~~Implement `DoubleEndedIterator` for `EntityHashSet` to make things

compile.~~

- This implementation was cursed and very surprising.

- Instead, by moving the iterator type on `RelationshipSourceCollection`

from an erased RPTIT to an explicit associated type we can add a trait

bound on the offending methods!

- Implement `RelationshipSourceCollection` for `SmallVec`
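
The associated-type approach described in the list above can be sketched roughly as follows. `SourceCollection` is a hypothetical, simplified stand-in for `RelationshipSourceCollection`, and `u32` stands in for `Entity`. The point is that a named iterator type lets an individual method require `DoubleEndedIterator`, so a hash set can implement the trait without providing that method.

```rust
use std::collections::HashSet;

/// Hypothetical, simplified stand-in for `RelationshipSourceCollection`.
pub trait SourceCollection {
    /// Explicit associated iterator type instead of `-> impl Iterator`,
    /// so that methods can place extra bounds on it.
    type SourceIter<'a>: Iterator<Item = u32>
    where
        Self: 'a;

    fn iter(&self) -> Self::SourceIter<'_>;

    /// Only callable when the backing iterator can be walked from the back.
    fn last_source<'a>(&'a self) -> Option<u32>
    where
        Self::SourceIter<'a>: DoubleEndedIterator,
    {
        self.iter().next_back()
    }
}

impl SourceCollection for Vec<u32> {
    type SourceIter<'a> = std::iter::Copied<std::slice::Iter<'a, u32>>;

    fn iter(&self) -> Self::SourceIter<'_> {
        // Go through the slice explicitly to avoid recursing into this trait method.
        self.as_slice().iter().copied()
    }
}

impl SourceCollection for HashSet<u32> {
    type SourceIter<'a> = std::iter::Copied<std::collections::hash_set::Iter<'a, u32>>;

    fn iter(&self) -> Self::SourceIter<'_> {
        // The inherent `HashSet::iter` takes priority over the trait method here.
        HashSet::iter(self).copied()
    }
}
```

With this shape, `last_source` is available on the `Vec` implementation. Calling it on the `HashSet` implementation is rejected at compile time, because the standard hash set iterator is not double-ended.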

## Testing

I've added a pair of new tests to make sure this pattern compiles

successfully in practice!

## Migration Guide

`EntityHashSet` and `EntityHashMap` are no longer re-exported in

`bevy_ecs::entity` directly. If you were not using `bevy_ecs` / `bevy`'s

`prelude`, you can access them through their now-public modules,

`hash_set` and `hash_map` instead.

## Notes to reviewers

The `EntityHashSet::Iter` type needs to be public for this impl to be

allowed. I initially renamed it to something that wasn't ambiguous and

re-exported it, but as @Victoronz pointed out, that was somewhat

unidiomatic.

In

1a8564898f,

I instead made the `entity_hash_set` module (and its `entity_hash_map`

sister) public, and removed the re-export. I prefer this design (give me

module docs please), but it leads to a lot of churn in this PR.

Let me know which you'd prefer, and if you'd like me to split that

change out into its own micro PR.

# Objective

- Contributes to #16877

## Solution

- Initial creation of `bevy_platform_support` crate.

- Moved `bevy_utils::Instant` into new `bevy_platform_support` crate.

- Moved `portable-atomic`, `portable-atomic-util`, and

`critical-section` into new `bevy_platform_support` crate.

## Testing

- CI

---

## Showcase

Instead of needing code like this to import an `Arc`:

```rust

#[cfg(feature = "portable-atomic")]

use portable_atomic_util::Arc;

#[cfg(not(feature = "portable-atomic"))]

use alloc::sync::Arc;

```

We can now use:

```rust

use bevy_platform_support::sync::Arc;

```

This applies to many other types, but the goal is overall the same:

allowing crates to use `std`-like types without the boilerplate of

conditional compilation and platform-dependencies.

## Migration Guide

- Replace imports of `bevy_utils::Instant` with

`bevy_platform_support::time::Instant`

- Replace imports of `bevy::utils::Instant` with

`bevy::platform_support::time::Instant`

## Notes

- `bevy_platform_support` hasn't been reserved on `crates.io`

- ~~`bevy_platform_support` is not re-exported from `bevy` at this time.

It may be worthwhile exporting this crate, but I am unsure of a

reasonable name to export it under (`platform_support` may be a bit

wordy for user-facing).~~

- I've included an implementation of `Instant` which is suitable for

`no_std` platforms that are not Wasm for the sake of eliminating feature

gates around its use. It may be a controversial inclusion, so I'm happy

to remove it if required.

- There are many other items (`spin`, `bevy_utils::Sync(Unsafe)Cell`,

etc.) which should be added to this crate. I have kept the initial scope

small to demonstrate utility without making this too unwieldy.

---------

Co-authored-by: TimJentzsch <TimJentzsch@users.noreply.github.com>

Co-authored-by: Chris Russell <8494645+chescock@users.noreply.github.com>

Co-authored-by: François Mockers <francois.mockers@vleue.com>

# Objective

Fixes https://github.com/bevyengine/bevy/issues/17111

## Solution

Move `#![warn(clippy::allow_attributes,

clippy::allow_attributes_without_reason)]` to the workspace `Cargo.toml`

## Testing

Lots of CI testing, and local testing too.

---------

Co-authored-by: Benjamin Brienen <benjamin.brienen@outlook.com>

# Objective

- https://github.com/bevyengine/bevy/issues/17111

## Solution

Set the `clippy::allow_attributes` and

`clippy::allow_attributes_without_reason` lints to `warn`, and bring

`bevy_core_pipeline` in line with the new restrictions.

## Testing

`cargo clippy` and `cargo test --package bevy_core_pipeline` were run,

and no warnings were encountered.

This commit allows Bevy to use `multi_draw_indirect_count` for drawing

meshes. The `multi_draw_indirect_count` feature works just like

`multi_draw_indirect`, but it takes the number of indirect parameters

from a GPU buffer rather than specifying it on the CPU.

Currently, the CPU constructs the list of indirect draw parameters with

the instance count for each batch set to zero, uploads the resulting

buffer to the GPU, and dispatches a compute shader that bumps the

instance count for each mesh that survives culling. Unfortunately, this

is inefficient when we support `multi_draw_indirect_count`. Draw

commands corresponding to meshes for which all instances were culled

will remain present in the list when calling

`multi_draw_indirect_count`, causing overhead. Proper use of

`multi_draw_indirect_count` requires eliminating these empty draw

commands.

To address this inefficiency, this PR makes Bevy fully construct the

indirect draw commands on the GPU instead of on the CPU. Instead of

writing instance counts to the draw command buffer, the mesh

preprocessing shader now writes them to a separate *indirect metadata

buffer*. A second compute dispatch known as the *build indirect

parameters* shader runs after mesh preprocessing and converts the

indirect draw metadata into actual indirect draw commands for the GPU.

The build indirect parameters shader operates on a batch at a time,

rather than an instance at a time, and as such each thread writes only 0

or 1 indirect draw parameters, simplifying the logic in

`mesh_preprocessing`, which currently has to have special cases for the

first mesh in each batch. The build indirect parameters shader emits

draw commands in a tightly packed manner, enabling maximally efficient

use of `multi_draw_indirect_count`.
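
To make the compaction step concrete, here is a CPU-side sketch of what a build-indirect-parameters pass does conceptually. `IndirectMetadata` and `build_indirect_parameters` are hypothetical names for illustration, not Bevy's actual types or shader code. On the GPU each batch would be handled by its own thread with an atomically incremented output counter, which this sequential loop only approximates.

```rust
/// Hypothetical per-batch record written by a mesh preprocessing pass.
#[derive(Clone, Copy, Debug)]
pub struct IndirectMetadata {
    pub first_index: u32,
    pub index_count: u32,
    pub base_vertex: i32,
    pub first_instance: u32,
    /// Number of instances that survived culling; zero means the whole batch was culled.
    pub surviving_instances: u32,
}

/// Field layout of an indexed indirect draw command (five 32-bit values).
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
pub struct DrawIndexedIndirect {
    pub index_count: u32,
    pub instance_count: u32,
    pub first_index: u32,
    pub base_vertex: i32,
    pub first_instance: u32,
}

/// Emits zero or one draw command per batch, tightly packed, and returns the
/// number of commands written (the value a count buffer would hold).
pub fn build_indirect_parameters(
    metadata: &[IndirectMetadata],
) -> (Vec<DrawIndexedIndirect>, u32) {
    let mut commands = Vec::with_capacity(metadata.len());
    for batch in metadata {
        // Fully culled batches produce no draw command at all.
        if batch.surviving_instances == 0 {
            continue;
        }
        commands.push(DrawIndexedIndirect {
            index_count: batch.index_count,
            instance_count: batch.surviving_instances,
            first_index: batch.first_index,
            base_vertex: batch.base_vertex,
            first_instance: batch.first_instance,
        });
    }
    let count = commands.len() as u32;
    (commands, count)
}
```

Because empty batches are skipped rather than emitted with an instance count of zero, the returned count matches the number of draws that actually need to be issued.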

Along the way, this patch switches mesh preprocessing to dispatch one

compute invocation per render phase per view, instead of dispatching one

compute invocation per view. This is preparation for two-phase occlusion

culling, in which we will have two mesh preprocessing stages. In that

scenario, the first mesh preprocessing stage must only process opaque

and alpha tested objects, so the work items must be separated into those

that are opaque or alpha tested and those that aren't. Thus this PR

splits out the work items into a separate buffer for each phase. As this

patch rewrites so much of the mesh preprocessing infrastructure, it was

simpler to just fold the change into this patch instead of deferring it

to the forthcoming occlusion culling PR.

Finally, this patch changes mesh preprocessing so that it runs

separately for indexed and non-indexed meshes. This is because draw

commands for indexed and non-indexed meshes have different sizes and

layouts. *The existing code is actually broken for non-indexed meshes*,

as it attempts to overlay the indirect parameters for non-indexed meshes

on top of those for indexed meshes. Consequently, right now the

parameters will be read incorrectly when multiple non-indexed meshes are

multi-drawn together. *This is a bug fix* and, as with the change to

dispatch phases separately noted above, was easiest to include in this

patch as opposed to separately.

## Migration Guide

* Systems that add custom phase items now need to populate the indirect

drawing-related buffers. See the `specialized_mesh_pipeline` example for

how this is done.

We won't be able to retain render phases from frame to frame if the keys

are unstable. It's not as simple as keying off the main world

entity, however, because some main world entities extract to multiple

render world entities. For example, directional lights extract to

multiple shadow cascades, and point lights extract to one view per

cubemap face. Therefore, we key off a new type, `RetainedViewEntity`,

which contains the main entity plus a *subview ID*.
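
A rough sketch of the keying idea, using only the standard library: `MainEntity`, `RetainedViewKey` and `RetainedPhases` below are hypothetical simplifications for illustration and do not reproduce the real `RetainedViewEntity` definition. The key combines a main world entity with a subview index, so each cubemap face or shadow cascade gets its own entry that stays stable from frame to frame.

```rust
use std::collections::HashMap;

/// Hypothetical stand-in for a main world entity ID.
#[derive(Clone, Copy, Debug, PartialEq, Eq, Hash)]
pub struct MainEntity(pub u64);

/// Hypothetical stable key: the main entity plus a subview index
/// (for example, a cubemap face or a shadow cascade).
#[derive(Clone, Copy, Debug, PartialEq, Eq, Hash)]
pub struct RetainedViewKey {
    pub main_entity: MainEntity,
    pub subview_index: u32,
}

/// Phase storage that survives across frames because its keys are stable.
#[derive(Default)]
pub struct RetainedPhases {
    phases: HashMap<RetainedViewKey, Vec<&'static str>>,
}

impl RetainedPhases {
    /// Returns the phase for this view, creating it on first use.
    pub fn phase_mut(&mut self, key: RetainedViewKey) -> &mut Vec<&'static str> {
        self.phases.entry(key).or_default()
    }

    /// Number of distinct views currently tracked.
    pub fn view_count(&self) -> usize {
        self.phases.len()
    }
}
```

A point light with six cubemap faces would occupy six entries under the same main entity, and looking up the same key on a later frame returns the phase that was populated earlier.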

This is part of the preparation for retained bins.

---------

Co-authored-by: ickshonpe <david.curthoys@googlemail.com>

# Objective

Stumbled upon a `from <-> form` transposition while reviewing a PR,

thought it was interesting, and went down a bit of a rabbit hole.

## Solution

Fix em

# Objective

Many instances of `clippy::too_many_arguments` linting happen to be on

systems - functions which we don't call manually, and thus there's not

much reason to worry about the argument count.

## Solution

Allow `clippy::too_many_arguments` globally, and remove all lint

attributes related to it.

# Objective

I never realized `clippy::type_complexity` was an allowed lint - I've

been assuming it'd generate a warning when performing my linting PRs.

## Solution

Removes any instances of `#[allow(clippy::type_complexity)]` and

`#[expect(clippy::type_complexity)]`

## Testing

`cargo clippy` ran without errors or warnings.