# Objective

Fixes #9367.

Yet another follow-up to #16547.

These traits were initially based on `Borrow<Entity>` because that trait

was what they were replacing, and it felt close enough in meaning.

However, they ultimately don't quite match: `borrow` always returns

references, whereas `EntityBorrow` always returns a plain `Entity`.

Additionally, `EntityBorrow` can imply that we are borrowing an `Entity`

from the ECS, which is not what it does.

Due to its safety contract, `TrustedEntityBorrow` is an

important and widely used trait for `EntitySet` functionality.

In contrast, the safe `EntityBorrow` does not see much use, because even

outside of `EntitySet`-related functionality, it is a better idea to

accept `TrustedEntityBorrow` over `EntityBorrow`.

Furthermore, as #9367 points out, abstracting over returning `Entity`

from pointers/structs that contain it can skip some ergonomic friction.

On top of that, there are aspects of #18319 and #18408 that are relevant

to naming:

We've run into the issue that relying on a type default can switch

generic order. This is livable in some contexts, but unacceptable in

others.

To remedy that, we'd need to switch to a type alias approach:

The "defaulted" `Entity` case becomes a

`UniqueEntity*`/`Entity*Map`/`Entity*Set` alias, and the base type

receives a more general name. `TrustedEntityBorrow` does not mesh

clearly with sensible base type names.

## Solution

Replace any `EntityBorrow` bounds with `TrustedEntityBorrow`.

+

Rename them as such:

`EntityBorrow` -> `ContainsEntity`

`TrustedEntityBorrow` -> `EntityEquivalent`

For `EntityBorrow` we produce a change in meaning: we designate it for

types that aren't necessarily strict wrappers around `Entity` or some

pointer to `Entity`, but rather any of the myriad of types that contain

a single associated `Entity`.

This pattern can already be seen in the common `entity`/`id` methods

across the engine.

We do not mean for `ContainsEntity` to be a trait that abstracts input

API (like how `AsRef<T>` is often used, for example), because eliding

`entity()` would be too implicit in the general case.

We prefix "Contains" to match the intuition of a struct with an `Entity`

field, like some contain a `length` or `capacity`.

It gives the impression of structure, which avoids the implication of a

relationship to the `ECS`.

`HasEntity`, for example, could be interpreted as "a currently live entity".

As an input trait for APIs like #9367 envisioned, `TrustedEntityBorrow`

is a better fit, because it *does* restrict itself to strict wrappers

and pointers. Which is why we replace any

`EntityBorrow`/`ContainsEntity` bounds with

`TrustedEntityBorrow`/`EntityEquivalent`.

Here, the name `EntityEquivalent` is a lot closer to its actual meaning,

which is "A type that is both equivalent to an `Entity`, and forms the

same total order when compared".

Prior art for this is the

[`Equivalent`](https://docs.rs/hashbrown/latest/hashbrown/trait.Equivalent.html)

trait in `hashbrown`, which utilizes both `Borrow` and `Eq` for its one

blanket impl!

Given that we lose the `Borrow` moniker, and `Equivalent` can carry

various meanings, we expand on the safety comment of `EntityEquivalent`

somewhat. That should help prevent the confusion we saw in

[#18408](https://github.com/bevyengine/bevy/pull/18408#issuecomment-2742094176).

The new name meshes a lot better with the type aliasing approach in

#18408, by aligning with the base name `EntityEquivalentHashMap`.

For a consistent scheme among all set types, we can use this scheme for

the `UniqueEntity*` wrapper types as well!

This allows us to undo the switched generic order that was introduced to

`UniqueEntityArray` by its `Entity` default.

Even without the type aliases, I think these renames are worth doing!

## Migration Guide

Any use of `EntityBorrow` becomes `ContainsEntity`.

Any use of `TrustedEntityBorrow` becomes `EntityEquivalent`.
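
The split between the two renamed traits can be sketched as follows. This is a minimal illustration, not the actual `bevy_ecs` code: `Entity` is a stand-in newtype, `Targeting` and `collect_ids` are hypothetical names, and the safety comment paraphrases the contract described above.

```rust
/// Stand-in for `bevy_ecs::entity::Entity` so the sketch compiles on its own.
#[derive(Clone, Copy, Debug, PartialEq, Eq, PartialOrd, Ord, Hash)]
pub struct Entity(pub u64);

/// Formerly `EntityBorrow`: any type with a single associated `Entity`.
pub trait ContainsEntity {
    fn entity(&self) -> Entity;
}

/// Formerly `TrustedEntityBorrow`.
///
/// # Safety
/// Implementors must compare equal and order exactly like the `Entity`
/// returned by `entity()` (paraphrased contract, assumed for this sketch).
pub unsafe trait EntityEquivalent: ContainsEntity + Eq {}

impl ContainsEntity for Entity {
    fn entity(&self) -> Entity {
        *self
    }
}
// SAFETY: `Entity` is trivially equivalent to itself.
unsafe impl EntityEquivalent for Entity {}

impl<T: ContainsEntity> ContainsEntity for &T {
    fn entity(&self) -> Entity {
        (**self).entity()
    }
}
// SAFETY: `&T` delegates its comparisons to `T`.
unsafe impl<T: EntityEquivalent> EntityEquivalent for &T {}

/// Hypothetical struct that contains an entity but is not equivalent to one,
/// so it implements only `ContainsEntity`.
pub struct Targeting {
    pub target: Entity,
    pub priority: u8,
}

impl ContainsEntity for Targeting {
    fn entity(&self) -> Entity {
        self.target
    }
}

/// Hypothetical input API: it accepts strict wrappers and pointers only,
/// which is why the bound is `EntityEquivalent` rather than `ContainsEntity`.
pub fn collect_ids<E: EntityEquivalent>(items: impl IntoIterator<Item = E>) -> Vec<Entity> {
    items.into_iter().map(|e| e.entity()).collect()
}
```

Under these assumptions, `collect_ids` accepts `Entity` and `&Entity`, while passing a `Targeting` fails to compile because it is not `EntityEquivalent`.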

# Objective

Unlike for their helper types, the import paths for

`unique_array::UniqueEntityArray`, `unique_slice::UniqueEntitySlice`,

`unique_vec::UniqueEntityVec`, `hash_set::EntityHashSet`,

`hash_map::EntityHashMap`, `index_set::EntityIndexSet`,

`index_map::EntityIndexMap` are quite redundant.

When looking at the structure of `hashbrown`, we can also see that while

both `HashSet` and `HashMap` have their own modules, the main types

themselves are re-exported to the crate level.

## Solution

Re-export the types in their shared `entity` parent module, and simplify

the imports where they're used.
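
The resulting layout can be sketched with placeholder types. The module and type names mirror the ones listed above, but the bodies are stand-ins (plain `u64` ids, an empty `Drain` helper) and are not the real `bevy_ecs` definitions.

```rust
pub mod entity {
    pub mod hash_set {
        use std::collections::HashSet;

        /// Placeholder for the real `EntityHashSet`; wraps plain `u64` ids here.
        #[derive(Debug, Default)]
        pub struct EntityHashSet(pub HashSet<u64>);

        /// Placeholder helper type; helpers keep their module-qualified path.
        #[derive(Debug, Default)]
        pub struct Drain;
    }

    // Re-export the main type at the `entity` level, similar to how
    // `hashbrown` re-exports `HashMap` and `HashSet` at its crate root.
    pub use self::hash_set::EntityHashSet;
}
```

With this in place, `entity::EntityHashSet` and `entity::hash_set::EntityHashSet` name the same type, so existing long paths keep working.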

# Objective

Fixes #18515

After the recent changes to system param validation, the panic message

for a missing resource is currently:

```

Encountered an error in system `missing_resource_error::res_system`: SystemParamValidationError { skipped: false }

```

Add the parameter type name and a descriptive message, improving the

panic message to:

```

Encountered an error in system `missing_resource_error::res_system`: SystemParamValidationError { skipped: false, message: "Resource does not exist", param: "bevy_ecs::change_detection::Res<missing_resource_error::MissingResource>" }

```

## Solution

Add fields to `SystemParamValidationError` for error context. Include

the `type_name` of the param and a message.

Store them as `Cow<'static, str>` and only format them into a friendly

string in the `Display` impl. This lets us create errors using a

`&'static str` with no allocation or formatting, while still supporting

runtime `String` values if necessary.

Add a unit test that verifies the panic message.
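
A minimal sketch of the `Cow<'static, str>` approach, assuming a simplified error type. `ParamValidationError` and its `invalid` constructor are hypothetical names for illustration, and the `Display` format is borrowed from the message proposed under Future Work rather than from the actual implementation.

```rust
use std::borrow::Cow;
use std::fmt;

/// Simplified stand-in for `SystemParamValidationError`.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct ParamValidationError {
    pub skipped: bool,
    pub message: Cow<'static, str>,
    pub param: Cow<'static, str>,
}

impl ParamValidationError {
    /// Builds a non-skipped error for param type `T`.
    /// A `&'static str` message is stored as `Cow::Borrowed` (no allocation);
    /// a runtime `String` is stored as `Cow::Owned`.
    pub fn invalid<T>(message: impl Into<Cow<'static, str>>) -> Self {
        Self {
            skipped: false,
            message: message.into(),
            param: Cow::Borrowed(std::any::type_name::<T>()),
        }
    }
}

impl fmt::Display for ParamValidationError {
    /// Formatting into a friendly string happens only here, on demand.
    fn fmt(&self, f: &mut fmt::Formatter<'_>) -> fmt::Result {
        write!(
            f,
            "Parameter `{}` failed validation: {}",
            self.param, self.message
        )
    }
}
```

The design choice is that constructing the error on the hot path costs nothing beyond copying two string slices, while `format!`-built messages remain possible when a caller needs them.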

## Future Work

If we change the default error handling to use `Display` instead of

`Debug`, and to use `ShortName` for the system name, the panic message

could be further improved to:

```

Encountered an error in system `res_system`: Parameter `Res<MissingResource>` failed validation: Resource does not exist

```

However, `BevyError` currently includes the backtrace in `Debug` but not

`Display`, and I didn't want to try to change that in this PR.

# Objective

This fixes `NonMesh` draw commands not receiving render-world entities

since

- https://github.com/bevyengine/bevy/pull/17698

This unbreaks item queries for queued non-mesh entities:

```rust

impl<P: PhaseItem> RenderCommand<P> for MyDrawCommand {
    type ItemQuery = Read<DynamicUniformIndex<SomeUniform>>;
    // ...
}

```

### Solution

Pass render entity to `NonMesh` draw commands instead of

`Entity::PLACEHOLDER`. This PR also introduces sorting of the `NonMesh`

bin keys like other types, which I assume is the intended behavior.

@pcwalton

## Testing

- Tested on a local project that extensively uses `NonMesh` items.

# Objective

- feature `shader_format_wesl` doesn't compile in Wasm

- once fixed, example `shader_material_wesl` doesn't work in WebGL2

## Solution

- remove special path handling when loading shaders. this seems like a

way to escape the asset folder which we don't want to allow, and can't

compile on android or wasm, and can't work on iOS (filesystem is rooted

there)

- pad material so that it's 16 bytes. I couldn't get conditional

compilation to work in wesl for type declaration, it fails to parse

- the shader renders the color `(0.0, 0.0, 0.0, 0.0)` when it's not a

polka dot. this renders as black on WebGPU/metal/..., and white on

WebGL2. change it to `(0.0, 0.0, 0.0, 1.0)` so that it's black

everywhere

* `submit_graph_commands` was incorrectly timing the command buffer

generation tasks as well, and not only the queue submission. Moved the

span to fix that.

* Added a new `command_buffer_generation_tasks` span as a parent for all

the individual command buffer generation tasks that don't run as part of

the Core3d span.

# Objective

Fixes https://github.com/bevyengine/bevy/issues/16586.

## Solution

- Free meshes before allocating new ones (so hopefully the existing

allocation is used, but it's not guaranteed since it might end up

getting used by a smaller mesh first).

- Keep track of modified render assets, and have the mesh allocator free

their allocations.

- Cleaned up some render asset code to make it more understandable,

since it took me several minutes to reverse engineer/remember how it was

supposed to work.

Long term we'll probably want to explicitly reuse allocations for

modified meshes that haven't grown in size, or do delta uploads using a

compute shader or something, but this is an easy fix for the near term.

## Testing

Ran the example provided in the issue. No crash after a few minutes, and

memory usage remains steady.

# Objective

Make it easier to short-circuit system parameter validation.

Simplify the API surface by combining `ValidationOutcome` with

`SystemParamValidationError`.

## Solution

Replace `ValidationOutcome` with `Result<(),

SystemParamValidationError>`. Move the docs from `ValidationOutcome` to

`SystemParamValidationError`.

Add a `skipped` field to `SystemParamValidationError` to distinguish the

`Skipped` and `Invalid` variants.

Use the `?` operator to short-circuit validation in tuples of system

params.
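
The short-circuiting can be sketched with stand-in types. `World`, `Param`, `ResLike` and `SingleLike` below are hypothetical simplifications, not the real `SystemParam` machinery; only the shape of the `Result`-based validation is taken from the description above.

```rust
/// Simplified stand-in for `SystemParamValidationError`.
#[derive(Debug, PartialEq, Eq)]
pub struct ValidationError {
    /// `true` means "skip the system quietly", `false` means "report an error".
    pub skipped: bool,
}

/// Hypothetical world state, just enough to make validation fail or succeed.
pub struct World {
    pub has_resource: bool,
    pub matching_entities: usize,
}

/// Hypothetical, heavily reduced version of param validation.
pub trait Param {
    fn validate(world: &World) -> Result<(), ValidationError>;
}

/// Behaves like `Res`: a missing resource is an error.
pub struct ResLike;
impl Param for ResLike {
    fn validate(world: &World) -> Result<(), ValidationError> {
        if world.has_resource {
            Ok(())
        } else {
            Err(ValidationError { skipped: false })
        }
    }
}

/// Behaves like `Single`: anything other than exactly one match skips the system.
pub struct SingleLike;
impl Param for SingleLike {
    fn validate(world: &World) -> Result<(), ValidationError> {
        if world.matching_entities == 1 {
            Ok(())
        } else {
            Err(ValidationError { skipped: true })
        }
    }
}

/// Tuples validate their members in order; `?` returns the first failure.
impl<A: Param, B: Param> Param for (A, B) {
    fn validate(world: &World) -> Result<(), ValidationError> {
        A::validate(world)?;
        B::validate(world)?;
        Ok(())
    }
}
```

In this sketch the first failing member decides the outcome, so `(ResLike, SingleLike)` and `(SingleLike, ResLike)` can report different errors for the same world.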

# Objective

- Fixes https://github.com/bevyengine/bevy/issues/17891

- Cherry-picked from https://github.com/bevyengine/bevy/pull/18411

## Solution

The `name` argument could either be made permanent (by removing the

`#[cfg(...)]` condition) or eliminated entirely. I opted to remove it,

as debugging a specific DDS texture edge case in GLTF files doesn't seem

necessary, and there isn't any other foreseeable need to have it.

## Migration Guide

- `Image::from_buffer()` no longer has a `name` argument that's only

present in debug builds when the `"dds"` feature is enabled. If you

happen to pass a name, remove it.

# Objective

When introduced, `Single` was intended to simply be silently skipped,

allowing for graceful and efficient handling of systems during invalid

game states (such as when the player is dead).

However, this also caused missing resources to *also* be silently

skipped, leading to confusing and very hard to debug failures. In

0.15.1, this behavior was reverted to a panic, making missing resources

easier to debug, but largely making `Single` (and `Populated`)

worthless, as they would panic during expected game states.

Ultimately, the consensus is that this behavior should differ on a

per-system-param basis. However, there was no sensible way to *do* that

before this PR.

## Solution

Swap `SystemParam::validate_param` from a `bool` to:

```rust

/// The outcome of system / system param validation,

/// used by system executors to determine what to do with a system.

pub enum ValidationOutcome {

/// All system parameters were validated successfully and the system can be run.

Valid,

/// At least one system parameter failed validation, and an error must be handled.

/// By default, this will result in a panic. See [crate::error] for more information.

///

/// This is the default behavior, and is suitable for system params that should *always* be valid,

/// either because sensible fallback behavior exists (like [`Query`]) or because

/// failures in validation should be considered a bug in the user's logic that must be immediately addressed (like [`Res`]).

Invalid,

/// At least one system parameter failed validation, but the system should be skipped due to [`ValidationBehavior::Skip`].

/// This is suitable for system params that are intended to only operate in certain application states, such as [`Single`].

Skipped,

}

```

Then, inside of each `SystemParam` implementation, return either Valid,

Invalid or Skipped.

Currently, only `Single`, `Option<Single>` and `Populated` use the

`Skipped` behavior. Other params (like resources) retain their current

failing behavior.

## Testing

Messed around with the fallible_params example. Added a pair of tests:

one for panicking when resources are missing, and another for properly

skipping `Single` and `Populated` system params.

## To do

- [x] get https://github.com/bevyengine/bevy/pull/18454 merged

- [x] fix the todo!() in the macro-powered tuple implementation (please

help 🥺)

- [x] test

- [x] write a migration guide

- [x] update the example comments

## Migration Guide

Various system and system parameter validation methods

(`SystemParam::validate_param`, `System::validate_param` and

`System::validate_param_unsafe`) now return and accept a

`ValidationOutcome` enum, rather than a `bool`. The previous `true`

values map to `ValidationOutcome::Valid`, while `false` maps to

`ValidationOutcome::Invalid`.

However, if you wrote a custom schedule executor, you should now respect

the new `ValidationOutcome::Skipped` variant, skipping any systems

whose validation was skipped. By contrast, `ValidationOutcome::Invalid`

systems should also be skipped, but you should call the

`default_error_handler` on them first, which by default will result in a

panic.

If you are implementing a custom `SystemParam`, you should consider

whether failing system param validation is an error or an expected

state, and choose between `Invalid` and `Skipped` accordingly. In Bevy

itself, `Single` and `Populated` now once again skip the system when

their conditions are not met. This is the 0.15.0 behavior, but stands in

contrast to the 0.15.1 behavior, where they would panic.
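
For custom executors, the handling described above might look roughly like this. The enum is redeclared locally so the sketch compiles on its own, and `Action`, `decide` and the `on_error` callback are hypothetical; in Bevy the callback's role is played by `default_error_handler`.

```rust
/// Local copy of the enum from this PR, so the sketch is self-contained.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum ValidationOutcome {
    Valid,
    Invalid,
    Skipped,
}

/// Hypothetical executor decision for one system.
#[derive(Debug, PartialEq, Eq)]
pub enum Action {
    Run,
    Skip,
}

/// Decides what to do with a system after validation.
/// `on_error` stands in for the default error handler.
pub fn decide(
    outcome: ValidationOutcome,
    system_name: &str,
    on_error: &mut dyn FnMut(&str),
) -> Action {
    match outcome {
        ValidationOutcome::Valid => Action::Run,
        // Expected state: skip quietly.
        ValidationOutcome::Skipped => Action::Skip,
        // Unexpected state: report first, then skip.
        ValidationOutcome::Invalid => {
            on_error(system_name);
            Action::Skip
        }
    }
}
```

Both failing outcomes skip the system; the only difference is whether the error handler is invoked first.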

---------

Co-authored-by: MiniaczQ <xnetroidpl@gmail.com>

Co-authored-by: Dmytro Banin <banind@cs.washington.edu>

Co-authored-by: Chris Russell <8494645+chescock@users.noreply.github.com>

# Objective

- This variable is unused and never populated. I searched for the

literal text of the const and got no hits.

## Solution

- Delete it!

## Testing

- None.

# Objective

There are two related problems here:

1. Users should be able to change the fallback behavior of *all*

ECS-based errors in their application by setting the

`GLOBAL_ERROR_HANDLER`. See #18351 for earlier work in this vein.

2. The existing solution (#15500) for customizing this behavior is high

on boilerplate, not global and adds a great deal of complexity.

The consensus is that the default behavior when a parameter fails

validation should be set based on the kind of system parameter in

question: `Single` / `Populated` should silently skip the system, but

`Res` should panic. Setting this behavior at the system level is a

bandaid that makes getting to that ideal behavior more painful, and can

mask real failures (if a resource is missing but you've ignored a system

to make the Single stop panicking you're going to have a bad day).

## Solution

I've removed the existing `ParamWarnPolicy`-based configuration, and

wired up the `GLOBAL_ERROR_HANDLER`/`default_error_handler` to the

various schedule executors to properly plumb through errors.

Additionally, I've done a small cleanup pass on the corresponding

example.

## Testing

I've run the `fallible_params` example, with both the default and a

custom global error handler. The former panics (as expected), and the

latter spams the error console with warnings 🥲

## Questions for reviewers

1. Currently, failed system param validation will result in endless

console spam. Do you want me to implement a solution for warn_once-style

debouncing somehow?

2. Currently, the error reporting for failed system param validation is

very limited: all we get is that a system param failed validation and

the name of the system. Do you want me to implement improved error

reporting by bubbling up errors in this PR?

3. There is broad consensus that the default behavior for failed system

param validation should be set on a per-system param basis. Would you

like me to implement that in this PR?

My gut instinct is that we absolutely want to solve 2 and 3, but it will

be much easier to do that work (and review it) if we split the PRs

apart.

## Migration Guide

`ParamWarnPolicy` and the `WithParamWarnPolicy` have been removed

completely. Failures during system param validation are now handled via

the `GLOBAL_ERROR_HANDLER`: please see the `bevy_ecs::error` module docs

for more information.

---------

Co-authored-by: MiniaczQ <xnetroidpl@gmail.com>

# Objective

Create new `NonSendMarker` that does not depend on `NonSend`.

Required, in order to accomplish #17682. In that issue, we are trying to

replace `!Send` resources with `thread_local!` in order to unblock the

resources-as-components effort. However, when we remove all the `!Send`

resources from a system, that allows the system to run on a thread other

than the main thread, which is against the design of the system. So this

marker gives us the control to require a system to run on the main

thread without depending on `!Send` resources.

## Solution

Create a new `NonSendMarker` to replace the existing one that does not

depend on `NonSend`.

## Testing

Other than running tests, I ran a few examples:

- `window_resizing`

- `wireframe`

- `volumetric_fog` (looks so cool)

- `rotation`

- `button`

There is a Mac/iOS-specific change and I do not have a Mac or iOS device

to test it. I am doubtful that it would cause any problems for 2

reasons:

1. The change is the same as the non-wasm change which I did test

2. The Pixel Eagle tests run Mac tests

But it wouldn't hurt if someone wanted to spin up an example that

utilizes the `bevy_render` crate, which is where the Mac/iOS change was.

## Migration Guide

If `NonSendMarker` is being used from `bevy_app::prelude::*`, replace it

with `bevy_ecs::system::NonSendMarker` or use it from

`bevy_ecs::prelude::*`. In addition to that, `NonSendMarker` does not

need to be wrapped like so:

```rust

fn my_system(_non_send_marker: Option<NonSend<NonSendMarker>>) {

...

}

```

Instead, it can be used without any wrappers:

```rust

fn my_system(_non_send_marker: NonSendMarker) {

...

}

```

---------

Co-authored-by: Chris Russell <8494645+chescock@users.noreply.github.com>

# Objective

Fixes https://github.com/bevyengine/bevy/issues/18366 which seems to

have a similar underlying cause to the already closed (but not fixed)

https://github.com/bevyengine/bevy/issues/16185.

## Solution

For Windows with the AMD vulkan driver, there was already a hack to

force serial command encoding, which prevented these issues. The Linux

version of the AMD vulkan driver seems to have similar issues to its

Windows counterpart, so I extended the hack to also cover AMD on Linux.

I also removed the mention of `wgpu` since it was already outdated, and

doesn't seem to be relevant to the core issue (the AMD driver being

buggy).

## Testing

- Did you test these changes? If so, how?

- I ran the `3d_scene` example, which on `main` produced the flickering

shadows on Linux with the amdvlk driver, while it no longer does with

the workaround applied.

- Are there any parts that need more testing?

- Not sure.

- How can other people (reviewers) test your changes? Is there anything

specific they need to know?

- Requires a Linux system with an AMD card and the AMDVLK driver.

- If relevant, what platforms did you test these changes on, and are

there any important ones you can't test?

- My change should only affect Linux, where I did test it.

# Objective

WESL was broken on windows.

## Solution

- Upgrade to `wesl_rs` 1.2.

- Fix path handling on windows.

- Improve example for khronos demo this week.

# Objective

Now that #13432 has been merged, it's important we update our reflected

types to properly opt into this feature. If we do not, then this could

cause issues for users downstream who want to make use of

reflection-based cloning.

## Solution

This PR is broken into 4 commits:

1. Add `#[reflect(Clone)]` on all types marked `#[reflect(opaque)]` that

are also `Clone`. This is mandatory as these types would otherwise cause

the cloning operation to fail for any type that contains it at any

depth.

2. Update the reflection example to suggest adding `#[reflect(Clone)]`

on opaque types.

3. Add `#[reflect(clone)]` attributes on all fields marked

`#[reflect(ignore)]` that are also `Clone`. This prevents the ignored

field from causing the cloning operation to fail.

Note that some of the types that contain these fields are also `Clone`,

and thus can be marked `#[reflect(Clone)]`. This makes the

`#[reflect(clone)]` attribute redundant. However, I think it's safer to

keep it marked in the case that the `Clone` impl/derive is ever removed.

I'm open to removing them, though, if people disagree.

4. Finally, I added `#[reflect(Clone)]` on all types that are also

`Clone`. While not strictly necessary, it enables us to reduce the

generated output since we can just call `Clone::clone` directly instead

of calling `PartialReflect::reflect_clone` on each variant/field. It

also means we benefit from any optimizations or customizations made in

the `Clone` impl, including directly dereferencing `Copy` values and

increasing reference counters.

Along with that change I also took the liberty of adding any missing

registrations that I saw could be applied to the type as well, such as

`Default`, `PartialEq`, and `Hash`. There were hundreds of these to

edit, though, so it's possible I missed quite a few.

That last commit is **_massive_**. There were nearly 700 types to

update. So it's recommended to review the first three before moving onto

that last one.

Additionally, I can break the last commit off into its own PR or into

smaller PRs, but I figured this would be the easiest way of doing it

(and in a timely manner since I unfortunately don't have as much time as

I used to for code contributions).

## Testing

You can test locally with a `cargo check`:

```

cargo check --workspace --all-features

```

Extracted from my DLSS branch.

## Changelog

* Added `source_texture` and `destination_texture` to

`PostProcessWrite`, in addition to the existing texture views.

# Objective

In its existing form, the clamping that's done in `camera_system`

doesn't work well when the `physical_position` of the associated

viewport is nonzero. In such cases, it may produce invalid viewport

rectangles (i.e. not lying inside the render target), which may result

in crashes during the render pass.

The goal of this PR is to eliminate this possibility by making the

clamping behavior always result in a valid viewport rectangle when

possible.

## Solution

Incorporate the `physical_position` information into the clamping

behavior. In particular, always cut off enough so that it's contained in

the render target rather than clamping it to the same dimensions as the

target itself. In weirder situations, still try to produce a valid

viewport rectangle to avoid crashes.
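
One way to picture the new clamping is the sketch below. It is an illustration under assumptions, not the code from this PR: `Viewport` and `clamp_viewport` are hypothetical names, sizes are plain `(u32, u32)` tuples, and the choice to pull an out-of-range position back to the last pixel is one possible reading of "weirder situations".

```rust
/// Hypothetical viewport in physical pixels.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub struct Viewport {
    pub position: (u32, u32),
    pub size: (u32, u32),
}

/// Clamps `vp` so that it lies fully inside a render target of size `target`.
/// Returns `None` when the target has no area, since no valid rectangle exists.
pub fn clamp_viewport(vp: Viewport, target: (u32, u32)) -> Option<Viewport> {
    if target.0 == 0 || target.1 == 0 {
        return None;
    }
    // Keep the origin inside the target (assumption: pull it back if needed).
    let x = vp.position.0.min(target.0 - 1);
    let y = vp.position.1.min(target.1 - 1);
    // Cut the size down to the space remaining after the origin,
    // instead of clamping it to the full target dimensions.
    let w = vp.size.0.min(target.0 - x).max(1);
    let h = vp.size.1.min(target.1 - y).max(1);
    Some(Viewport {
        position: (x, y),
        size: (w, h),
    })
}
```

Because the size is limited by `target - position`, the sum `position + size` never exceeds the target on either axis.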

## Testing

Tested these changes on my work branch where I encountered the crash.

# Objective

The `ViewportConversionError` error type does not implement `Error`,

making it incompatible with `BevyError`.

## Solution

Derive `Error` for `ViewportConversionError`.

I chose to use `thiserror` since it's already a dependency, but do let

me know if we should be preferring `derive_more`.

## Testing

You can test this by trying to compile the following:

```rust

let error: BevyError = ViewportConversionError::InvalidData.into();

```

# Objective

Prevents duplicate implementation between IntoSystemConfigs and

IntoSystemSetConfigs using a generic, adds a NodeType trait for more

config flexibility (opening the door to implement

https://github.com/bevyengine/bevy/issues/14195?).

## Solution

Followed writeup by @ItsDoot:

https://hackmd.io/@doot/rJeefFHc1x

Removes IntoSystemConfigs and IntoSystemSetConfigs, instead using

IntoNodeConfigs with generics.

## Testing

Pending

---

## Showcase

N/A

## Migration Guide

SystemSetConfigs -> NodeConfigs<InternedSystemSet>

SystemConfigs -> NodeConfigs<ScheduleSystem>

IntoSystemSetConfigs -> IntoNodeConfigs<InternedSystemSet, M>

IntoSystemConfigs -> IntoNodeConfigs<ScheduleSystem, M>

---------

Co-authored-by: Christian Hughes <9044780+ItsDoot@users.noreply.github.com>

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

# Objective

- #16883

- Improve the default behaviour of the exclusive fullscreen API.

## Solution

This PR changes the exclusive fullscreen window mode to require the type

`WindowMode::Fullscreen(MonitorSelection, VideoModeSelection)` and

removes `WindowMode::SizedFullscreen`. This API somewhat intentionally

more closely resembles Winit 0.31's upcoming fullscreen and video mode

API.

The new VideoModeSelection enum is specified as follows:

```rust

pub enum VideoModeSelection {

/// Uses the video mode that the monitor is already in.

Current,

/// Uses a given [`crate::monitor::VideoMode`]. A list of video modes supported by the monitor

/// is supplied by [`crate::monitor::Monitor::video_modes`].

Specific(VideoMode),

}

```

### Changing default behaviour

This might be contentious because it removes the previous behaviour of

`WindowMode::Fullscreen` which selected the highest resolution possible.

While the previous behaviour would be quite easy to re-implement as

additional options, or as an impl method on Monitor, I would argue that

this isn't an implementation that should be encouraged.

From the perspective of a Windows user, I prefer what the majority of

modern games do when entering fullscreen which is to preserve the OS's

current resolution settings, which allows exclusive fullscreen to be

entered faster, and to only have it change if I manually select it in

either the options of the game or the OS. The highest resolution

available is not necessarily what the user prefers.

I am open to changing this if I have just missed a good use case for it.

Likewise, the only functionality that `WindowMode::SizedFullscreen`

provided was that it selected the resolution closest to the current size

of the window so it was removed since this behaviour can be replicated

via the new `VideoModeSelection::Specific` if necessary.

## Out of scope

WindowResolution and scale factor act strangely in exclusive fullscreen,

this PR doesn't address it or regress it.

## Testing

- Tested on Windows 11 and macOS 12.7

- Linux untested

## Migration Guide

`WindowMode::SizedFullscreen(MonitorSelection)` and

`WindowMode::Fullscreen(MonitorSelection)` have become

`WindowMode::Fullscreen(MonitorSelection, VideoModeSelection)`.

Previously, the VideoMode was selected based on the closest resolution

to the current window size for SizedFullscreen and the largest

resolution for Fullscreen. It is possible to replicate that behaviour by

searching `Monitor::video_modes` and selecting it with

`VideoModeSelection::Specific(VideoMode)` but it is recommended to use

`VideoModeSelection::Current` as the default video mode when entering

fullscreen.
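
If the previous selection behaviour is needed, it could be approximated along these lines. The `VideoMode` struct here is a reduced stand-in for the real one, `largest` and `closest_to` are hypothetical helpers, and the "closest" metric (sum of width and height differences) is an assumption rather than the exact rule Bevy used.

```rust
/// Reduced stand-in for a monitor video mode.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub struct VideoMode {
    pub physical_size: (u32, u32),
    pub refresh_rate_millihertz: u32,
}

/// Approximates the old `Fullscreen` behaviour: the mode with the most pixels,
/// with the refresh rate as a tie-breaker.
pub fn largest(modes: &[VideoMode]) -> Option<VideoMode> {
    modes.iter().copied().max_by_key(|m| {
        let area = m.physical_size.0 as u64 * m.physical_size.1 as u64;
        (area, m.refresh_rate_millihertz)
    })
}

/// Approximates the old `SizedFullscreen` behaviour: the mode whose size is
/// closest to the current window size.
pub fn closest_to(modes: &[VideoMode], window: (u32, u32)) -> Option<VideoMode> {
    modes.iter().copied().min_by_key(|m| {
        let dw = m.physical_size.0.abs_diff(window.0) as u64;
        let dh = m.physical_size.1.abs_diff(window.1) as u64;
        dw + dh
    })
}
```

The chosen mode would then be passed as `VideoModeSelection::Specific(mode)`, with the list coming from `Monitor::video_modes`.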

PR #17965 mistakenly made the `AsBindGroup` macro no longer emit a bind

group layout entry and a resource descriptor for buffers. This commit

adds that functionality back, fixing the `shader_material_bindless`

example.

Closes #18124.

# Objective

- In `Camera::viewport_to_world_2d`, `Camera::viewport_to_world`,

`Camera::world_to_viewport` and `Camera::world_to_viewport_with_depth`,

the results were incorrect when the `Camera::viewport` field was

configured with a viewport position that was non-zero. This PR attempts

to correct that.

- Fixes #16200

## Solution

- This PR now takes the viewport position into account in the functions

mentioned above.

- Extended `2d_viewport_to_world` example to test the functions with a

dynamic viewport position and size, camera positions and zoom levels. It

is probably worth discussing whether to change the example, add a new

one or just completely skip touching the examples.
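
The core of the correction can be illustrated with a small standalone function. This is a sketch of the idea only: `viewport_to_ndc` is a hypothetical helper, positions are plain tuples, and the Y flip assumes window coordinates grow downward while normalized device coordinates grow upward.

```rust
/// Converts a position in window space to normalized device coordinates
/// (each axis in [-1, 1]) for a viewport that starts at `vp_min` and has size
/// `vp_size`. Assumes both components of `vp_size` are nonzero.
pub fn viewport_to_ndc(pos: (f32, f32), vp_min: (f32, f32), vp_size: (f32, f32)) -> (f32, f32) {
    // The missing step: make the position relative to the viewport origin.
    let local = (pos.0 - vp_min.0, pos.1 - vp_min.1);
    let x = local.0 / vp_size.0 * 2.0 - 1.0;
    // Flip Y: top of the viewport maps to +1, bottom to -1.
    let y = 1.0 - local.1 / vp_size.1 * 2.0;
    (x, y)
}
```

Without the subtraction, a viewport whose origin is not at `(0, 0)` would map its own center away from NDC `(0, 0)`, which matches the incorrect results described in the objective.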

## Testing

Used the modified example to test the functions with dynamic camera

transform as well as dynamic viewport size and position.

# Objective

Because `prepare_assets::<T>` had a mutable reference to the

`RenderAssetBytesPerFrame` resource, no render asset preparation could

happen in parallel. This PR fixes this by using an `AtomicUsize` to

count bytes written (if there's a limit in place), so that the system

doesn't need mutable access.

- Related: https://github.com/bevyengine/bevy/pull/12622
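
The counting scheme can be sketched as follows. `BytesPerFrame` is a hypothetical, simplified stand-in for `RenderAssetBytesPerFrame`; the method names and the use of `Ordering::Relaxed` are assumptions made for this illustration.

```rust
use std::sync::atomic::{AtomicUsize, Ordering};

/// Simplified per-frame byte budget that can be updated through `&self`.
pub struct BytesPerFrame {
    max_bytes: Option<usize>,
    written: AtomicUsize,
}

impl BytesPerFrame {
    pub fn new(max_bytes: Option<usize>) -> Self {
        Self {
            max_bytes,
            written: AtomicUsize::new(0),
        }
    }

    /// Records `bytes` as written. Only counts when a limit is in place.
    /// Takes `&self`, so callers on several threads do not need exclusive access.
    pub fn write_bytes(&self, bytes: usize) {
        if self.max_bytes.is_some() {
            self.written.fetch_add(bytes, Ordering::Relaxed);
        }
    }

    /// Bytes counted so far this frame.
    pub fn written(&self) -> usize {
        self.written.load(Ordering::Relaxed)
    }

    /// Returns `true` once the budget for this frame has been used up.
    pub fn exhausted(&self) -> bool {
        match self.max_bytes {
            Some(max) => self.written() >= max,
            None => false,
        }
    }

    /// Resets the counter at the start of a frame; exclusive access is fine here.
    pub fn reset(&mut self) {
        *self.written.get_mut() = 0;
    }
}
```

Since only a counter is shared, a relaxed `fetch_add` is enough in this sketch; no other memory is published through the atomic.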

**Before**

<img width="1049" alt="Screenshot 2025-02-17 at 11 40 53 AM"

src="https://github.com/user-attachments/assets/040e6184-1192-4368-9597-5ceda4b8251b"

/>

**After**

<img width="836" alt="Screenshot 2025-02-17 at 1 38 37 PM"

src="https://github.com/user-attachments/assets/95488796-3323-425c-b0a6-4cf17753512e"

/>

## Testing

- Tested on a local project (with and without limiting enabled)

- Someone with more knowledge of wgpu/underlying driver guts should

confirm that this doesn't actually bite us by introducing contention

(i.e. if buffer writing really *should be* serial).

# Objective

- Allow bevy and wgpu developers to test newer versions of wgpu without

having to update naga_oil.

## Solution

- Currently bevy feeds wgsl through naga_oil to get a naga::Module that

it passes to wgpu.

- Added a way to pass wgsl through naga_oil, and then serialize the

naga::Module back into a wgsl string to feed to wgpu, allowing wgpu to

parse it using its internal version of naga (and not the version of

naga bevy_render/naga_oil is using).

## Testing

1. Run 3d_scene (it works)

2. Run 3d_scene with `--features bevy_render/decoupled_naga` (it still

works)

3. Add the following patch to bevy/Cargo.toml, run cargo update, and

compile again (it will fail)

```toml

[patch.crates-io]

wgpu = { git = "https://github.com/gfx-rs/wgpu", rev = "2764e7a39920e23928d300e8856a672f1952da63" }

wgpu-core = { git = "https://github.com/gfx-rs/wgpu", rev = "2764e7a39920e23928d300e8856a672f1952da63" }

wgpu-hal = { git = "https://github.com/gfx-rs/wgpu", rev = "2764e7a39920e23928d300e8856a672f1952da63" }

wgpu-types = { git = "https://github.com/gfx-rs/wgpu", rev = "2764e7a39920e23928d300e8856a672f1952da63" }

```

4. Fix errors and compile again (it will work, and you didn't have to

touch naga_oil)

# Objective

WESL's pre-MVP `0.1.0` has been

[released](https://docs.rs/wesl/latest/wesl/)!

Add support for WESL shader source so that we can begin playing and

testing WESL, as well as aiding in their development.

## Solution

Adds a `ShaderSource::WESL` that can be used to load `.wesl` shaders.

Right now, we don't support mixing `naga-oil`. Additionally, WESL

shaders currently need to pass through the naga frontend, which the WESL

team is aware isn't great for performance (they're working on compiling

into naga modules). Also, since our shaders are managed using the asset

system, we don't currently support using file based imports like `super`

or package scoped imports. Further work will be needed to assess how we

want to support this.

---

## Showcase

See the `shader_material_wesl` example. Be sure to press space to

activate party mode (trigger conditional compilation)!

https://github.com/user-attachments/assets/ec6ad19f-b6e4-4e9d-a00f-6f09336b08a4

# Objective

- Contributes to #15460

- Supersedes #8520

- Fixes #4906

## Solution

- Added a new `web` feature to `bevy`, and several of its crates.

- Enabled new `web` feature automatically within crates without `no_std`

support.

## Testing

- `cargo build --no-default-features --target wasm32v1-none`

---

## Migration Guide

When using Bevy crates which _don't_ automatically enable the `web`

feature, please enable it when building for the browser.

## Notes

- I added [`cfg_if`](https://crates.io/crates/cfg-if) to help manage

some of the feature gate gore that this extra feature introduces. It's

still pretty ugly, but I think much easier to read.

- Certain `wasm` targets (e.g.,

[wasm32-wasip1](https://doc.rust-lang.org/nightly/rustc/platform-support/wasm32-wasip1.html#wasm32-wasip1))

provide an incomplete implementation for `std`. I have not tested these

platforms, but I suspect Bevy's liberal use of usually unsupported

features (e.g., threading) will cause these targets to fail. As such,

consider `wasm32-unknown-unknown` as the only `wasm` platform with

support from Bevy for `std`. All others likely will need to be treated

as `no_std` platforms.

# Objective

- Fixes #15460 (will open new issues for further `no_std` efforts)

- Supersedes #17715

## Solution

- Threaded in new features as required

- Made certain crates optional but default enabled

- Removed `compile-check-no-std` from internal `ci` tool since GitHub CI

can now simply check `bevy` itself

- Added CI task to check `bevy` on `thumbv6m-none-eabi` to ensure

`portable-atomic` support is still valid [^1]

[^1]: This may be controversial, since it could be interpreted as

implying Bevy will maintain support for `thumbv6m-none-eabi` going

forward. In reality, just like `x86_64-unknown-none`, this is a

[canary](https://en.wiktionary.org/wiki/canary_in_a_coal_mine) target to

make it clear when `portable-atomic` no longer works as intended (fixing

atomic support on atomically challenged platforms). If a PR comes

through and makes supporting this class of platforms impossible, then

this CI task can be removed. I however wager this won't be a problem.

## Testing

- CI

---

## Release Notes

Bevy now has support for `no_std` directly from the `bevy` crate.

Users can disable default features and enable a new `default_no_std`

feature instead, allowing `bevy` to be used in `no_std` applications and

libraries.

```toml

# Bevy for `no_std` platforms

bevy = { version = "0.16", default-features = false, features = ["default_no_std"] }

```

`default_no_std` enables certain required features, such as `libm` and

`critical-section`, and as many optional crates as possible (currently

just `bevy_state`). For atomically-challenged platforms such as the

Raspberry Pi Pico, `portable-atomic` will be used automatically.

For library authors, we recommend depending on `bevy` with

`default-features = false` to allow `std` and `no_std` users to both

depend on your crate. Here are some recommended features a library crate

may want to expose:

```toml

[features]

# Most users will be on a platform which has `std` and can use the more-powerful `async_executor`.

default = ["std", "async_executor"]

# Features for typical platforms.

std = ["bevy/std"]

async_executor = ["bevy/async_executor"]

# Features for `no_std` platforms.

libm = ["bevy/libm"]

critical-section = ["bevy/critical-section"]

[dependencies]

# We disable default features to ensure we don't accidentally enable `std` on `no_std` targets, for example.

bevy = { version = "0.16", default-features = false }

```

While this is verbose, it gives the maximum control to end-users to

decide how they wish to use Bevy on their platform.

We encourage library authors to experiment with `no_std` support. For

libraries relying exclusively on `bevy` and no other dependencies, it

may be as simple as adding `#![no_std]` to your `lib.rs` and exposing

features as above! Bevy can also provide many `std` types, such as

`HashMap`, `Mutex`, and `Instant` on all platforms. See

`bevy::platform_support` for details on what's available out of the box!

## Migration Guide

- If you were previously relying on `bevy` with default features

disabled, you may need to enable the `std` and `async_executor`

features.

- `bevy_reflect` has had its `bevy` feature removed. If you were relying

on this feature, simply enable `smallvec` and `smol_str` instead.

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

## Objective

`insert_or_spawn_batch` is due to be deprecated eventually (#15704), and

removing uses internally will make that easier.

## Solution

Replaced internal uses of `insert_or_spawn_batch` with

`try_insert_batch` (non-panicking variant because

`insert_or_spawn_batch` didn't panic).

All of the internal uses are in rendering code. Since retained rendering

was meant to get rid of non-opaque entity IDs, I assume the code was just

using `insert_or_spawn_batch` because `insert_batch` didn't exist and

not because it actually wanted to spawn something. However, I am *not*

confident in my ability to judge rendering code.

# Objective

Component `require()` IDE integration is fully broken, as of #16575.

## Solution

This reverts us back to the previous "put the docs on Component trait"

impl. This _does_ reduce the accessibility of the required components in

rust docs, but the complete erasure of "required component IDE

experience" is not worth the price of slightly increased prominence of

requires in docs.

Additionally, Rust Analyzer has recently started including derive

attributes in suggestions, so we aren't losing that benefit of the

proc_macro attribute impl.

# Objective

Fix unsound query transmutes on queries obtained from

`Query::as_readonly()`.

The following compiles, and the call to `transmute_lens()` should panic,

but does not:

```rust

fn bad_system(query: Query<&mut A>) {
    let mut readonly = query.as_readonly();
    let mut lens: QueryLens<&mut A> = readonly.transmute_lens();
    let other_readonly: Query<&A> = query.as_readonly();
    // `lens` and `other_readonly` alias, and are both alive here!
}

```

To make `Query::as_readonly()` zero-cost, we pointer-cast

`&QueryState<D, F>` to `&QueryState<D::ReadOnly, F>`. This means that

the `component_access` for a read-only query's state may include

accesses for the original mutable version, but the `Query` does not have

exclusive access to those components! `transmute` and `join` use that

access to ensure that a join is valid, and will incorrectly allow a

transmute that includes mutable access.

As a bonus, allow `Query::join`s that output `FilteredEntityRef` or

`FilteredEntityMut` to receive access from the `other` query. Currently

they only receive access from `self`.

## Solution

When transmuting or joining from a read-only query, remove any writes

before checking that the transmute is valid. For joins, be

sure to handle the case where one input query was the result of

`as_readonly()` but the other has valid mutable access.

This requires identifying read-only queries, so add a

`QueryData::IS_READ_ONLY` associated constant. Note that we only call

`QueryState::as_transmuted_state()` with `NewD: ReadOnlyQueryData`, so

checking for read-only queries is sufficient to check for

`as_transmuted_state()`.

Removing writes requires allocating a new `FilteredAccess`, so only do

so if the query is read-only and the state has writes. Otherwise, the

existing access is correct and we can continue using a reference to it.
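
The "allocate only when needed" idea above can be sketched with `std::borrow::Cow`. This is a standalone model rather than Bevy's code: the `Access` struct, its `reads`/`writes` sets, and `effective_access` are hypothetical stand-ins for `FilteredAccess` and the internal logic, and turning each removed write into a plain read is an assumption made for illustration.

```rust
use std::borrow::Cow;
use std::collections::BTreeSet;

/// Hypothetical stand-in for `FilteredAccess`: component ids that are read or written.
#[derive(Clone, Debug, PartialEq)]
struct Access {
    reads: BTreeSet<u32>,
    writes: BTreeSet<u32>,
}

/// Returns the access that a transmute or join should be checked against.
/// A new `Access` is allocated only when the query is read-only *and* the
/// stored state still lists writes; otherwise the existing one is borrowed.
fn effective_access(access: &Access, is_read_only: bool) -> Cow<'_, Access> {
    if is_read_only && !access.writes.is_empty() {
        let mut downgraded = access.clone();
        // Assumption: a removed write is kept as a read.
        let writes = std::mem::take(&mut downgraded.writes);
        downgraded.reads.extend(writes);
        Cow::Owned(downgraded)
    } else {
        Cow::Borrowed(access)
    }
}
```

With this shape, the common case (a mutable query, or a read-only query whose state has no writes) pays nothing beyond a branch.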

Use the new read-only state to call `NewD::set_access`, so that

transmuting to a `FilteredEntityMut` results in a read-only
`FilteredEntityMut`. Otherwise, it would take the original write access,

and then the transmute would panic because it had too much access.

Note that `join` was previously passing `self.component_access` to

`NewD::set_access`. Switching it to `joined_component_access` also

allows a join that outputs `FilteredEntity(Ref|Mut)` to receive access

from `other`. The fact that it didn't do that before seems like an

oversight, so I didn't try to prevent that change.

## Testing

Added unit tests with the unsound transmute and join.

# Objective

As discussed in #14275, Bevy is currently too prone to panic: the easy,
beginner-friendly way to perform a large number of operations simply
panics on failure.

This is seriously frustrating in library code, but also slows down
development, as many of the `Query::single` panics can actually safely
be an early return (these panics are often due to a small ordering issue
or a change in game state).

More critically, in most "finished" products, panics are unacceptable:

any unexpected failures should be handled elsewhere. That's where the

new

With the advent of good system error handling, we can now remove this.

Note: I was instrumental in a) introducing this idea in the first place

and b) pushing to make the panicking variant the default. The

introduction of both `let else` statements in Rust and the fancy system

error handling work in 0.16 have changed my mind on the right balance

here.

## Solution

1. Make `Query::single` and `Query::single_mut` (and other random

related methods) return a `Result`.

2. Handle all of Bevy's internal usage of these APIs.

3. Deprecate `Query::get_single` and friends, since we've moved their

functionality to the nice names.

4. Add detailed advice on how to best handle these errors.

Generally I like the diff here, although `get_single().unwrap()` in

tests is a bit of a downgrade.

## Testing

I've done a global search for `.single` to track down any missed

deprecated usages.

As to whether or not all the migrations were successful, that's what CI

is for :)

## Future work

~~Rename `Query::get_single` and friends to `Query::single`!~~

~~I've opted not to do this in this PR, and smear it across two releases

in order to ease the migration. Successive deprecations are much easier

to manage than the semantics and types shifting under your feet.~~

Cart has convinced me to change my mind on this; see

https://github.com/bevyengine/bevy/pull/18082#discussion_r1974536085.

## Migration guide

`Query::single`, `Query::single_mut` and their `QueryState` equivalents

now return a `Result`. Generally, you'll want to:

1. Use Bevy 0.16's system error handling to return a `Result` using the

`?` operator.

2. Use a `let else Ok(data)` block to early return if it's an expected

failure.

3. Use `unwrap()` or `Ok` destructuring inside of tests.

The old `Query::get_single` (etc) methods which did this have been

deprecated.
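
The first two patterns can be sketched without Bevy itself. In this minimal model, `single` over a slice and the `SingleError` enum are hypothetical stand-ins for `Query::single` and its error type; only the control flow (`?` versus `let else`) is the point.

```rust
/// Hypothetical stand-in for the error returned by `Query::single`.
#[derive(Debug, PartialEq)]
enum SingleError {
    NoEntities,
    MultipleEntities,
}

/// Hypothetical stand-in for `Query::single`: succeeds only with exactly one item.
fn single<T: Copy>(items: &[T]) -> Result<T, SingleError> {
    match items {
        [] => Err(SingleError::NoEntities),
        [one] => Ok(*one),
        _ => Err(SingleError::MultipleEntities),
    }
}

/// Pattern 1: propagate the failure to the caller with `?`.
fn doubled_health(healths: &[u32]) -> Result<u32, SingleError> {
    let health = single(healths)?;
    Ok(health * 2)
}

/// Pattern 2: treat the failure as expected and return early with `let else`.
fn health_or_skip(healths: &[u32]) -> Option<u32> {
    let Ok(health) = single(healths) else {
        return None;
    };
    Some(health)
}
```

In a real system the first function would return Bevy's `Result` type and the second would simply `return;`.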

# Objective

There are currently three ways to access the parent stored on a ChildOf

relationship:

1. `child_of.parent` (field accessor)

2. `child_of.get()` (get function)

3. `**child_of` (Deref impl)

I will assert that we should only have one (the field accessor), and

that the existence of the other implementations causes confusion and

legibility issues. The deref approach is heinous, and `child_of.get()`

is significantly less clear than `child_of.parent`.

## Solution

Remove `impl Deref for ChildOf` and `ChildOf::get`.

The one "downside" I'm seeing is that:

```rust

entity.get::<ChildOf>().map(ChildOf::get)

```

Becomes this:

```rust

entity.get::<ChildOf>().map(|c| c.parent)

```

I strongly believe that this is worth the increased clarity and

consistency. I'm also not really a huge fan of the "pass function

pointer to map" syntax. I think most people don't think this way about

maps. They think in terms of a function that takes the item in the

Option and returns the result of some action on it.

## Migration Guide

```rust

// Before

**child_of

// After

child_of.parent

// Before

child_of.get()

// After

child_of.parent

// Before

entity.get::<ChildOf>().map(ChildOf::get)

// After

entity.get::<ChildOf>().map(|c| c.parent)

```

# Objective

Fixes #17896

## Solution

Change `ChildOf(Entity)` to `ChildOf { parent: Entity }`.

This also allows users to use structs with named fields for relationship
derives. When a struct with named fields has more than one field, the
macro looks for the field marked with the `#[relationship]` attribute,
and all of the other fields should implement the `Default` trait.
Unnamed fields are still supported.

When an unnamed (tuple) struct has more than one field, the macro will
fail.

Do we want to support something like this?

```rust

#[derive(Component)]

#[relationship_target(relationship = ChildOf)]

pub struct Children (#[relationship] Entity, u8);

```

I could add this, but it doesn't seem nice.

## Testing

crates/bevy_ecs - cargo test

## Showcase

```rust

use bevy_ecs::component::Component;
use bevy_ecs::entity::Entity;

#[derive(Component)]
#[relationship(relationship_target = Children)]
pub struct ChildOf {
    #[relationship]
    pub parent: Entity,
    internal: u8,
}

#[derive(Component)]
#[relationship_target(relationship = ChildOf)]
pub struct Children {
    children: Vec<Entity>,
}

```

---------

Co-authored-by: Tim Overbeek <oorbecktim@Tims-MacBook-Pro.local>

Co-authored-by: Tim Overbeek <oorbecktim@c-001-001-042.client.nl.eduvpn.org>

Co-authored-by: Tim Overbeek <oorbecktim@c-001-001-059.client.nl.eduvpn.org>

Co-authored-by: Tim Overbeek <oorbecktim@c-001-001-054.client.nl.eduvpn.org>

Co-authored-by: Tim Overbeek <oorbecktim@c-001-001-027.client.nl.eduvpn.org>

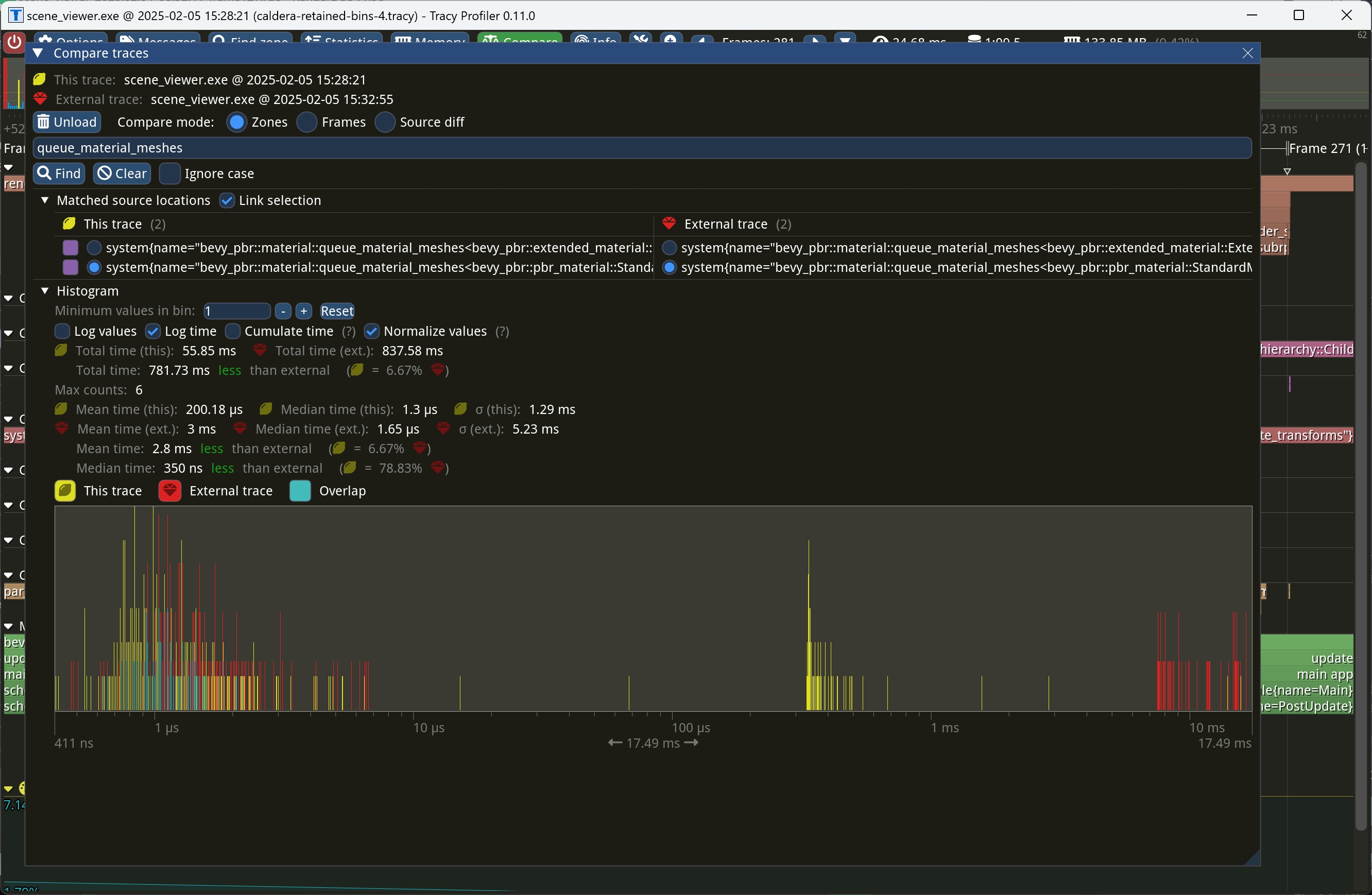

Even though opaque deferred entities aren't placed into the `Opaque3d`

bin, we still want to cache them as though they were, so that we don't

have to re-queue them every frame. This commit implements that logic,

reducing the time of `queue_material_meshes` to near-zero on Caldera.

Currently, the structure-level `#[uniform]` attribute of `AsBindGroup`

creates a binding array of individual buffers, each of which contains

data for a single material. A more efficient approach would be to

provide a single buffer with an array containing all of the data for all

materials in the bind group. Because `StandardMaterial` uses

`#[uniform]`, this can be notably inefficient with large numbers of

materials.

This patch introduces a new attribute on `AsBindGroup`, `#[data]`, which

works identically to `#[uniform]` except that it concatenates all the

data into a single buffer that the material bind group allocator itself

manages. It also converts `StandardMaterial` to use this new

functionality. This effectively provides the "material data in arrays"

feature.

# Objective

- Fixes #17960

## Solution

- Followed the [edition upgrade

guide](https://doc.rust-lang.org/edition-guide/editions/transitioning-an-existing-project-to-a-new-edition.html)

## Testing

- CI

---

## Summary of Changes

### Documentation Indentation

When using lists in documentation, proper indentation is now linted for.

This means subsequent lines within the same list item must start at the

same indentation level as the item.

```rust

/* Valid */
/// - Item 1
///   Run-on sentence.
/// - Item 2
struct Foo;

/* Invalid */
/// - Item 1
///     Run-on sentence.
/// - Item 2
struct Foo;

```

### Implicit `!` to `()` Conversion

`!` (the never return type, returned by `panic!`, etc.) no longer

implicitly converts to `()`. This is particularly painful for systems

with `todo!` or `panic!` statements, as they will no longer be functions

returning `()` (or `Result<()>`), making them invalid systems for

functions like `add_systems`. The ideal fix would be to accept functions

returning `!` (or rather, _not_ returning), but this is blocked on the

[stabilisation of the `!` type

itself](https://doc.rust-lang.org/std/primitive.never.html), which is

not done.

The "simple" fix would be to add an explicit `-> ()` to system

signatures (e.g., `|| { todo!() }` becomes `|| -> () { todo!() }`).

However, this is _also_ banned, as there is an existing lint which (IMO,

incorrectly) marks this as an unnecessary annotation.

So, the "fix" (read: workaround) is to put these kinds of `|| -> ! { ...

}` closures into variables and give the variable an explicit type (e.g.,

`fn()`).

```rust

// Valid

let system: fn() = || todo!("Not implemented yet!");

app.add_systems(..., system);

// Invalid

app.add_systems(..., || todo!("Not implemented yet!"));

```

### Temporary Variable Lifetimes

The order in which temporary variables are dropped has changed. The

simple fix here is _usually_ to just assign temporaries to a named

variable before use.

### `gen` is a keyword

We can no longer use the name `gen` as it is reserved for a future

generator syntax. This involved replacing uses of the name `gen` with

`r#gen` (the raw-identifier syntax).
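
A minimal sketch of the raw-identifier workaround, using a made-up function (the body is arbitrary and not taken from Bevy):

```rust
/// A function that used to be called `gen`. The raw identifier keeps the
/// old name usable once `gen` is a reserved keyword.
fn r#gen(seed: u32) -> u32 {
    seed.wrapping_mul(31).wrapping_add(7)
}

fn next_value(seed: u32) -> u32 {
    // Call sites have to use the raw identifier as well.
    r#gen(seed)
}
```

Renaming the item is the cleaner long-term fix; `r#gen` is mostly useful when the name is part of someone else's API.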

### Formatting has changed

Use statements have had the order of imports changed, causing a

substantial +/-3,000 diff when applied. For now, I have opted-out of

this change by amending `rustfmt.toml`:

```toml

style_edition = "2021"

```

This preserves the original formatting for now, reducing the size of

this PR. It would be a simple followup to update this to 2024 and run

`cargo fmt`.

### New `use<>` Opt-Out Syntax

Lifetimes are now implicitly included in RPIT types. There was a handful

of instances where it needed to be added to satisfy the borrow checker,

but there may be more cases where it _should_ be added to avoid

breakages in user code.
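
As a rough illustration of the opt-out (not code from this PR), the hypothetical function below returns an iterator that borrows nothing from its argument. `+ use<>` states that explicitly, so callers may keep the iterator after the borrowed value is gone. This assumes a toolchain that supports precise capturing (Rust 1.82 or later).

```rust
/// Returns an iterator that does not borrow from `limit`.
/// `+ use<>` declares that the returned type captures no generic
/// parameters, so the elided lifetime of `limit` is not part of it.
fn count_up_to(limit: &u32) -> impl Iterator<Item = u32> + use<> {
    let limit = *limit; // copy the value out so nothing is borrowed
    0..limit
}

fn collect_after_borrow_ends() -> Vec<u32> {
    let iter = {
        let limit = 3;
        // `limit` goes out of scope at the end of this block. The call is
        // only accepted because the iterator does not capture the borrow.
        count_up_to(&limit)
    };
    iter.collect()
}
```

Without the `use<>` bound, the 2024 edition would treat the return type as borrowing from `limit` and reject `collect_after_borrow_ends`.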

### `MyUnitStruct { .. }` is an invalid pattern

Previously, you could match against unit structs (and unit enum

variants) with a `{ .. }` destructuring. This is no longer valid.

### Pretty much every use of `ref` and `mut` are gone

Pattern binding has changed to the point where these terms are largely

unused now. They still serve a purpose, but it is far more niche now.

### `iter::repeat(...).take(...)` is bad

New lint recommends using the more explicit `iter::repeat_n(..., ...)`

instead.
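
For reference, the two forms side by side. The helper names are invented for this sketch, and `iter::repeat_n` needs Rust 1.82 or later.

```rust
use std::iter;

/// Older spelling: an endless iterator cut short by `take`.
fn repeated_old(text: &str, count: usize) -> String {
    iter::repeat(text).take(count).collect()
}

/// Spelling suggested by the lint: the count is part of the constructor.
fn repeated_new(text: &str, count: usize) -> String {
    iter::repeat_n(text, count).collect()
}
```

Both functions produce the same string for the same inputs.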

## Migration Guide

The lifetimes of functions using return-position impl-trait (RPIT) are

likely _more_ conservative than they had been previously. If you

encounter lifetime issues with such a function, please create an issue

to investigate the addition of `+ use<...>`.

## Notes

- Check the individual commits for a clearer breakdown for what

_actually_ changed.

---------

Co-authored-by: François Mockers <francois.mockers@vleue.com>

Two-phase occlusion culling can be helpful for shadow maps just as it

can for a prepass, in order to reduce vertex and alpha mask fragment

shading overhead. This patch implements occlusion culling for shadow

maps from directional lights, when the `OcclusionCulling` component is

present on the entities containing the lights. Shadow maps from point

lights are deferred to a follow-up patch. Much of this patch involves

expanding the hierarchical Z-buffer to cover shadow maps in addition to

standard view depth buffers.

The `scene_viewer` example has been updated to add `OcclusionCulling` to

the directional light that it creates.

This improved the performance of the rend3 sci-fi test scene when

enabling shadows.

Currently, Bevy's implementation of bindless resources is rather

unusual: every binding in an object that implements `AsBindGroup` (most

commonly, a material) becomes its own separate binding array in the

shader. This is inefficient for two reasons:

1. If multiple materials reference the same texture or other resource,

the reference to that resource will be duplicated many times. This

increases `wgpu` validation overhead.

2. It creates many unused binding array slots. This increases `wgpu` and

driver overhead and makes it easier to hit limits on APIs that `wgpu`

currently imposes tight resource limits on, like Metal.

This PR fixes these issues by switching Bevy to use the standard

approach in GPU-driven renderers, in which resources are de-duplicated

and passed as global arrays, one for each type of resource.

Along the way, this patch introduces per-platform resource limits and

bumps them from 16 resources per binding array to 64 resources per bind

group on Metal and 2048 resources per bind group on other platforms.

(Note that the number of resources per *binding array* isn't the same as

the number of resources per *bind group*; as it currently stands, if all

the PBR features are turned on, Bevy could pack as many as 496 resources

into a single slab.) The limits have been increased because `wgpu` now

has universal support for partially-bound binding arrays, which means

that we no longer need to fill the binding arrays with fallback

resources on Direct3D 12. The `#[bindless(LIMIT)]` declaration when

deriving `AsBindGroup` can now simply be written `#[bindless]` in order

to have Bevy choose a default limit size for the current platform.

Custom limits are still available with the new

`#[bindless(limit(LIMIT))]` syntax: e.g. `#[bindless(limit(8))]`.

The material bind group allocator has been completely rewritten. Now

there are two allocators: one for bindless materials and one for

non-bindless materials. The new non-bindless material allocator simply

maintains a 1:1 mapping from material to bind group. The new bindless

material allocator maintains a list of slabs and allocates materials

into slabs on a first-fit basis. This unfortunately makes its

performance O(number of resources per object * number of slabs), but the

number of slabs is likely to be low, and it's planned to become even

lower in the future with `wgpu` improvements. Resources are

de-duplicated within a slab and reference counted. So, for instance, if

multiple materials refer to the same texture, that texture will exist

only once in the appropriate binding array.
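
The first-fit, de-duplicating behaviour described above can be modelled in a few lines. This is a simplified sketch, not the allocator from this patch: `ResourceId`, `Slab`, and `SlabAllocator` are hypothetical names, a single capacity stands in for the per-resource-type limits, and freeing is omitted.

```rust
use std::collections::HashMap;

/// Hypothetical identifier for a texture, sampler, or buffer.
type ResourceId = u32;

struct Slab {
    capacity: usize,
    /// Each distinct resource occupies one slot and carries a reference count.
    ref_counts: HashMap<ResourceId, usize>,
}

impl Slab {
    fn new(capacity: usize) -> Self {
        Slab { capacity, ref_counts: HashMap::new() }
    }

    /// Only resources that are not already in the slab need new slots.
    fn can_fit(&self, resources: &[ResourceId]) -> bool {
        let mut missing: Vec<ResourceId> = resources
            .iter()
            .copied()
            .filter(|id| !self.ref_counts.contains_key(id))
            .collect();
        missing.sort_unstable();
        missing.dedup();
        self.ref_counts.len() + missing.len() <= self.capacity
    }

    fn insert(&mut self, resources: &[ResourceId]) {
        for id in resources {
            *self.ref_counts.entry(*id).or_insert(0) += 1;
        }
    }
}

struct SlabAllocator {
    slab_capacity: usize,
    slabs: Vec<Slab>,
}

impl SlabAllocator {
    fn new(slab_capacity: usize) -> Self {
        SlabAllocator { slab_capacity, slabs: Vec::new() }
    }

    /// First fit: scan the slabs in order and use the first one with room,
    /// creating a new slab only if none fits. Returns the slab index.
    /// Assumption: a material never needs more slots than one slab holds.
    fn allocate(&mut self, resources: &[ResourceId]) -> usize {
        let found = self.slabs.iter().position(|slab| slab.can_fit(resources));
        let index = match found {
            Some(index) => index,
            None => {
                self.slabs.push(Slab::new(self.slab_capacity));
                self.slabs.len() - 1
            }
        };
        self.slabs[index].insert(resources);
        index
    }
}
```

The scan over slabs combined with the per-resource lookup is where the O(number of resources per object * number of slabs) cost comes from.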

To support these new features, this patch adds the concept of a

*bindless descriptor* to the `AsBindGroup` trait. The bindless

descriptor allows the material bind group allocator to probe the layout

of the material, now that an array of `BindGroupLayoutEntry` records is

insufficient to describe the group. The `#[derive(AsBindGroup)]` has

been heavily modified to support the new features. The most important

user-facing change to that macro is that the struct-level `uniform`

attribute, `#[uniform(BINDING_NUMBER, StandardMaterial)]`, now reads

`#[uniform(BINDLESS_INDEX, MATERIAL_UNIFORM_TYPE, binding_array(BINDING_NUMBER))]`, allowing the material to specify the

binding number for the binding array that holds the uniform data.

To make this patch simpler, I removed support for bindless

`ExtendedMaterial`s, as well as field-level bindless uniform and storage

buffers. I intend to add back support for these as a follow-up. Because

they aren't in any released Bevy version yet, I figured this was OK.

Finally, this patch updates `StandardMaterial` for the new bindless

changes. Generally, code throughout the PBR shaders that looked like

`base_color_texture[slot]` now looks like

`bindless_2d_textures[material_indices[slot].base_color_texture]`.

This patch fixes a system hang that I experienced on the [Caldera test]

when running with `caldera --random-materials --texture-count 100`. The

time per frame is around 19.75 ms, down from 154.2 ms in Bevy 0.14: a

7.8× speedup.

[Caldera test]: https://github.com/DGriffin91/bevy_caldera_scene

This commit restructures the multidrawable batch set builder for better

performance in various ways:

* The bin traversal is optimized to make the best use of the CPU cache.

* The inner loop that iterates over the bins, which is the hottest part

of `batch_and_prepare_binned_render_phase`, has been shrunk as small as

possible.

* Where possible, multiple elements are added to or reserved from GPU

buffers as a batch instead of one at a time.

* Methods that LLVM wasn't inlining have been marked `#[inline]` where

doing so would unlock optimizations.

This code has also been refactored to avoid duplication between the

logic for indexed and non-indexed meshes via the introduction of a

`MultidrawableBatchSetPreparer` object.

Together, this improved the `batch_and_prepare_binned_render_phase` time

on Caldera by approximately 2×.

Eventually, we should optimize the batchable-but-not-multidrawable and

unbatchable logic as well, but these meshes are much rarer, so in the

interests of keeping this patch relatively small I opted to leave those

to a follow-up.

# Objective

Make checked vs unchecked shaders configurable

Fixes #17786

## Solution

Added `ValidateShaders` enum to `Shader` and added

`create_and_validate_shader_module` to `RenderDevice`

## Testing

I tested the shader examples locally and they all worked. I'd like to

write a few tests to verify but am unsure how to start.

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

The `check_visibility` system currently follows this algorithm:

1. Store all meshes that were visible last frame in the

`PreviousVisibleMeshes` set.

2. Determine which meshes are visible. For each such visible mesh,

remove it from `PreviousVisibleMeshes`.

3. Mark all meshes that remain in `PreviousVisibleMeshes` as invisible.

This algorithm would be correct if `check_visibility` were the only

system that marked meshes visible. However, it's not: the shadow-related

systems `check_dir_light_mesh_visibility` and

`check_point_light_mesh_visibility` can as well. This results in the

following sequence of events for meshes that are in a shadow map but

*not* visible from a camera:

A. `check_visibility` runs, finds that no camera contains these meshes,

and marks them hidden, which sets the changed flag.

B. `check_dir_light_mesh_visibility` and/or

`check_point_light_mesh_visibility` run, discover that these meshes

are visible in the shadow map, and mark them as visible, again

setting the `ViewVisibility` changed flag.

C. During the extraction phase, the mesh extraction system sees that

`ViewVisibility` is changed and re-extracts the mesh.

This is inefficient and results in needless work during rendering.

This patch fixes the issue in two ways:

* The `check_dir_light_mesh_visibility` and

`check_point_light_mesh_visibility` systems now remove meshes that they

discover from `PreviousVisibleMeshes`.

* Step (3) above has been moved from `check_visibility` to a separate

system, `mark_newly_hidden_entities_invisible`. This system runs after

all visibility-determining systems, ensuring that

`PreviousVisibleMeshes` contains only those meshes that truly became

invisible on this frame.
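
The corrected flow can be modelled with two sets. This is a sketch under simplifying assumptions, not the actual systems: `MeshId` and `VisibilityTracker` are hypothetical, and one `mark_visible` method stands in for both the camera and the shadow-map visibility systems.

```rust
use std::collections::HashSet;

/// Hypothetical mesh identifier.
type MeshId = u32;

#[derive(Default)]
struct VisibilityTracker {
    visible: HashSet<MeshId>,
    previous_visible: HashSet<MeshId>,
}

impl VisibilityTracker {
    /// Step 1: everything visible last frame becomes a candidate for hiding.
    fn begin_frame(&mut self) {
        self.previous_visible = std::mem::take(&mut self.visible);
    }

    /// Step 2: called by *every* visibility-determining system, including
    /// the shadow-map ones, so their meshes are no longer candidates.
    fn mark_visible(&mut self, mesh: MeshId) {
        self.visible.insert(mesh);
        self.previous_visible.remove(&mesh);
    }

    /// Step 3, run after all visibility systems: whatever is left was
    /// visible last frame and is not visible now.
    fn take_newly_hidden(&mut self) -> Vec<MeshId> {
        let mut hidden: Vec<MeshId> = self.previous_visible.drain().collect();
        hidden.sort_unstable();
        hidden
    }
}
```

A mesh that only a light can see is marked visible every frame, so it never reaches step 3 and its change flag is left alone.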

This fix dramatically improves the performance of [the Caldera

benchmark], when combined with several other patches I've submitted.

[the Caldera benchmark]:

https://github.com/DGriffin91/bevy_caldera_scene

PR #17688 broke motion vector computation, and therefore motion blur,

because it enabled retention of `MeshInputUniform`s, and

`MeshInputUniform`s contain the indices of the previous frame's

transform and the previous frame's skinned mesh joint matrices. On frame

N, if a `MeshInputUniform` is retained on GPU from the previous frame,

the `previous_input_index` and `previous_skin_index` would refer to the

indices for frame N - 2, not the index for frame N - 1.

This patch fixes the problems. It solves these issues in two different

ways, one for transforms and one for skins:

1. To fix transforms, this patch supplies the *frame index* to the

shader as part of the view uniforms, and specifies which frame index

each mesh's previous transform refers to. So, in the situation described

above, the frame index would be N, the previous frame index would be N -

1, and the `previous_input_frame_number` would be N - 2. The shader can

now detect this situation and infer that the mesh has been retained, and

can therefore conclude that the mesh's transform hasn't changed.

2. To fix skins, this patch replaces the explicit `previous_skin_index`

with an invariant that the index of the joints for the current frame and

the index of the joints for the previous frame are the same. This means

that the `MeshInputUniform` never has to be updated even if the skin is

animated. The downside is that we have to copy joint matrices from the

previous frame's buffer to the current frame's buffer in

`extract_skins`.

The rationale behind (2) is that we currently have no mechanism to

detect when joints that affect a skin have been updated, short of

comparing all the transforms and setting a flag for

`extract_meshes_for_gpu_building` to consume, which would regress

performance as we want `extract_skins` and

`extract_meshes_for_gpu_building` to be able to run in parallel.
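
The transform half of the fix, point (1), amounts to a small check. The sketch below writes that check in Rust rather than shader code; `MeshInput`, its fields other than `previous_input_frame_number`, and the use of a bare translation instead of a full transform are all assumptions made for illustration.

```rust
/// Simplified stand-in for a transform.
type Translation = [f32; 3];

/// Hypothetical subset of the per-mesh data the shader sees.
struct MeshInput {
    current: Translation,
    previous: Translation,
    /// The frame for which `previous` was recorded.
    previous_input_frame_number: u32,
}

/// Picks the transform to use as "previous" when computing motion vectors.
fn previous_translation(mesh: &MeshInput, frame_index: u32) -> Translation {
    let previous_frame = frame_index.wrapping_sub(1);
    if mesh.previous_input_frame_number == previous_frame {
        // The stored value really is from the previous frame.
        mesh.previous
    } else {
        // The uniform was retained from an older frame, so the mesh has
        // not moved since: the previous transform equals the current one.
        mesh.current
    }
}
```

A retained mesh therefore produces a zero motion vector instead of one based on stale data.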

To test this change, use `cargo run --example motion_blur`.

Currently, Bevy rebuilds the buffer containing all the transforms for

joints every frame, during the extraction phase. This is inefficient in

cases in which many skins are present in the scene and their joints

don't move, such as the Caldera test scene.

To address this problem, this commit switches skin extraction to use a

set of retained GPU buffers with allocations managed by the offset

allocator. I use fine-grained change detection in order to determine

which skins need updating. Note that the granularity is on the level of

an entire skin, not individual joints. Using the change detection at

that level would yield poor performance in common cases in which an

entire skin is animated at once. Also, this patch yields additional

performance from the fact that changing joint transforms no longer

requires the skinned mesh to be re-extracted.

Note that this optimization can be a double-edged sword. In

`many_foxes`, fine-grained change detection regressed the performance of

`extract_skins` by 3.4x. This is because every joint is updated every

frame in that example, so change detection is pointless and is pure

overhead. Because the `many_foxes` workload is actually representative

of animated scenes, this patch includes a heuristic that disables

fine-grained change detection if the number of transformed entities in

the frame exceeds a certain fraction of the total number of joints.

Currently, this threshold is set to 25%. Note that this is a crude

heuristic, because it doesn't distinguish between the number of

transformed *joints* and the number of transformed *entities*; however,

it should be good enough to yield the optimum code path most of the

time.
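
In code, the heuristic reduces to a single comparison. The function and constant names below are hypothetical; only the 25% figure comes from the description above.

```rust
/// Fraction of joints above which per-skin change detection is skipped.
const CHANGE_DETECTION_THRESHOLD: f64 = 0.25;

/// Returns `true` if fine-grained change detection is worth using this frame.
/// `transformed_entities` counts all transformed entities, not only joints,
/// which is why this is only a rough estimate.
fn use_fine_grained_change_detection(transformed_entities: usize, total_joints: usize) -> bool {
    (transformed_entities as f64) <= (total_joints as f64) * CHANGE_DETECTION_THRESHOLD
}
```

For example, with 100 joints the fine-grained path is kept for up to 25 transformed entities and dropped from 26 onwards.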

Finally, this patch fixes a bug whereby skinned meshes are actually

being incorrectly retained if the buffer offsets of the joints of those

skinned meshes change from frame to frame. To fix this without

retaining skins, we would have to re-extract every skinned mesh every

frame. Doing this was a significant regression on Caldera. With this PR,

by contrast, mesh joints stay at the same buffer offset, so we don't

have to update the `MeshInputUniform` containing the buffer offset every

frame. This also makes PR #17717 easier to implement, because that PR

uses the buffer offset from the previous frame, and the logic for

calculating that is simplified if the previous frame's buffer offset is

guaranteed to be identical to that of the current frame.

On Caldera, this patch reduces the time spent in `extract_skins` from

1.79 ms to near zero. On `many_foxes`, this patch regresses the

performance of `extract_skins` by approximately 10%-25%, depending on

the number of foxes. This has only a small impact on frame rate.

The GPU can fill out many of the fields in `IndirectParametersMetadata`

using information it already has:

* `early_instance_count` and `late_instance_count` are always

initialized to zero.

* `mesh_index` is already present in the work item buffer as the

`input_index` of the first work item in each batch.

This patch moves these fields to a separate buffer, the *GPU indirect

parameters metadata* buffer. That way, it avoids having to write them on

CPU during `batch_and_prepare_binned_render_phase`. This effectively

reduces the number of bits that that function must write per mesh from

160 to 64 (in addition to the 64 bits per mesh *instance*).

Additionally, this PR refactors `UntypedPhaseIndirectParametersBuffers`

to add another layer, `MeshClassIndirectParametersBuffers`, which allows

abstracting over the buffers corresponding to indexed and non-indexed

meshes. This patch doesn't make much use of this abstraction, but

forthcoming patches will, and it's overall a cleaner approach.

This didn't seem to have much of an effect by itself on

`batch_and_prepare_binned_render_phase` time, but subsequent PRs

dependent on this PR yield roughly a 2× speedup.

## Objective

There's no general error for when an entity doesn't exist, and some

methods are going to need one when they get Resultified. The closest

thing is `EntityFetchError`, but that error has a slightly more specific

purpose.

## Solution

- Added `EntityDoesNotExistError`.

- Contains `Entity` and `EntityDoesNotExistDetails`.

- Changed `EntityFetchError` and `QueryEntityError`:

  - Changed `NoSuchEntity` variant to wrap `EntityDoesNotExistError` and

    renamed the variant to `EntityDoesNotExist`.

  - Renamed `EntityFetchError` to `EntityMutableFetchError` to make its

    purpose clearer.

- Renamed `TryDespawnError` to `EntityDespawnError` to make it more

general.

- Changed `World::inspect_entity` to return `Result<[ok],

EntityDoesNotExistError>` instead of panicking.

- Changed `World::get_entity` and `WorldEntityFetch::fetch_ref` to

return `Result<[ok], EntityDoesNotExistError>` instead of `Result<[ok],

Entity>`.

- Changed `UnsafeWorldCell::get_entity` to return

`Result<UnsafeEntityCell, EntityDoesNotExistError>` instead of

`Option<UnsafeEntityCell>`.

## Migration Guide

- `World::inspect_entity` now returns `Result<impl Iterator<Item =

&ComponentInfo>, EntityDoesNotExistError>` instead of `impl

Iterator<Item = &ComponentInfo>`.

- `World::get_entity` now returns `EntityDoesNotExistError` as an error

instead of `Entity`. You can still access the entity's ID through the

error's `entity` field.

- `UnsafeWorldCell::get_entity` now returns `Result<UnsafeEntityCell,

EntityDoesNotExistError>` instead of `Option<UnsafeEntityCell>`.
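
A minimal sketch of what handling the new error might look like. It uses stand-in types rather than the real Bevy API: `Entity`, `World`, and `describe` here are hypothetical mock-ups, and only the `entity` field of `EntityDoesNotExistError` mentioned above is modeled.

```rust
use std::collections::HashMap;
use std::fmt;

/// Stand-in for Bevy's `Entity` (here just a numeric ID).
#[derive(Clone, Copy, Debug, PartialEq, Eq, Hash)]
struct Entity(u32);

/// Stand-in for `EntityDoesNotExistError`; only the `entity` field is modeled.
#[derive(Debug, PartialEq, Eq)]
struct EntityDoesNotExistError {
    entity: Entity,
}

impl fmt::Display for EntityDoesNotExistError {
    fn fmt(&self, f: &mut fmt::Formatter<'_>) -> fmt::Result {
        write!(f, "the entity {:?} does not exist", self.entity)
    }
}

impl std::error::Error for EntityDoesNotExistError {}

/// Hypothetical mock world that maps entities to a name.
struct World {
    names: HashMap<Entity, &'static str>,
}

impl World {
    /// Mirrors the new shape: the error wraps the entity instead of being the entity.
    fn get_entity(&self, entity: Entity) -> Result<&'static str, EntityDoesNotExistError> {
        self.names
            .get(&entity)
            .copied()
            .ok_or(EntityDoesNotExistError { entity })
    }
}

/// Callers that used the bare `Entity` error now read it from the `entity` field.
fn describe(world: &World, entity: Entity) -> String {
    match world.get_entity(entity) {
        Ok(name) => format!("found {name}"),
        Err(error) => format!("entity {:?} does not exist", error.entity),
    }
}

fn main() {
    let mut world = World { names: HashMap::new() };
    world.names.insert(Entity(1), "player");
    println!("{}", describe(&world, Entity(1)));
    println!("{}", describe(&world, Entity(7)));
}
```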

Appending to these vectors is performance-critical in

`batch_and_prepare_binned_render_phase`, so `RawBufferVec`, which

doesn't have the overhead of `encase`, is more appropriate.

The `collect_buffers_for_phase` system tries to reuse these buffers, but

its efforts are stymied by the fact that

`clear_batched_gpu_instance_buffers` clears the containing hash table

and therefore frees the buffers. This patch makes

`clear_batched_gpu_instance_buffers` stop doing that so that the

allocations can be reused.
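
The difference between the two clearing strategies can be illustrated with plain standard-library containers. This is only a sketch: `PhaseId` and both function names are hypothetical, and `Vec<u32>` stands in for the real GPU buffer types.

```rust
use std::collections::HashMap;

/// Hypothetical key identifying a render phase.
type PhaseId = u32;

/// Old behaviour: clearing the table drops every buffer and its allocation.
fn clear_by_dropping(buffers: &mut HashMap<PhaseId, Vec<u32>>) {
    buffers.clear();
}

/// New behaviour: keep the table entries and empty each buffer in place.
fn clear_in_place(buffers: &mut HashMap<PhaseId, Vec<u32>>) {
    for buffer in buffers.values_mut() {
        // `Vec::clear` sets the length to 0 but keeps the capacity.
        buffer.clear();
    }
}

fn main() {
    let mut buffers: HashMap<PhaseId, Vec<u32>> = HashMap::new();
    buffers.entry(0).or_default().extend(0..1024);
    let capacity_before = buffers[&0].capacity();

    clear_in_place(&mut buffers);
    assert!(buffers[&0].is_empty());
    assert_eq!(buffers[&0].capacity(), capacity_before);

    clear_by_dropping(&mut buffers);
    assert!(buffers.is_empty());
}
```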

There was nonsense code in `batch_and_prepare_sorted_render_phase` that

created temporary buffers to add objects to instead of using the correct

ones. I think this was debug code. This commit removes that code in

favor of writing to the actual buffers.

Closes #17846.

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

The `output_index` field is only used in direct mode, and the

`indirect_parameters_index` field is only used in indirect mode.

Consequently, we can combine them into a single field, reducing the size

of `PreprocessWorkItem`, which

`batch_and_prepare_{binned,sorted}_render_phase` must construct every

frame for every mesh instance, from 96 bits to 64 bits.
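
As a sketch of the size reduction, assuming all indices are `u32`: the struct names and the combined field name `output_or_indirect_parameters_index` are illustrative assumptions, not confirmed by this description.

```rust
use std::mem::size_of;

/// Layout before the change: three 32-bit indices (96 bits).
#[allow(dead_code)]
#[repr(C)]
struct WorkItemBefore {
    input_index: u32,
    /// Used only in direct mode.
    output_index: u32,
    /// Used only in indirect mode.
    indirect_parameters_index: u32,
}

/// Layout after the change: the two mode-specific indices share one slot (64 bits).
#[allow(dead_code)]
#[repr(C)]
struct WorkItemAfter {
    input_index: u32,
    /// Output index in direct mode, indirect parameters index in indirect mode.
    output_or_indirect_parameters_index: u32,
}

fn main() {
    println!("before: {} bits", size_of::<WorkItemBefore>() * 8);
    println!("after: {} bits", size_of::<WorkItemAfter>() * 8);
}
```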

Currently, invocations of `batch_and_prepare_binned_render_phase` and

`batch_and_prepare_sorted_render_phase` can't run in parallel because

they write to scene-global GPU buffers. After PR #17698,

`batch_and_prepare_binned_render_phase` started accounting for the

lion's share of the CPU time, causing us to be strongly CPU bound on

scenes like Caldera when occlusion culling was on (because of the

overhead of batching for the Z-prepass). Although I eventually plan to

optimize `batch_and_prepare_binned_render_phase`, we can obtain

significant wins now by parallelizing that system across phases.

This commit splits all GPU buffers that

`batch_and_prepare_binned_render_phase` and

`batch_and_prepare_sorted_render_phase` touch into separate buffers

for each phase so that the scheduler will run those phases in parallel.

At the end of batch preparation, we gather the render phases up into a

single resource with a new *collection* phase. Because we already run

mesh preprocessing separately for each phase in order to make occlusion

culling work, this is actually a cleaner separation. For example, mesh

output indices (the unique ID that identifies each mesh instance on GPU)

are now guaranteed to be sequential starting from 0, which will simplify

the forthcoming work to remove them in favor of the compute dispatch ID.

On Caldera, this brings the frame time down to approximately 9.1 ms with

occlusion culling on.
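
The idea can be sketched with scoped threads standing in for the Bevy scheduler. Everything here is hypothetical (`PhaseBuffers`, `prepare_phase`, `prepare_all`); the point is only that each phase writes to its own buffer, so no synchronization is needed until the final collection step, and output indices restart at 0 in every phase.

```rust
use std::thread;

/// Buffers owned by a single render phase (hypothetical stand-in).
#[derive(Debug, Default)]
struct PhaseBuffers {
    /// Output indices for this phase only; sequential starting from 0.
    output_indices: Vec<u32>,
}

/// Prepares one phase without touching any shared state.
fn prepare_phase(instance_count: u32) -> PhaseBuffers {
    PhaseBuffers {
        output_indices: (0..instance_count).collect(),
    }
}

/// Runs every phase on its own thread, then gathers the results
/// (the "collection" step) in phase order.
fn prepare_all(instance_counts: &[u32]) -> Vec<PhaseBuffers> {
    thread::scope(|scope| {
        // Spawn all workers first so that they actually run concurrently.
        let handles: Vec<_> = instance_counts
            .iter()
            .map(|&count| scope.spawn(move || prepare_phase(count)))
            .collect();
        handles
            .into_iter()
            .map(|handle| handle.join().expect("phase worker panicked"))
            .collect()
    })
}

fn main() {
    let phases = prepare_all(&[3, 2]);
    for (phase, buffers) in phases.iter().enumerate() {
        println!("phase {phase}: {:?}", buffers.output_indices);
    }
}
```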

Conceptually, bins are ordered hash maps. We currently implement these

as a list of keys with an associated hash map. But we already have a

data type that implements ordered hash maps directly: `IndexMap`. This

patch switches Bevy to use `IndexMap`s for bins. Because we're memory

bound, this doesn't affect performance much, but it is cleaner.

# Objective

- Wgpu has some expensive code it injects into shaders to avoid the

possibility of things like infinite loops. Generally our shaders are

written by users who won't do this, so it just makes our shaders perform

worse.

## Solution

- Turn off the checks.

- We could try to conditionally keep them, but that complicates the code

and 99.9% of users won't want this.

## Migration Guide

- Bevy no longer turns on wgpu's runtime safety checks (see

https://docs.rs/wgpu/latest/wgpu/struct.ShaderRuntimeChecks.html). If you

were using Bevy with untrusted shaders, please file an issue.

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

## Objective

Get rid of a redundant Cargo feature flag.

## Solution

Use the built-in `target_abi = "sim"` instead of a custom Cargo feature

flag, which is set for the iOS (and visionOS and tvOS) simulator. This

has been stable since Rust 1.78.
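
A minimal sketch of gating code on the built-in cfg, assuming Rust 1.78 or newer. The function names and returned strings are hypothetical and are not taken from the Bevy codebase.

```rust
/// Returns `true` only when compiled for a simulator target
/// (for example `aarch64-apple-ios-sim`).
fn running_in_simulator() -> bool {
    cfg!(target_abi = "sim")
}

/// Compiled only for simulator targets.
#[cfg(target_abi = "sim")]
fn configure_graphics() -> &'static str {
    "simulator workaround"
}

/// Compiled for every other target.
#[cfg(not(target_abi = "sim"))]
fn configure_graphics() -> &'static str {
    "default path"
}

fn main() {
    println!("simulator build: {}", running_in_simulator());
    println!("graphics setup: {}", configure_graphics());
}
```

Because the compiler sets `target_abi` from the target triple, no entry in `Cargo.toml` is needed to select the simulator path.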

In the future, some of this may become redundant if Wgpu implements

proper support for the iOS Simulator:

https://github.com/gfx-rs/wgpu/issues/7057

CC @mockersf who implemented [the original

fix](https://github.com/bevyengine/bevy/pull/10178).

## Testing

- Open mobile example in Xcode.

- Launch the simulator.

- See that no errors are emitted.

- Remove the code cfg-guarded behind `target_abi = "sim"`.

- See that an error now happens.

(I haven't actually performed these steps on the latest `main`, because

I'm hitting an unrelated error (EDIT: it was

https://github.com/bevyengine/bevy/pull/17637), but I tested it on

0.15.0.)

---

## Migration Guide

> If you're using a project that builds upon the mobile example, remove

the `ios_simulator` feature from your `Cargo.toml` (Bevy now handles

this internally).

Currently, we look up each `MeshInputUniform` index in a hash table that

maps the main entity ID to the index every frame. This is inefficient,

cache unfriendly, and unnecessary, as the `MeshInputUniform` index for

an entity remains the same from frame to frame (even if the input

uniform changes). This commit changes the `IndexSet` in the `RenderBin`

to an `IndexMap` that maps the `MainEntity` to `MeshInputUniformIndex`

(a new type that this patch adds for more type safety).

On Caldera with parallel `batch_and_prepare_binned_render_phase`, this

patch improves that function from 3.18 ms to 2.42 ms, a 31% speedup.
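
The before and after access patterns can be sketched roughly as follows. Standard-library containers stand in for `IndexSet` and `IndexMap` (a slice of pairs plays the role of the ordered map), `MainEntity` is reduced to a numeric wrapper, and both function names are hypothetical.

```rust
use std::collections::HashMap;

/// Stand-in for Bevy's `MainEntity` (here just a numeric ID).
#[derive(Clone, Copy, Debug, PartialEq, Eq, Hash)]
struct MainEntity(u64);

/// Newtype so an input-uniform index cannot be mixed up with other `u32` values.
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
struct MeshInputUniformIndex(u32);

/// Before: the bin holds only entities, so every frame pays one hash lookup each.
fn indices_via_lookup(
    bin: &[MainEntity],
    table: &HashMap<MainEntity, MeshInputUniformIndex>,
) -> Vec<MeshInputUniformIndex> {
    bin.iter()
        .filter_map(|entity| table.get(entity).copied())
        .collect()
}

/// After: the bin stores the index next to the entity, so a linear walk suffices.
fn indices_from_bin(bin: &[(MainEntity, MeshInputUniformIndex)]) -> Vec<MeshInputUniformIndex> {
    bin.iter().map(|&(_, index)| index).collect()
}

fn main() {
    let entities = [MainEntity(10), MainEntity(20)];
    let table: HashMap<_, _> = [
        (MainEntity(10), MeshInputUniformIndex(0)),
        (MainEntity(20), MeshInputUniformIndex(1)),
    ]
    .into_iter()
    .collect();
    let bin = [
        (MainEntity(10), MeshInputUniformIndex(0)),
        (MainEntity(20), MeshInputUniformIndex(1)),
    ];

    // Both strategies yield the same indices; only the access pattern differs.
    assert_eq!(indices_via_lookup(&entities, &table), indices_from_bin(&bin));
}
```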

Currently, when a mesh slab overflows, we recreate the allocator and

reinsert all the meshes that were in it in an arbitrary order. This can

result in the meshes moving around. Before `MeshInputUniform`s were

retained, this was slow but harmless, because the `MeshInputUniform`

that contained the positions of the vertex and index data in the slab

would be recreated every frame. However, with mesh retention, there's no

guarantee that the `MeshInputUniform`, which could be cached from the

previous frame, will reflect the new position of the mesh data within

the buffer if that buffer happened to grow. This manifested itself as

seeming mesh data corruption when adding many meshes dynamically to the

scene.

I can see three possible ways to fix this:

1. Invalidate and rebuild all the `MeshInputUniform`s belonging to all

meshes in a slab when that slab grows.

2. Introduce a second layer of indirection so that the

`MeshInputUniform` points to a *mesh allocation table* that contains the

current locations of the data of each mesh.

3. Avoid moving meshes when reallocating the buffer.

To be efficient, option (1) would require scanning meshes to see if

their positions changed, a la

`mark_meshes_as_changed_if_their_materials_changed`. Option (2) would

add more runtime indirection and would require additional bookkeeping on

the part of the allocator.

Therefore, this PR chooses option (3), which was remarkably simple to

implement. The key is that the offset allocator happens to allocate

addresses from low addresses to high addresses. So all we have to do is

to *conceptually* allocate the full 512 MiB mesh slab as far as the

offset allocator is concerned, and grow the underlying backing store

from 1 MiB to 512 MiB as needed. In other words, the allocator now

allocates *virtual* GPU memory, and the actual backing slab resizes to

fit the virtual memory. This ensures that the location of mesh data

remains constant for the lifetime of the mesh asset, and we can remove